Definition and Meaning

Bootstrapping autoregressions with conditional heteroskedasticity means applying bootstrap resampling to autoregressive time-series models whose error variance changes over time in response to past information (volatility clustering). This situation is common in macroeconomic and financial data, where the assumption of a constant error variance often does not hold. The standard residual-based bootstrap assumes independently and identically distributed (i.i.d.) errors, so it can give misleading inference in this setting. The methods described below are designed to remain asymptotically valid when the errors are conditionally heteroskedastic.
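
For concreteness, the setting can be written as an AR(p) model whose errors have zero conditional mean but a time-varying conditional variance. The notation (nu, phi_i, sigma_t^2, F_{t-1}) is ours, and the ARCH(1) line is only one illustrative volatility process, not a requirement of the methods.

```latex
\begin{aligned}
y_t &= \nu + \phi_1 y_{t-1} + \cdots + \phi_p y_{t-p} + u_t, \\
E(u_t \mid \mathcal{F}_{t-1}) &= 0, \qquad E(u_t^2 \mid \mathcal{F}_{t-1}) = \sigma_t^2, \\
\text{e.g. ARCH(1):}\quad \sigma_t^2 &= \omega + \alpha\, u_{t-1}^2, \qquad \omega > 0,\ 0 \le \alpha < 1,
\end{aligned}
```

Here F_{t-1} is the information available at time t-1. The i.i.d. residual bootstrap implicitly assumes sigma_t^2 = sigma^2 for every t, which is exactly what conditional heteroskedasticity rules out.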

How to Use Bootstrapping Autoregressions with Conditional Heteroskedasticity

Using these methods involves choosing a bootstrap scheme suited to the characteristics of your dataset. You might choose:

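- Fixed-Design Wild Bootstrap: Keeps the regressors (the observed lagged values) fixed and generates new dependent values by multiplying each residual by an independent random draw with mean zero and variance one, so each bootstrap error keeps the scale of the original residual at that date.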

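- Recursive-Design Wild Bootstrap: Applies the same residual perturbation but rebuilds the whole series recursively from the estimated autoregression, so the bootstrap lagged values are themselves generated by the model and the dynamic structure of the data is reproduced.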

- Pairwise Bootstrap: Resamples pairs consisting of an observation and its lags with replacement, which preserves any dependence between the regressors and the error variance without having to model it.
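
The three schemes differ only in how a bootstrap sample is generated. Below is a minimal sketch for an AR(1) with intercept using NumPy. The function names, the simulated ARCH(1) data, and the use of Rademacher multipliers (plus or minus one) for the wild bootstraps are our assumptions; Gaussian multipliers are another common choice. Each function returns one bootstrap sample (regressors, dependent variable), which is then re-estimated by least squares.

```python
import numpy as np


def simulate_ar1_arch1(n, nu=0.0, phi=0.5, omega=0.2, alpha=0.5, rng=None):
    """Hypothetical test data: AR(1) whose errors follow an ARCH(1) process."""
    rng = np.random.default_rng(rng)
    y = np.zeros(n)
    u = 0.0
    for t in range(1, n):
        u = np.sqrt(omega + alpha * u**2) * rng.standard_normal()  # sd depends on last shock
        y[t] = nu + phi * y[t - 1] + u
    return y


def ols_ar1(y):
    """Least-squares fit of y_t = nu + phi * y_{t-1} + u_t; returns (coef, X, residuals)."""
    X = np.column_stack([np.ones(len(y) - 1), y[:-1]])
    coef, *_ = np.linalg.lstsq(X, y[1:], rcond=None)
    return coef, X, y[1:] - X @ coef


def rademacher(n, rng):
    """Multipliers equal to +1 or -1 with probability 1/2 (mean 0, variance 1)."""
    return rng.choice([-1.0, 1.0], size=n)


def fixed_design_wild(coef, X, resid, rng):
    """Regressors stay at their observed values; only the residuals are perturbed."""
    y_star = X @ coef + resid * rademacher(len(resid), rng)
    return X, y_star


def recursive_design_wild(y, coef, resid, rng):
    """Rebuild the series from the fitted AR(1), feeding back bootstrap lags."""
    u_star = resid * rademacher(len(resid), rng)
    y_star = np.empty(len(y))
    y_star[0] = y[0]  # assumption: start from the observed initial value
    for t in range(1, len(y)):
        y_star[t] = coef[0] + coef[1] * y_star[t - 1] + u_star[t - 1]
    X_star = np.column_stack([np.ones(len(y) - 1), y_star[:-1]])
    return X_star, y_star[1:]


def pairwise(y, X, rng):
    """Resample (y_t, [1, y_{t-1}]) pairs with replacement."""
    idx = rng.integers(0, len(X), size=len(X))
    return X[idx], y[1:][idx]


rng = np.random.default_rng(42)
y = simulate_ar1_arch1(500, rng=rng)
coef, X, resid = ols_ar1(y)
samples = {
    "fixed-design wild": fixed_design_wild(coef, X, resid, rng),
    "recursive-design wild": recursive_design_wild(y, coef, resid, rng),
    "pairwise": pairwise(y, X, rng),
}
for name, (X_b, y_b) in samples.items():
    coef_b, *_ = np.linalg.lstsq(X_b, y_b, rcond=None)  # re-estimate on the bootstrap sample
    print(f"{name}: phi* = {coef_b[1]:.3f}")
```

In a full analysis each generator is called many times and the re-estimated coefficients are collected into a bootstrap distribution.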

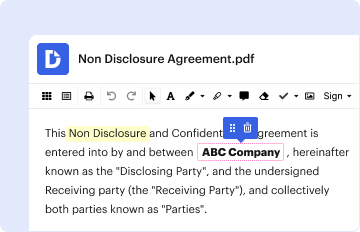

Steps to Complete the Bootstrapping Process

- Identify Data Characteristics: Check whether the residual variance depends on the past, for example by looking for clustering in squared residuals or by running an ARCH-LM test.

- Select Appropriate Bootstrap Method: Depending on the structure of the data, choose between the fixed-design wild, recursive-design wild, or pairwise bootstrap.

- Prepare Data and Estimate the Model: Clean and structure the series, choose the lag order, and fit the autoregression by least squares to obtain coefficient estimates and residuals.

- Run Bootstrap Analysis: Apply the chosen method to generate many bootstrap samples (often hundreds or thousands), re-estimating the model and any test statistic on each one.

- Interpret Results: Use the distribution of the bootstrap estimates to construct standard errors, confidence intervals, or p-values that account for the heteroskedasticity.
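
The steps above can be sketched end to end for an AR(1) with NumPy. Because the ARCH-LM check needs residuals, the sketch estimates the model first. The simulated data, the one-lag ARCH-LM test, the choice of the recursive-design wild bootstrap with Rademacher multipliers, and the plain percentile interval are all illustrative assumptions; percentile-t intervals based on robust standard errors are a common refinement.

```python
import numpy as np


def fit_ar1(y):
    """Least-squares AR(1) with intercept; returns (coef, residuals)."""
    X = np.column_stack([np.ones(len(y) - 1), y[:-1]])
    coef, *_ = np.linalg.lstsq(X, y[1:], rcond=None)
    return coef, y[1:] - X @ coef


def arch_lm_1(resid):
    """Engle's ARCH-LM statistic with one lag: n * R^2 of u_t^2 on [1, u_{t-1}^2]."""
    e2 = resid**2
    Z = np.column_stack([np.ones(len(e2) - 1), e2[:-1]])
    g, *_ = np.linalg.lstsq(Z, e2[1:], rcond=None)
    ssr = np.sum((e2[1:] - Z @ g) ** 2)
    sst = np.sum((e2[1:] - e2[1:].mean()) ** 2)
    return len(Z) * (1.0 - ssr / sst)


def recursive_wild_phi(y, coef, resid, n_boot, rng):
    """Bootstrap replicates of phi from the recursive-design wild bootstrap."""
    reps = np.empty(n_boot)
    for b in range(n_boot):
        u_star = resid * rng.choice([-1.0, 1.0], size=len(resid))  # Rademacher multipliers
        y_star = np.empty(len(y))
        y_star[0] = y[0]
        for t in range(1, len(y)):
            y_star[t] = coef[0] + coef[1] * y_star[t - 1] + u_star[t - 1]
        reps[b] = fit_ar1(y_star)[0][1]
    return reps


# Hypothetical data: AR(1) with phi = 0.5 and ARCH(1) errors (omega = 0.2, alpha = 0.5).
rng = np.random.default_rng(7)
y = np.zeros(300)
u = 0.0
for t in range(1, len(y)):
    u = np.sqrt(0.2 + 0.5 * u**2) * rng.standard_normal()
    y[t] = 0.5 * y[t - 1] + u

# Steps 1-3: estimate the model and check the residuals for ARCH effects.
coef, resid = fit_ar1(y)
lm = arch_lm_1(resid)
print(f"ARCH-LM(1) = {lm:.2f}; 5% critical value of chi-squared(1) is 3.84")

# Step 4: run the bootstrap (recursive-design wild chosen here).
reps = recursive_wild_phi(y, coef, resid, n_boot=499, rng=rng)

# Step 5: interpret via a 95% percentile interval for phi.
lo, hi = np.percentile(reps, [2.5, 97.5])
print(f"phi_hat = {coef[1]:.3f}, 95% percentile interval = [{lo:.3f}, {hi:.3f}]")
```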

Why Implement Bootstrapping for Autoregressions

Bootstrapping is important for accurate and reliable statistical inference in autoregressions whose error variance is not constant. Standard errors and tests derived under i.i.d. errors can be misleading when volatility clusters. The bootstrap approximates the sampling distribution of estimators and test statistics without imposing that assumption, which makes the econometric analysis more robust.
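
A quick way to see what the i.i.d. assumption changes is to compare the conventional least-squares standard error of the AR coefficient with White's heteroskedasticity-robust (HC0) standard error on data with ARCH errors. This is an analytic comparison rather than a bootstrap, and the simulated data and parameter values are hypothetical. A noticeable gap between the two is a warning sign for any inference that assumes i.i.d. errors, including the i.i.d. residual bootstrap.

```python
import numpy as np


def ols_with_two_ses(X, yv):
    """OLS coefficients with i.i.d.-based and White (HC0) standard errors."""
    coef, *_ = np.linalg.lstsq(X, yv, rcond=None)
    u = yv - X @ coef
    n, k = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    se_iid = np.sqrt(np.diag(XtX_inv) * (u @ u) / (n - k))  # assumes constant error variance
    meat = (X * (u**2)[:, None]).T @ X                       # sum of u_t^2 x_t x_t'
    se_hc0 = np.sqrt(np.diag(XtX_inv @ meat @ XtX_inv))       # robust to heteroskedasticity
    return coef, se_iid, se_hc0


# Hypothetical AR(1) data with ARCH(1) errors.
rng = np.random.default_rng(3)
y = np.zeros(1000)
u = 0.0
for t in range(1, len(y)):
    u = np.sqrt(0.2 + 0.5 * u**2) * rng.standard_normal()
    y[t] = 0.5 * y[t - 1] + u

X = np.column_stack([np.ones(len(y) - 1), y[:-1]])
coef, se_iid, se_hc0 = ols_with_two_ses(X, y[1:])
print(f"phi_hat = {coef[1]:.3f}, i.i.d. SE = {se_iid[1]:.3f}, HC0 SE = {se_hc0[1]:.3f}")
```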

Key Elements of Bootstrapping Applications

- Modeling Uncertainty: Provides a more realistic estimate of sampling variability, such as bootstrap standard errors.

- Bias Correction: The average of the bootstrap estimates can be used to estimate and remove the small-sample bias of least-squares autoregressive coefficients.

- Confidence Intervals: Produces intervals whose coverage is more reliable when heteroskedasticity is present.
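
Given a vector of bootstrap replicates of an autoregressive coefficient, produced by any of the schemes described earlier, all three elements reduce to simple summaries. The function name and the toy replicate values below are hypothetical. The bias correction is the standard rule of subtracting the estimated bias; for autoregressions it is sensible to also check that corrected coefficients remain in the stationary region.

```python
import numpy as np


def summarize_bootstrap(theta_hat, reps, level=0.95):
    """Standard error, bias correction, and percentile interval from bootstrap replicates."""
    reps = np.asarray(reps, dtype=float)
    se = reps.std(ddof=1)               # sampling variability
    bias = reps.mean() - theta_hat      # bootstrap estimate of the estimator's bias
    theta_bc = theta_hat - bias         # bias-corrected estimate, 2*theta_hat - mean(reps)
    tail = 100 * (1 - level) / 2
    lo, hi = np.percentile(reps, [tail, 100 - tail])
    return {"se": se, "bias": bias, "bias_corrected": theta_bc, "interval": (lo, hi)}


# Toy replicate values, made up only to show the arithmetic.
summary = summarize_bootstrap(0.50, [0.40, 0.42, 0.44, 0.46, 0.48])
print(summary["bias_corrected"])  # approximately 0.56
```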

Examples of Using Bootstrapping Autoregressions

- Macroeconomic Forecasting: When predicting GDP growth, bootstrapping can be used to construct forecast intervals that reflect time-varying volatility.

- Financial Risk Modeling: Helps assess the uncertainty of estimates based on asset returns when market volatility varies over time.

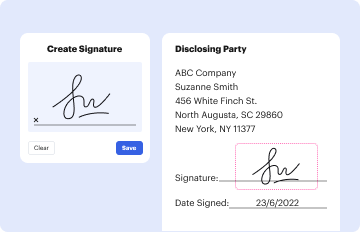

Important Terms and Definitions

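- Heteroskedasticity: An error variance that is not constant. In time series, conditional heteroskedasticity means the variance of the current error depends on past information, as in ARCH or GARCH models of volatility clustering.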

- Autoregression: A statistical model in which the current value of a series is regressed on its own past values.

- Bootstrap Method: A resampling technique that approximates the sampling distribution of a statistic by repeatedly drawing new samples from the data, classically by sampling with replacement.

State-Specific Rules and Considerations

Bootstrap techniques themselves apply globally. However, U.S. economic data, including state-level macroeconomic indicators, may reflect regulatory changes or shifts in fiscal policy that appear as structural breaks or changes in volatility. Such features should be handled in the model specification before bootstrapping, because they can significantly influence the results.

Software Compatibility for Bootstrapping Techniques

Several statistical software options facilitate bootstrapping autoregressions, including:

- R: The 'boot' package (function tsboot) and the 'tseries' package (function tsbootstrap) support time-series resampling.

- MATLAB: The Econometrics Toolbox supports time-series modeling, and the Statistics and Machine Learning Toolbox provides general bootstrap functions such as bootstrp.

- Python: 'statsmodels' estimates autoregressions (for example with AutoReg), and the 'arch' package provides volatility models and a bootstrap module (arch.bootstrap).

Versions and Alternatives

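Bootstrap methodology continues to develop. Alternatives include the parametric bootstrap, which simulates from a fully specified model (for example, an autoregression with GARCH errors), and the Bayesian bootstrap, which reweights observations with random weights instead of resampling them. These alternatives trade off different assumptions and computational costs depending on model complexity and data requirements.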
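
As a sketch of one alternative, the Bayesian bootstrap can be applied to the AR(1) regression by drawing Dirichlet(1, ..., 1) weights over the observation pairs and re-estimating by weighted least squares. Applied this way it can be viewed as a smoothed counterpart of the pairwise bootstrap. The function name, simulated data, and settings below are hypothetical, and this sketch does not cover the parametric bootstrap.

```python
import numpy as np


def bayesian_bootstrap_ar1(y, n_draws, rng):
    """Bayesian bootstrap draws of phi: Dirichlet(1, ..., 1) weights on (y_t, y_{t-1}) pairs."""
    X = np.column_stack([np.ones(len(y) - 1), y[:-1]])
    yv = y[1:]
    draws = np.empty(n_draws)
    for b in range(n_draws):
        w = rng.dirichlet(np.ones(len(yv)))  # random weights summing to 1, instead of resampling
        sw = np.sqrt(w)
        coef, *_ = np.linalg.lstsq(X * sw[:, None], yv * sw, rcond=None)  # weighted least squares
        draws[b] = coef[1]
    return draws


# Hypothetical AR(1) data with ARCH(1) errors.
rng = np.random.default_rng(11)
y = np.zeros(300)
u = 0.0
for t in range(1, len(y)):
    u = np.sqrt(0.2 + 0.5 * u**2) * rng.standard_normal()
    y[t] = 0.5 * y[t - 1] + u

draws = bayesian_bootstrap_ar1(y, n_draws=500, rng=rng)
print(f"95% interval for phi from the weighted draws: {np.percentile(draws, [2.5, 97.5]).round(3)}")
```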