Definition and Meaning of Average Redundancy in Shannon Code

The concept of average redundancy is central to the analysis of the Shannon code in information theory. It is the difference between the expected length of the encoded messages and the entropy of the source. Shannon's source coding theorem shows that the entropy is a lower bound on the expected length of any uniquely decodable code. Average redundancy therefore measures how many excess bits a coding scheme spends per symbol (or per block). Claude Shannon's pioneering work laid the foundation for data compression and showed that redundancy can be reduced without any loss of information.
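
As a minimal sketch, assume a memoryless source with known, strictly positive symbol probabilities. The Shannon code gives symbol x a codeword of length ℓ(x) = ⌈−log₂ p(x)⌉, and the average redundancy is R = Σ p(x)ℓ(x) − H. Because −log₂ p(x) ≤ ℓ(x) < −log₂ p(x) + 1, R always lies in [0, 1) bits per symbol. The function names below are illustrative and are not taken from the PDF.

```python
import math

def shannon_lengths(probs):
    """Shannon code lengths l(x) = ceil(-log2 p(x)); assumes every p(x) > 0."""
    return [math.ceil(-math.log2(p)) for p in probs]

def entropy(probs):
    """Entropy H(P) in bits, skipping zero-probability symbols."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def average_redundancy(probs):
    """Average redundancy E[l(X)] - H(P) of the Shannon code, in bits per symbol."""
    lengths = shannon_lengths(probs)
    avg_len = sum(p * l for p, l in zip(probs, lengths))
    return avg_len - entropy(probs)

# Dyadic probabilities: every -log2 p(x) is an integer, so the redundancy is zero.
print(average_redundancy([0.5, 0.25, 0.125, 0.125]))
# Non-dyadic probabilities: the rounding up in ceil() costs extra bits.
print(round(average_redundancy([0.4, 0.3, 0.2, 0.1]), 4))
```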

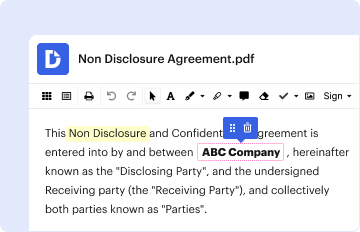

How to Use the (PDF) Average Redundancy of the Shannon Code for Markov

Using the PDF on average redundancy requires understanding how the Shannon code behaves on Markov sources, where the probability of each symbol depends on the symbol before it. Readers typically draw on this material for academic research, engineering work, or the design of communication systems. With these concepts, you can develop strategies that improve data compression and reduce bandwidth usage. The document is a useful reference for professionals and students exploring coding theory in a Markovian setting.
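
One way to make this concrete is to apply the Shannon code to whole blocks x₁…xₙ of a Markov source. Each block gets length ℓ(x₁ⁿ) = ⌈−log₂ P(x₁ⁿ)⌉, and the block redundancy is Rₙ = E[ℓ(X₁ⁿ)] − H(X₁ⁿ). The sketch below assumes a first-order chain given by an initial distribution and a transition matrix. It computes Rₙ by brute-force enumeration of all blocks, so it is only practical for small alphabets and short blocks. The chain in the usage lines is hypothetical, and the function names are illustrative.

```python
import itertools
import math

def sequence_prob(seq, init, trans):
    """P(x_1..x_n) = init[x_1] * prod_i trans[x_{i-1}][x_i] for a first-order Markov chain."""
    p = init[seq[0]]
    for a, b in zip(seq, seq[1:]):
        p *= trans[a][b]
    return p

def markov_shannon_redundancy(init, trans, n):
    """Average redundancy E[L_n] - H(X_1..X_n) of the Shannon code on blocks of length n.

    Enumerates all k**n blocks, so it is only usable for small k and n.
    """
    k = len(init)
    avg_len = 0.0
    block_entropy = 0.0
    for seq in itertools.product(range(k), repeat=n):
        p = sequence_prob(seq, init, trans)
        if p == 0:
            continue  # impossible blocks need no codeword
        avg_len += p * math.ceil(-math.log2(p))
        block_entropy -= p * math.log2(p)
    return avg_len - block_entropy

# Hypothetical binary chain; init is its stationary distribution (2/3, 1/3).
init = [2 / 3, 1 / 3]
trans = [[0.9, 0.1], [0.2, 0.8]]
for n in range(1, 9):
    print(n, round(markov_shannon_redundancy(init, trans, n), 4))
```

Printing Rₙ for increasing n shows how the redundancy of the block code evolves with block length for this particular chain.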

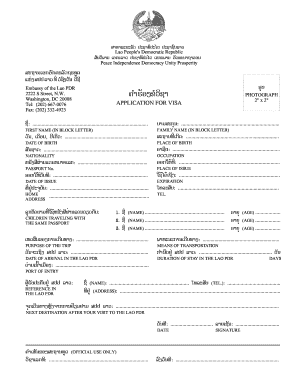

Obtaining the (PDF) Average Redundancy of the Shannon Code for Markov

To obtain the PDF on the average redundancy of the Shannon code for Markov sources, search digital libraries, academic journals, or educational platforms. Some university databases and professional organizations provide such documents through their online resources. Use a reputable source so that the information is accurate and relevant to your research or application.

Steps to Complete the (PDF) Average Redundancy of the Shannon Code for Markov

- Understand the Basics: Familiarize yourself with information theory and with key concepts related to the Shannon code and Markov processes.

- Access the Document: Obtain the PDF from reputable sources specializing in information and coding theory.

- Analyze the Content: Thoroughly read and interpret the document, focusing on the mathematical models and theoretical discussions.

- Apply the Knowledge: Implement the concepts derived from the PDF in practical scenarios, such as developing coding algorithms or optimizing data transmission in communication systems.
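
For the "Apply the Knowledge" step, one concrete coding algorithm to implement is Shannon's original construction. Sort the symbols by decreasing probability. The codeword for each symbol is then the first ⌈−log₂ pᵢ⌉ bits of the binary expansion of the cumulative probability of the symbols before it. The sketch below assumes a memoryless source with strictly positive probabilities. It uses floating-point arithmetic, which is adequate for small examples but could misround long codewords; exact arithmetic with `fractions.Fraction` would avoid that. The helper names are illustrative.

```python
import math

def shannon_code(probs):
    """Shannon's construction: sort symbols by decreasing probability and use the
    first ceil(-log2 p_i) bits of the binary expansion of F_i = p_1 + ... + p_{i-1}."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    code = {}
    cumulative = 0.0
    for i in order:
        p = probs[i]
        length = math.ceil(-math.log2(p))
        bits = []
        frac = cumulative
        for _ in range(length):  # peel off binary digits of the cumulative probability
            frac *= 2
            bit = int(frac)
            bits.append(str(bit))
            frac -= bit
        code[i] = "".join(bits)
        cumulative += p
    return code

def is_prefix_free(codewords):
    """In sorted order, a word that is a prefix of another is a prefix of its successor."""
    words = sorted(codewords)
    return all(not b.startswith(a) for a, b in zip(words, words[1:]))

code = shannon_code([0.4, 0.3, 0.2, 0.1])
print(code)
print(is_prefix_free(code.values()))
```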

Why You Should Explore the Average Redundancy of the Shannon Code for Markov

Studying the average redundancy of the Shannon code, particularly for Markov sources, shows how close a simple, explicit code comes to the entropy limit, and therefore how efficiently data can be represented. This knowledge is valuable for professionals in telecommunications, computer science, and data encryption. By understanding redundancy, you can design systems that use bits and bandwidth efficiently. Reliability against transmission errors, by contrast, comes from channel coding, which deliberately adds redundancy back in a controlled way.

Important Terms Related to Shannon Code and Markov Processes

- Entropy: A measure of the uncertainty or randomness of a data source, expressed in bits when logarithms are taken to base 2; it is the benchmark against which redundancy is measured.

- Markov Process: A stochastic process wherein the future state depends only on the current state, not on the sequence of events that preceded it.

- Redundancy: The extra bits a code spends beyond the theoretical minimum set by entropy. Average redundancy is the expected codeword length minus the entropy, measured per symbol or per block.

- Coding Efficiency: The ratio of the entropy to the average codeword length. It equals 1 for a code with zero redundancy and decreases as redundancy grows.
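
To tie these terms together for a Markov source, the theoretical minimum per symbol can be taken as the entropy rate H = −Σᵢ πᵢ Σⱼ Pᵢⱼ log₂ Pᵢⱼ, where π is the stationary distribution of the transition matrix P. Efficiency is then H divided by the average codeword length per symbol. The sketch assumes an irreducible, aperiodic chain, so that power iteration converges. The example chain and the function names are hypothetical.

```python
import math

def stationary_distribution(trans, iters=10_000, tol=1e-12):
    """Approximate pi with pi = pi P by power iteration (assumes irreducible, aperiodic P)."""
    k = len(trans)
    pi = [1.0 / k] * k
    for _ in range(iters):
        new = [sum(pi[i] * trans[i][j] for i in range(k)) for j in range(k)]
        if max(abs(a - b) for a, b in zip(new, pi)) < tol:
            return new
        pi = new
    return pi

def entropy_rate(trans):
    """Entropy rate -sum_i pi_i sum_j P_ij log2 P_ij in bits/symbol (0 log 0 treated as 0)."""
    pi = stationary_distribution(trans)
    return -sum(
        pi[i] * p * math.log2(p)
        for i, row in enumerate(trans)
        for p in row
        if p > 0
    )

def coding_efficiency(entropy_bits, avg_length_bits):
    """Efficiency = entropy / average codeword length; 1.0 means zero redundancy."""
    return entropy_bits / avg_length_bits

trans = [[0.9, 0.1], [0.2, 0.8]]
h = entropy_rate(trans)
print(round(h, 4))
# Compared with sending each binary symbol as one raw bit:
print(round(coding_efficiency(h, 1.0), 4))
```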

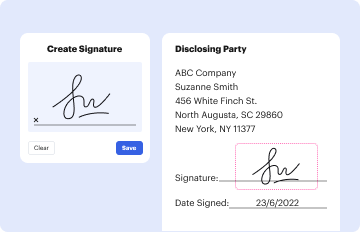

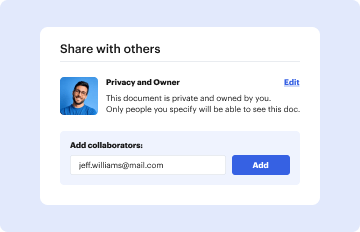

Legal and Compliance Considerations for the Shannon Code

While Shannon coding itself is a technical concept, its applications can raise legal questions, particularly around data privacy and intellectual property. Comply with the relevant data protection laws and standards when applying Shannon coding to sensitive or proprietary information, and place the theoretical framework within the applicable regulatory environment, especially in data-centric industries.

Key Elements of the Shannon Code for Markov

- Source Coding: Representing the output of a source with as few bits as possible; the redundancy of a source code measures how far it falls short of the entropy.

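- Channel Capacity: The maximum rate at which data can be sent over a noisy channel with arbitrarily small error probability. It governs channel coding rather than the source-coding redundancy of the Shannon code, but together with the source entropy it determines whether the source can be transmitted reliably over a given channel.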

- Data Compression: Techniques that reduce the volume of data by removing redundancy. The Shannon code is a lossless scheme, so the original data can be recovered exactly.

- Probabilistic Models: The use of probability distributions, such as the transition probabilities of a Markov model, to build codes that match the stochastic behavior of the data source.
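
The probabilistic-model element presupposes that the model's probabilities are known. When they must be learned from data, one simple option is the maximum-likelihood estimate: count the observed transitions and normalize each row. This is an assumption of this sketch, not a method prescribed by the PDF. The uniform fallback for states with no observed outgoing transitions is likewise an illustrative choice, and `estimate_transitions` is a hypothetical helper.

```python
from collections import Counter

def estimate_transitions(sequence, alphabet):
    """Maximum-likelihood first-order Markov model from one observed sequence:
    P_hat[a][b] = count(a -> b) / count(a -> anything)."""
    pair_counts = Counter(zip(sequence, sequence[1:]))
    matrix = {}
    for a in alphabet:
        total = sum(pair_counts[(a, b)] for b in alphabet)
        if total == 0:
            # No transitions observed out of `a`: fall back to uniform (an assumption).
            matrix[a] = {b: 1.0 / len(alphabet) for b in alphabet}
        else:
            matrix[a] = {b: pair_counts[(a, b)] / total for b in alphabet}
    return matrix

P_hat = estimate_transitions("aaabbbab", alphabet="ab")
print(P_hat)
```

The estimated matrix can then be fed to any of the Shannon-code calculations for Markov sources, keeping in mind that short sequences give noisy estimates.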

Examples of Using the Shannon Code for Markov Processes

Consider a telecommunications company that applies Shannon coding principles to improve data throughput. By modeling its traffic as a Markov process, it can tailor its compression to the statistics of the source and so reduce the average number of bits it sends, improving network efficiency. Such models help in designing compression systems that reduce latency and make better use of bandwidth.
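
The benefit of modeling traffic as a Markov process can be illustrated with a hypothetical three-symbol source whose symbols tend to repeat. The sketch assumes the stationary distribution π and transition matrix P are known, and it ignores the cost of coding the first symbol. It compares two options. The first is one Shannon code built from the marginal π. The second is one Shannon code per previous symbol, built from the rows of P. The numbers are illustrative and are not taken from any real network.

```python
import math

def shannon_len(p):
    """Shannon codeword length ceil(-log2 p) for a symbol of probability p > 0."""
    return math.ceil(-math.log2(p))

def memoryless_avg_length(pi):
    """Bits/symbol using a single Shannon code built from the marginal distribution pi."""
    return sum(p * shannon_len(p) for p in pi if p > 0)

def conditional_avg_length(pi, trans):
    """Bits/symbol when the previous symbol selects which Shannon code to use
    (one code per row of the transition matrix), weighted by the stationary pi."""
    return sum(
        pi[i] * sum(p * shannon_len(p) for p in row if p > 0)
        for i, row in enumerate(trans)
    )

# Hypothetical symmetric chain: each symbol repeats with probability 0.8.
pi = [1 / 3, 1 / 3, 1 / 3]
trans = [[0.8, 0.1, 0.1], [0.1, 0.8, 0.1], [0.1, 0.1, 0.8]]
print(memoryless_avg_length(pi))
print(conditional_avg_length(pi, trans))
```

For this chain the memoryless code needs 2 bits per symbol and the state-conditioned code about 1.6. Both stay above the chain's entropy rate of roughly 0.92 bits per symbol, and that remaining gap is the code's redundancy.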

Software Compatibility for Shannon Code Analysis

Numerous professional tools and software, such as MATLAB or Python libraries (e.g., NumPy, SciPy), facilitate the analysis and implementation of Shannon coding concepts. These platforms offer functionalities tailored to processing large datasets and executing complex calculations necessary for understanding average redundancy in Markov processes.
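
As a small illustration of the NumPy route, the sketch below finds the stationary distribution of a transition matrix as the eigenvector of Pᵀ for eigenvalue 1, then evaluates the entropy rate. It assumes the chain has a unique stationary distribution, and the example matrix is hypothetical. SciPy's `scipy.stats.entropy(pk, base=2)` could compute the entropy of a single distribution, but it is not needed here.

```python
import numpy as np

def stationary_distribution(P):
    """Stationary pi with pi @ P = pi: the eigenvector of P.T whose eigenvalue
    is closest to 1, normalized to sum to 1 (this also fixes its sign)."""
    eigvals, eigvecs = np.linalg.eig(P.T)
    idx = np.argmin(np.abs(eigvals - 1.0))
    pi = np.real(eigvecs[:, idx])
    return pi / pi.sum()

def entropy_rate(P):
    """Entropy rate in bits/symbol: -sum_i pi_i sum_j P_ij log2 P_ij, with 0 log 0 := 0."""
    pi = stationary_distribution(P)
    with np.errstate(divide="ignore"):
        logs = np.where(P > 0, np.log2(P), 0.0)  # zero entries contribute nothing
    return float(-np.sum(pi[:, None] * P * logs))

P = np.array([[0.9, 0.1], [0.2, 0.8]])
print(stationary_distribution(P))
print(entropy_rate(P))
```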