Definition and Purpose of Quality in Statistics

Quality in statistics refers to the accuracy, reliability, and relevance of statistical data and of the methods used to produce them. It involves collecting, processing, and reporting data in a way that is transparent, consistent, and verifiable. The primary purpose is to provide credible, usable data for decision-making, policy formulation, and academic research. High-quality statistics help organizations and individuals make informed decisions because the trends and insights they present can be trusted.

How to Use Quality in Statistics

To apply quality principles in statistics, start by evaluating the data sources and the methods used to collect the data. Assess sampling methods, data collection techniques, and analytical processes for potential biases or errors; this evaluation determines how robust the conclusions drawn from the data are. Statistical quality checks, such as screening for outliers, help validate findings and lead to more reliable interpretations.
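
As an illustration of the outlier screening mentioned above, the sketch below flags values outside Tukey's fences (1.5 times the interquartile range beyond the quartiles). This rule is one common convention chosen here as an assumption, not a universal standard; the function name `iqr_outliers` and the sample data are hypothetical.

```python
import statistics

def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences).

    k=1.5 is a conventional default, assumed here for illustration.
    """
    # With n=4, statistics.quantiles returns the three quartile cut points.
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]

# Hypothetical survey responses (hours worked per week); 95 looks suspicious.
hours = [38, 40, 42, 39, 41, 37, 40, 95, 43, 36]
print(iqr_outliers(hours))
```

A flagged value is a prompt for investigation (a keying error, or a genuine but unusual case?) rather than grounds for automatic deletion.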

Key Elements of Quality in Statistics

Several key elements define quality in statistics:

- Accuracy: The closeness of estimates to true values.

- Reliability: Consistency of results over time under similar conditions.

- Relevance: Alignment of data with the needs of users.

- Timeliness: How soon after the reference period the data become available.

- Accessibility: Ease of obtaining and understanding statistical information.

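These elements often trade off against one another (for example, releasing data sooner leaves less time for validation, which can reduce accuracy), so balancing them is vital for maintaining the integrity of statistical outputs.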
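
Accuracy and reliability can be made concrete with a small simulation: draw many random samples from a population whose true mean is known, estimate the mean from each, and measure how close and how consistent the estimates are. This is a minimal sketch under stated assumptions: the lognormal "income" population is hypothetical, root-mean-square error (RMSE) stands in for accuracy, and the spread of estimates across repeated samples is used as a proxy for reliability.

```python
import math
import random
import statistics

def evaluate_estimator(population, sample_size, repetitions=500, seed=0):
    """Summarize how well the sample mean estimates the population mean."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    true_mean = statistics.fmean(population)
    estimates = [
        statistics.fmean(rng.sample(population, sample_size))
        for _ in range(repetitions)
    ]
    # Bias: average distance of the estimates from the true value.
    bias = statistics.fmean(estimates) - true_mean
    # RMSE: overall closeness of the estimates to the true value (accuracy).
    rmse = math.sqrt(statistics.fmean((e - true_mean) ** 2 for e in estimates))
    # Spread across repetitions: consistency of the estimates (reliability proxy).
    spread = statistics.pstdev(estimates)
    return {"bias": bias, "rmse": rmse, "spread": spread}

# Hypothetical population of 10,000 incomes (in thousands).
pop_rng = random.Random(42)
population = [pop_rng.lognormvariate(3.5, 0.6) for _ in range(10_000)]

for n in (10, 100, 1000):
    print(n, evaluate_estimator(population, n))
```

Larger samples should shrink both the RMSE and the spread. The decomposition RMSE² = bias² + spread² also shows that an estimate can be consistent yet still inaccurate if it is biased.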

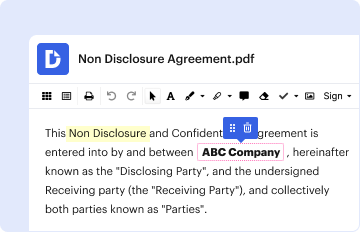

Steps to Ensure Quality in Statistics

Ensuring quality in statistics involves a series of systematic steps:

- Define Objectives: Clearly articulate the goals and intended use of the data.

- Design Methodology: Develop a robust sampling and data collection plan.

- Data Collection: Gather data using reliable sources and standardized procedures.

- Data Processing: Organize and clean data to eliminate inaccuracies.

- Analysis: Use appropriate statistical techniques and software to analyze data.

- Validation: Conduct quality checks to confirm data accuracy.

- Reporting: Present findings clearly, noting any limitations or assumptions.

Following these steps helps produce reliable, high-quality statistical outputs.
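
The processing, validation, and reporting steps above can be sketched as a small pipeline: apply plausibility rules to each record, keep the rejected records together with the reasons they failed, and report how many were excluded alongside the result. The field names, the ranges in `RULES`, and the choice to drop incomplete records rather than impute them are all hypothetical assumptions for illustration.

```python
import statistics

# Hypothetical validation rules: field name -> (min, max) plausible range.
RULES = {"age": (0, 120), "weekly_hours": (0, 100)}

def clean_and_validate(records, rules=RULES):
    """Split records into accepted rows and rejected rows with reasons."""
    accepted, rejected = [], []
    for rec in records:
        problems = []
        for field, (lo, hi) in rules.items():
            value = rec.get(field)
            if value is None:
                problems.append(f"{field} missing")
            elif not lo <= value <= hi:
                problems.append(f"{field}={value} outside [{lo}, {hi}]")
        if problems:
            rejected.append((rec, problems))
        else:
            accepted.append(rec)
    return accepted, rejected

def report(accepted, rejected, field):
    """Summarize one field and state how many records were excluded."""
    values = [rec[field] for rec in accepted]
    return {
        "n_used": len(values),
        "n_excluded": len(rejected),
        "mean": statistics.fmean(values),
    }

raw = [
    {"age": 34, "weekly_hours": 40},
    {"age": 29, "weekly_hours": None},  # missing value
    {"age": 230, "weekly_hours": 38},   # implausible age (likely keying error)
    {"age": 51, "weekly_hours": 45},
]
ok, bad = clean_and_validate(raw)
print(report(ok, bad, "weekly_hours"))
for rec, why in bad:
    print(rec, why)
```

Keeping the rejected records and their reasons, instead of silently discarding them, is what makes it possible to state limitations honestly at the reporting step.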

Important Terms Related to Quality in Statistics

Understanding key terms is crucial for grasping quality in statistics:

- Bias: Systematic error that skews results.

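- Variance: A measure of dispersion equal to the average squared deviation of values from their mean.

- Standard Deviation: The square root of the variance, expressing spread in the same units as the data.

- Confidence Interval: A range computed from sample data by a procedure that, over repeated sampling, captures the true value a stated proportion of the time (for example, 95%).

- Significance Level: The probability threshold for rejecting a null hypothesis, equal to the chance of rejecting it when it is actually true (commonly 0.05).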

Familiarity with these terms helps interpret statistical data effectively, ensuring informed decision-making.
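
To connect these terms, the sketch below computes them for a single sample using Python's standard `statistics` module. It makes one assumption worth stating: the confidence interval and hypothesis test use the normal (z) approximation, which is reasonable only for fairly large samples; small samples would normally use the t-distribution (for example via `scipy.stats.t`). The function name `summarize` and the fill-weight data are hypothetical.

```python
import math
import random
from statistics import NormalDist, fmean, stdev, variance

def summarize(sample, null_mean, alpha=0.05):
    """Compute variance, standard deviation, a confidence interval, and a test."""
    n = len(sample)
    mean = fmean(sample)
    var = variance(sample)   # sample variance: sum of squared deviations / (n - 1)
    sd = stdev(sample)       # standard deviation: square root of the variance
    se = sd / math.sqrt(n)   # standard error of the mean
    # Critical value for a (1 - alpha) interval, about 1.96 when alpha = 0.05.
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    ci = (mean - z_crit * se, mean + z_crit * se)  # large-sample (normal) interval
    # Two-sided z-test of H0: population mean == null_mean, judged at level alpha.
    z = (mean - null_mean) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {"mean": mean, "variance": var, "sd": sd, "ci": ci,
            "p_value": p_value, "reject_h0": p_value < alpha}

# Hypothetical sample: 40 fill weights (grams) from a process targeting 50 g.
rng = random.Random(1)
weights = [rng.gauss(50.5, 2.0) for _ in range(40)]
result = summarize(weights, null_mean=50.0)
print(result)
```

With the same alpha, the test rejects the null hypothesis exactly when the null value falls outside the confidence interval, which is a useful consistency check when reading reported results.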

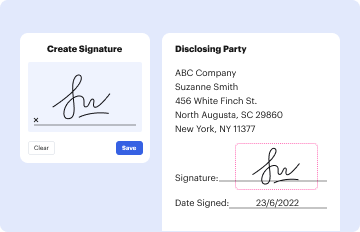

Legal Use of Quality in Statistics

Legal frameworks often require adherence to specific statistical standards to ensure the credibility of data used in policy-making and public dissemination. In the United States, for example, federal agencies must comply with the Data Quality Act (also known as the Information Quality Act), which directs the Office of Management and Budget to issue guidelines for ensuring and maximizing the quality, objectivity, utility, and integrity of information disseminated by federal agencies. Agencies must ensure that their statistical data meet these criteria to maintain legal compliance and public trust.

Examples of Using Quality in Statistics

Quality in statistics can be exemplified in various domains:

- Healthcare: Ensuring accurate data for disease prevalence and treatment efficacy.

- Economics: Utilizing reliable economic indicators for forecasting.

- Public Policy: Informing policy decisions with robust census data.

- Education: Evaluating educational programs through quality assessments.

Each scenario highlights the necessity of high-quality data to support effective outcomes and policy development.

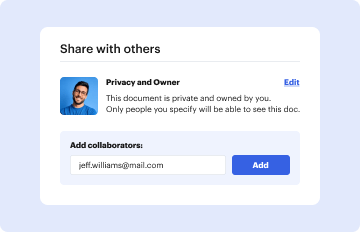

Software Compatibility and Quality in Statistics

Statistical software supports quality by providing tools for data processing, analysis, and visualization. Packages such as SPSS, SAS, and R implement standard statistical tests and model-validation procedures and offer strong graphical and analytical capabilities. Software does not guarantee quality on its own, however: results are only as sound as the data and methods behind them, so these tools add the most value when combined with careful data collection, validation, and transparent reporting.