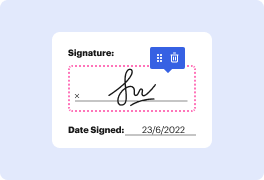

When your daily work involves a lot of document editing, you already know that every file format requires its own approach and, in some cases, particular applications. Handling a seemingly simple RTF file can grind the whole process to a halt, especially when you are trying to edit with inadequate tools. To prevent this sort of trouble, get an editor that can cover all your requirements regardless of the file extension and blot a sentence in RTF with zero roadblocks.

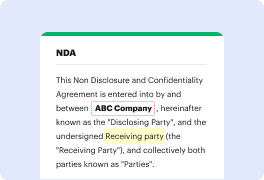

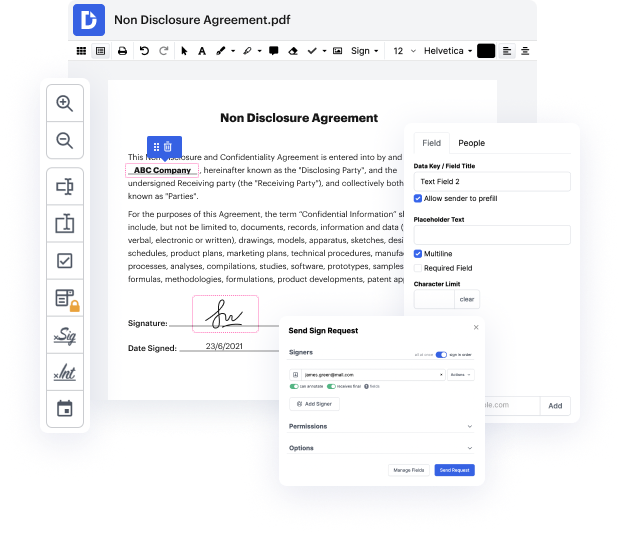

With DocHub, you work with an editing multitool for any situation or file type. Spend less time navigating your old software’s features and learn our intuitive interface as you work. DocHub is a sleek online editing platform that handles your file processing requirements for virtually any file, including RTF. Open it and go straight to productivity; no prior training or reading of instructions is needed to reap the benefits DocHub brings to document management. Start by taking a few moments to create your account now.

See improvements in your document processing immediately after you open your DocHub account. Save time on editing with a single solution that helps you become more efficient with any file format you need to work with.

Hi, welcome to the video. We're going to explore how we can use sentence transformers and sentence embeddings in NLP for semantic similarity applications. In the video, we're going to have a quick recap on transformers and where they came from, so we're going to have a quick look at recurrent neural networks and the attention mechanism. Then we're going to move on to trying to define the difference between a transformer and a sentence transformer, and also to understanding why the embeddings produced by transformers, or sentence transformers specifically, are so good. At the end, we're also going to go through how we can implement our own sentence transformers in Python. So I think we should just jump straight into it. [Applause] Before we dive into sentence transformers, I think it would make a lot of sense if we piece together where transformers come from, with the intention of trying to understand why we use transformers now rather than some ot

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
