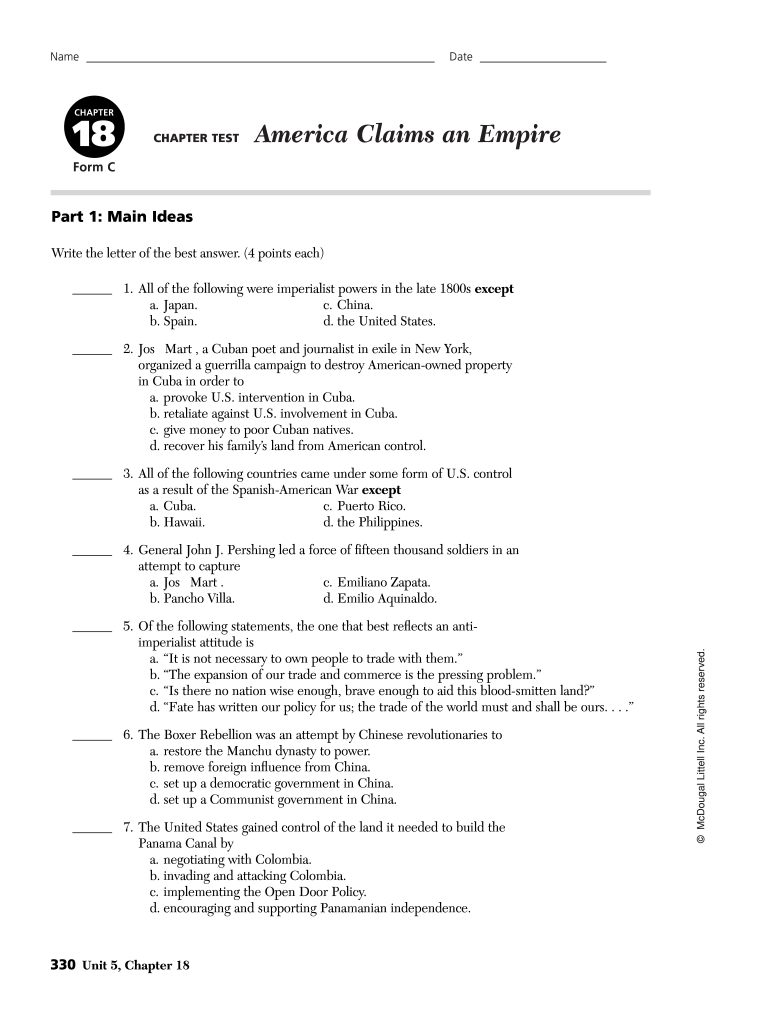

Understanding the Chapter 18 America Claims an Empire Answer Key PDF

The chapter test titled "America Claims an Empire" assesses knowledge of U.S. imperialism during the late 1800s and early 1900s. This answer key PDF covers U.S. expansionism, including key events and policies such as the Spanish-American War, the Boxer Rebellion, and the construction of the Panama Canal. With it, educators can efficiently evaluate student understanding of the critical concepts, figures, and events relating to America's imperialist actions.

Overview of the Document's Purpose

The answer key is designed to assist both teachers and students by providing accurate responses to various assessment questions found in the chapter test. It includes:

- Multiple-choice answers that cover significant historical figures and events.

- Map skills questions, which enhance geographical literacy related to American territories gained during this period.

- Political cartoon interpretations, promoting critical thinking by analyzing visual sources related to the theme of imperialism.

Critical Themes in American Imperialism

American imperialism emerged from several motivating factors, which can be categorized as follows:

- Economic Interests:

  - The need for new markets for American goods after the Civil War.

  - Access to raw materials from territories that would bolster the U.S. economy.

- Military Strategy:

  - Expansion of naval power, advocated by figures such as Alfred Thayer Mahan, to establish a strong military presence globally.

- Cultural Rationalizations:

  - The belief in Anglo-Saxon superiority and the concept of Manifest Destiny, which were used to justify expansionist policies as a means of uplifting 'lesser' societies.

Key Examples from the Chapter

- Spanish-American War: This conflict marked a significant turning point in U.S. foreign policy, transitioning the nation into a global power with territorial acquisitions such as Puerto Rico, Guam, and the Philippines.

- Queen Liliuokalani's Goals vs. American Imperialism: The chapter discusses how her efforts to restore Hawaii's sovereignty conflicted with American ambitions to control the islands, illustrating the struggle between native autonomy and imperial expansion.

How to Use the Answer Key

To use the Chapter 18 America Claims an Empire answer key PDF effectively, consider the following steps:

- Locate Relevant Questions: Identify the corresponding questions in the chapter test that you wish to evaluate.

- Refer to the Correct Answers: Match each question with its answer in the answer key to support grading and to clarify why a response is right or wrong.

- Engage in Discussion: Use the questions and answers as a foundation for class discussions on the implications of U.S. imperialism.

Importance of the Content

This answer key serves a dual purpose: it aids in educational assessment and prompts deeper discussion of the moral and ethical implications of U.S. policies during this era. It also highlights the contrasts between American ideals and the realities of imperialism, encouraging students to engage critically with historical content.

Legal and Ethical Considerations

- Copyright Compliance: Ensure that use of the answer key complies with copyright guidelines, particularly in educational settings where materials may be shared.

- Encouragement of Original Thought: While the answer key provides accurate responses, students should be encouraged to develop their own interpretations and arguments regarding imperialist policies.

Conclusion on Learning with the Answer Key

Using the Chapter 18 America Claims an Empire answer key PDF within an educational framework enriches students' understanding of American history. By combining individual assessments, group discussions, and critical analysis of historical events, educators can foster a learning environment that encourages inquiry and reflection on America's global role.