Definition and Meaning

"A Structure-Driven Yield-Aware Web Form Crawler" (available through CiteSeerX and the University of Illinois IDEALS repository) describes a crawler for efficiently collecting the query forms that serve as entry points to deep Web databases. Instead of treating the Web as a flat collection of pages, the crawler follows a structure-driven framework: it uses the way websites are organized to find the forms behind which online databases are hidden. This improves the efficiency and reach of form harvesting compared with traditional page-based crawling methods.

How to Use the Web Form Crawler

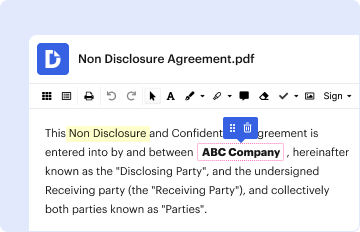

The web form crawler is built from two components: a Site Finder and a Form Finder. The Site Finder locates candidate websites, and the Form Finder searches within each of those sites to identify and retrieve their query forms. To start a crawl, users set parameters that direct the crawler toward specific domains or types of data, which keeps form collection focused.
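
As a rough illustration of the Form Finder's job, the sketch below scans an HTML page and keeps only the forms that look like query forms. The classification rule (at least one text or search input and no password field) is an assumption made for this example, not the criterion used by the crawler described in the paper, and the names `QueryFormDetector` and `find_query_forms` are hypothetical. It relies only on Python's standard `html.parser` module.

```python
from html.parser import HTMLParser


class QueryFormDetector(HTMLParser):
    """Collects <form> elements and flags those that look like query forms.

    Assumed heuristic (illustrative only): a query form has at least one
    free-text input and no password field, which filters out login forms.
    """

    TEXT_TYPES = {"text", "search"}

    def __init__(self):
        super().__init__()
        self.forms = []       # one summary dict per completed <form>
        self._current = None  # summary of the form currently being parsed

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "form":
            self._current = {"action": attrs.get("action") or "",
                             "text_inputs": 0, "has_password": False}
        elif tag == "input" and self._current is not None:
            # An <input> without a type attribute defaults to a text field.
            input_type = (attrs.get("type") or "text").lower()
            if input_type in self.TEXT_TYPES:
                self._current["text_inputs"] += 1
            elif input_type == "password":
                self._current["has_password"] = True

    def handle_endtag(self, tag):
        if tag == "form" and self._current is not None:
            self.forms.append(self._current)
            self._current = None

    def query_forms(self):
        return [f for f in self.forms
                if f["text_inputs"] > 0 and not f["has_password"]]


def find_query_forms(html):
    """Return summaries of the forms in `html` that pass the heuristic."""
    detector = QueryFormDetector()
    detector.feed(html)
    detector.close()
    return detector.query_forms()


page = ('<form action="/search"><input type="text" name="q">'
        '<input type="submit"></form>'
        '<form action="/login"><input name="user">'
        '<input type="password" name="pw"></form>')
print(find_query_forms(page))  # only the /search form is kept
```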

Steps to Complete Web Form Crawling

- Initialize the Site Finder: Provide the domains or specific URLs from which query forms should be collected.

- Configure Form Finder Parameters: Define criteria for the types of query forms to be captured.

- Run Crawling Operation: Activate the crawler, and it will automatically navigate the specified web pages.

- Data Extraction: The crawler fetches and compiles forms into a usable dataset.

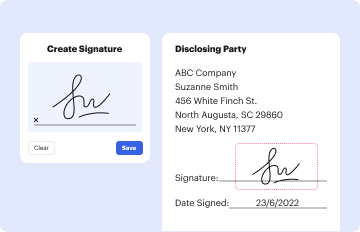

- Review Results: Analyze the collected data for accuracy and completeness.
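
The steps above can be sketched end to end as follows. To keep the example runnable without network access, the "Web" is a small hypothetical in-memory dictionary, the Site Finder simply reduces seed URLs to site root pages, and a page counts as having a form if it contains a `<form>` tag. All names (`WEB`, `site_finder`, `form_finder`, `crawl`, `max_depth`) are illustrative assumptions, not the interfaces of the crawler in the paper.

```python
from collections import deque
from urllib.parse import urljoin, urlparse

# Hypothetical in-memory "Web": URL -> (HTML, outgoing links).
# A real crawler would fetch pages over HTTP instead.
WEB = {
    "http://a.example/": ("<p>home</p>", ["/books", "http://b.example/"]),
    "http://a.example/books": ('<form action="/q"><input name="t"></form>', []),
    "http://b.example/": ("<p>no forms here</p>", []),
}


def site_finder(seed_urls):
    """Step 1 (simplified): reduce seed URLs to distinct site root pages."""
    roots = []
    for url in seed_urls:
        parts = urlparse(url)
        root = f"{parts.scheme}://{parts.netloc}/"
        if root not in roots:
            roots.append(root)
    return roots


def has_form(html):
    """Step 2 (placeholder criterion): the page contains a <form> tag."""
    return "<form" in html.lower()


def form_finder(root, max_depth=2):
    """Step 3: breadth-first crawl within one site, up to max_depth links deep."""
    site = urlparse(root).netloc
    seen, queue, found = {root}, deque([(root, 0)]), []
    while queue:
        url, depth = queue.popleft()
        if url not in WEB:
            continue  # unknown page: nothing to fetch in this toy Web
        html, links = WEB[url]
        if has_form(html):
            found.append(url)
        if depth == max_depth:
            continue
        for link in links:
            absolute = urljoin(url, link)
            # Stay inside the current site; other sites belong to the Site Finder.
            if urlparse(absolute).netloc == site and absolute not in seen:
                seen.add(absolute)
                queue.append((absolute, depth + 1))
    return found


def crawl(seed_urls, max_depth=2):
    """Step 4: compile {site root: [pages with forms]} as the output dataset."""
    return {root: form_finder(root, max_depth) for root in site_finder(seed_urls)}


print(crawl(["http://a.example/index.html", "http://b.example/"]))
```

Restricting the Form Finder to one site and a small link depth is one simple way to act on the observation that forms cluster within site structure; the depth limit of 2 is arbitrary in this sketch.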

Key Elements of the Web Form Crawler

- Site Finder Component: Discovers candidate websites, the entry points for form collection.

- Form Finder Component: Locates and extracts query forms within accessed sites.

- Structure-Driven Framework: Utilizes webpage structure to improve form yield rates.

- Data Output: Provides harvested forms in organized datasets for further use.

Benefits of a Structure-Driven Framework

The primary benefit of this framework is that it uses the structure of websites to locate query forms more effectively than conventional approaches. By concentrating crawling effort where forms are likely to be, the structure-driven methodology raises harvest rates and broadens access to the data held in deep Web databases.
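
The "yield-aware" part of the crawler's name suggests spending crawl effort where forms are most likely to be found. The paper's actual mechanism is not described in this section; the sketch below shows one generic way to express the idea, ranking sites by an estimated yield (forms found per page fetched) using a priority queue. The smoothing formula, the sample statistics, and the `schedule_by_yield` name are assumptions for illustration.

```python
import heapq


def schedule_by_yield(site_stats):
    """Order sites by estimated yield, highest first.

    site_stats maps a site name to (forms_found, pages_fetched). Both the
    statistics and this ranking rule are illustrative assumptions.
    """
    heap = []
    for site, (forms, pages) in site_stats.items():
        # Laplace-style smoothing: an unexplored site starts at 0.5, not 0.
        estimated_yield = (forms + 1) / (pages + 2)
        # heapq is a min-heap, so negate the yield; ties fall back to the name.
        heapq.heappush(heap, (-estimated_yield, site))
    return [heapq.heappop(heap)[1] for _ in range(len(heap))]


stats = {"a.example": (3, 10), "b.example": (0, 10), "c.example": (0, 0)}
print(schedule_by_yield(stats))  # ['c.example', 'a.example', 'b.example']
```

Unexplored sites receive an optimistic prior here, so they are tried before sites that have already proven unproductive.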

Software Compatibility and Integration

The web form crawler can be combined with data processing and analysis tools, which makes it useful to researchers and developers. Forms harvested by the crawler can be loaded into any system that supports standard data import and export.
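
For example, harvested forms could be written out as CSV, a format most data tools can import. The record fields (`site`, `page_url`, `action`, `text_inputs`) are a hypothetical schema chosen for this sketch; the source does not specify an output format.

```python
import csv
import io

FIELDS = ["site", "page_url", "action", "text_inputs"]  # hypothetical schema


def forms_to_csv(records):
    """Serialize harvested form records (a list of dicts) to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


records = [{"site": "a.example", "page_url": "http://a.example/books",
            "action": "/q", "text_inputs": 1}]
print(forms_to_csv(records))
```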

Important Terms Related to the Crawler

- Deep Web: The portion of the Web not indexed by traditional search engines, such as content reachable only through database query forms.

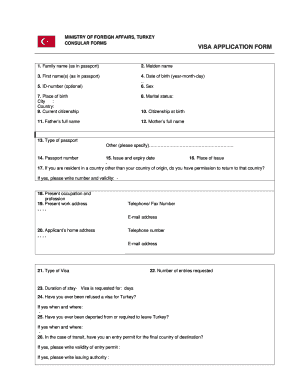

- Query Forms: HTML forms through which users search online databases.

- Harvest Rate: A measure of crawling efficiency, commonly defined as the fraction of crawled pages that contain a query form.

- Locality of Query Forms: The tendency of query forms to appear in predictable places within a site's structure, often close to the site's home page.
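
The harvest rate and locality terms can be made concrete with a small calculation over a crawl log. This sketch assumes the common focused-crawling definition of harvest rate (pages with a query form divided by pages fetched) and measures locality as the share of form-bearing pages found within a given link depth of the site root. The log is made-up sample data, and the paper's exact definitions may differ.

```python
def harvest_rate(crawl_log):
    """Fraction of fetched pages that contained at least one query form."""
    if not crawl_log:
        return 0.0
    return sum(1 for _, has_form in crawl_log if has_form) / len(crawl_log)


def share_within_depth(crawl_log, max_depth):
    """Share of form-bearing pages found at most max_depth links from the root."""
    depths = [depth for depth, has_form in crawl_log if has_form]
    if not depths:
        return 0.0
    return sum(1 for d in depths if d <= max_depth) / len(depths)


# Hypothetical log entries: (link depth from site root, page had a query form?)
log = [(0, False), (1, True), (1, False), (2, True), (3, False), (4, True)]
print(harvest_rate(log))           # 0.5
print(share_within_depth(log, 2))  # 2 of the 3 form pages are within depth 2
```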

Examples of Usage

Consider a research institution that wants to gather information on academic publications across many universities. With the web form crawler, it can efficiently locate the search forms on university library and repository websites, reaching content that general-purpose search engines do not index and gaining deeper insight into academic trends.

Application Process and Approval Time

As the web form crawler is a software tool, there are no specific applications or approval times associated with its use. However, it's critical for users to ensure that their use complies with legal standards for web scraping and data collection, especially concerning privacy laws and website terms of service.
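
One concrete, automatable part of responsible crawling is honoring a site's `robots.txt` rules. The sketch below uses Python's standard `urllib.robotparser` on an inline example file; the rules, the URLs, the `allowed` helper, and the `form-crawler` user-agent string are hypothetical. Checking `robots.txt` covers only one aspect of compliance; terms of service and privacy law still need separate review.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would fetch it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def allowed(url, robots_lines, user_agent="form-crawler"):
    """Return True if the given robots.txt rules permit fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser.can_fetch(user_agent, url)


rules = ROBOTS_TXT.splitlines()
print(allowed("http://a.example/search", rules))      # True
print(allowed("http://a.example/private/db", rules))  # False
```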

Variants and Alternatives

There are alternative tools and methods for crawling the deep Web, such as general-purpose search engine crawlers or solutions tailored to particular data types. However, these may not offer the structure-driven efficiency or yield-awareness of this web form crawler design.

By following these guidelines, users can get the most out of the structure-driven, yield-aware web form crawler in their data collection and research work.