Definition and Meaning

"Edit and Imputation - vrdc cornell" refers to specialized techniques used in statistical data processing, particularly for handling erroneous and missing data in surveys and censuses. These procedures protect the accuracy and credibility of a dataset by identifying inconsistencies, correcting errors, and imputing missing values. As a result, researchers and data analysts can maintain the integrity of their data and base their conclusions on complete and consistent information.

Core Concepts

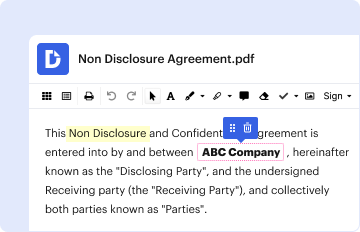

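- Edits: Logical checks that identify errors, inconsistencies, or implausible values by testing each record against a set of predefined rules (for example, a respondent recorded as 12 years old should not also be recorded as married). Records that fail an edit are flagged for correction or imputation.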

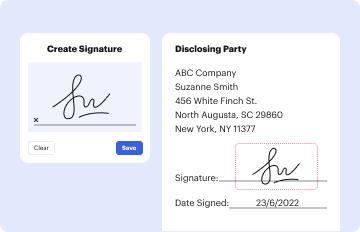

- Imputation: The process of estimating missing or inconsistent values and replacing them with plausible values derived from the available information, such as other answers on the same record or responses from similar records.
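To make the two concepts concrete, here is a minimal Python sketch in which each edit is a named rule that a record either passes or fails, and missing items are marked with `None`. The field names (`age`, `marital_status`, `income`), the rules, and the helper functions are hypothetical illustrations, not part of any VRDC material.

```python
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]
# Hypothetical convention: an edit is a name plus a predicate that
# returns True when the record FAILS the rule.
Edit = Tuple[str, Callable[[Record], bool]]

# Hypothetical edit rules for a person-level survey record.
EDITS: List[Edit] = [
    ("child_married", lambda r: r["age"] is not None and r["age"] < 15
                                and r["marital_status"] == "married"),
    ("negative_income", lambda r: r["income"] is not None and r["income"] < 0),
    ("age_out_of_range", lambda r: r["age"] is not None and not 0 <= r["age"] <= 120),
]

def failed_edits(record: Record, edits: List[Edit] = EDITS) -> List[str]:
    """Return the names of all edits the record fails."""
    return [name for name, fails in edits if fails(record)]

def missing_fields(record: Record) -> List[str]:
    """Return the fields whose value is missing (None), i.e. candidates for imputation."""
    return [name for name, value in record.items() if value is None]

record = {"age": 12, "marital_status": "married", "income": None}
print(failed_edits(record))    # ['child_married']
print(missing_fields(record))  # ['income']
```

Keeping the rules separate from the function that applies them means new edits can be added without touching the checking code.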

How to Use the Edit and Imputation - vrdc cornell

Implementing these procedures requires a systematic approach tailored to the specific requirements of the dataset or research design. A typical workflow is:

- Identify Missing Data: Begin by scanning the dataset for missing values and inconsistencies.

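- Select Appropriate Imputation Techniques: Depending on the nature and extent of the missing data, choose methods such as mean substitution, regression imputation, or multiple imputation to fill in gaps. Mean substitution is the simplest, but it understates the variability of the imputed variable and weakens its relationships with other variables.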

- Apply Edits: Check every record, including imputed values, against formal edit rules using the VRDC framework to detect and resolve any remaining logical inconsistencies.

- Validate Results: Post-imputation, re-evaluate the dataset to ensure accuracy and reliability, adjusting methodologies if necessary.
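The four steps can be sketched in Python for a single variable. This is a minimal illustration under stated assumptions: the data, the field names `hours` and `earnings`, and the non-negativity edit are hypothetical, and the regression imputation is an ordinary least squares line fitted on the complete cases only.

```python
from statistics import mean
from typing import Dict, List, Optional, Sequence, Tuple

# Hypothetical survey extract: weekly hours worked and weekly earnings.
# None marks item nonresponse on earnings.
records: List[Dict[str, Optional[float]]] = [
    {"hours": 40, "earnings": 800.0},
    {"hours": 20, "earnings": 410.0},
    {"hours": 35, "earnings": None},
    {"hours": 10, "earnings": 190.0},
    {"hours": 30, "earnings": 600.0},
]

def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = a + b * x; returns (a, b)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b = sxy / sxx
    return y_bar - b * x_bar, b

def passes_edits(r: Dict[str, Optional[float]]) -> bool:
    """Hypothetical edit: earnings must be present and non-negative."""
    return r["earnings"] is not None and r["earnings"] >= 0

# Step 1: identify records with missing earnings.
missing = [i for i, r in enumerate(records) if r["earnings"] is None]

# Step 2: regression imputation, fitted on the complete cases only.
complete = [r for r in records if r["earnings"] is not None]
a, b = fit_line([r["hours"] for r in complete], [r["earnings"] for r in complete])
for i in missing:
    records[i]["earnings"] = a + b * records[i]["hours"]

# Steps 3 and 4: re-apply the edits and list any records that still fail.
failures = [i for i, r in enumerate(records) if not passes_edits(r)]
print("imputed rows:", missing, "rows failing edits:", failures)
```

Because every missing value is replaced by a point on the fitted line, deterministic regression imputation, like mean substitution, understates the spread of the data; adding a random residual to each prediction, or using multiple imputation, addresses this.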

Steps to Complete the Edit and Imputation - vrdc cornell

A streamlined process involves the following steps:

- Preparation and Planning: Assemble a team with expertise in statistical editing and prepare a detailed plan that outlines the scope of work.

- Data Collection: Gather all necessary datasets from various sources, ensuring data accuracy and completeness.

- Initial Data Assessment: Conduct a thorough assessment to highlight areas requiring editing and imputation.

- Application of Techniques: Deploy the appropriate edit and imputation procedures, continuously monitoring accuracy.

- Final Review and Documentation: After applying the techniques, perform a comprehensive review. Document all changes and methods used for future reference.
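The assessment and documentation steps can be supported by a small amount of bookkeeping. The sketch below, with hypothetical records and a hypothetical `ChangeLog` class, computes the share of missing values per variable before anything is changed, then logs each imputed value (here filled by simple mean substitution) so the changes can be reviewed and reproduced later.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List

@dataclass
class ChangeLog:
    """Hypothetical audit trail of every value altered during editing and imputation."""
    entries: List[Dict[str, Any]] = field(default_factory=list)

    def record(self, rec_id: int, variable: str, old: Any, new: Any, method: str) -> None:
        self.entries.append(
            {"id": rec_id, "variable": variable, "old": old, "new": new, "method": method}
        )

def missing_rates(records: List[Dict[str, Any]], variables: List[str]) -> Dict[str, float]:
    """Initial assessment: share of records whose value is None or absent, per variable."""
    n = len(records)
    return {v: sum(r.get(v) is None for r in records) / n for v in variables}

records = [
    {"id": 1, "age": 34, "income": 52000},
    {"id": 2, "age": None, "income": 48000},
    {"id": 3, "age": 51, "income": None},
    {"id": 4, "age": 29, "income": 39000},
]

# Initial assessment, before anything is changed.
print(missing_rates(records, ["age", "income"]))  # {'age': 0.25, 'income': 0.25}

# Apply a technique (mean substitution for age) and document every change.
log = ChangeLog()
observed = [r["age"] for r in records if r["age"] is not None]
mean_age = sum(observed) / len(observed)
for r in records:
    if r["age"] is None:
        log.record(r["id"], "age", None, mean_age, "mean substitution")
        r["age"] = mean_age

# Final review: the log lists exactly which values were imputed and how.
for entry in log.entries:
    print(entry)
```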

Who Typically Uses the Edit and Imputation - vrdc cornell

These procedures are used primarily by:

- Statistical Agencies: National statistical offices and other agencies that conduct large-scale surveys and censuses.

- Research Institutions: Academic bodies engaged in data-driven studies requiring rigorous data integrity.

- Data Analysts and Scientists: Professionals tasked with ensuring data reliability for strategic decision-making.

Important Terms Related to Edit and Imputation - vrdc cornell

Understanding this domain involves familiarity with key terminology:

- Missing Data: Refers to the absence of data points in a dataset, potentially leading to biased analysis results.

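- Multiple Imputation: A statistical technique that accounts for the uncertainty in imputed values by creating several completed datasets, analyzing each one separately, and combining the results: the point estimates are averaged, and the combined variance reflects both the variation within each dataset and the variation between datasets.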

- Item Nonresponse: Occurs when a respondent takes part in a survey but leaves particular questions unanswered, leaving gaps in the data for those items.
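The combining step of multiple imputation is commonly done with Rubin's rules: the pooled estimate is the mean of the m per-dataset estimates, and the total variance is the average within-imputation variance plus (1 + 1/m) times the between-imputation variance of the estimates. A minimal Python sketch, assuming each completed dataset has already been analyzed and has produced an estimate and its sampling variance; the function name `pool_rubin` is hypothetical.

```python
from statistics import mean, variance
from typing import List, Tuple

def pool_rubin(estimates: List[float], variances: List[float]) -> Tuple[float, float]:
    """Combine results from m completed datasets using Rubin's rules.

    estimates: point estimate from each completed (imputed) dataset
    variances: sampling variance (squared standard error) of each estimate
    Returns (pooled estimate, total variance).
    """
    m = len(estimates)
    if m < 2 or len(variances) != m:
        raise ValueError("need at least two imputations, each with one variance")
    q_bar = mean(estimates)          # pooled point estimate
    u_bar = mean(variances)          # within-imputation variance
    b = variance(estimates)          # between-imputation variance (divisor m - 1)
    total = u_bar + (1 + 1 / m) * b  # total variance of the pooled estimate
    return q_bar, total

estimate, total_var = pool_rubin([10.0, 12.0, 11.0], [1.0, 1.0, 1.0])
print(estimate, total_var)
```

Here the three estimates pool to 11.0, and the total variance is about 2.33: a within-imputation variance of 1.0 plus 4/3 times a between-imputation variance of 1.0. Simply averaging the per-dataset variances would report 1.0 and overstate the precision.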

Key Elements of the Edit and Imputation - vrdc cornell

The key elements of an effective implementation include:

- Formal Models: These provide a structured approach for implementing edits and imputations, ensuring systematic detection and correction of errors.

- Source Data Verification: Verifying the reliability of data at the primary source limits the errors that would otherwise propagate through imputation.

- Integration Across Datasets: Techniques that reconcile gaps and inconsistencies between disparate data sources improve the analysis and usability of merged datasets.
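One widely cited formal model is the Fellegi-Holt approach, whose minimal-change principle says a record that fails its edits should be made to pass all of them by changing as few fields as possible. The brute-force sketch below illustrates that principle for small categorical records. The domains, edits, and `localize_errors` helper are hypothetical, and this is not the set-covering algorithm with implied edits that production Fellegi-Holt systems use.

```python
from itertools import combinations, product
from typing import Callable, Dict, List, Optional, Sequence

Record = Dict[str, str]
# Hypothetical convention: an edit returns True when the record FAILS it.
Edit = Callable[[Record], bool]

# Hypothetical categorical domains and edits.
DOMAINS: Dict[str, Sequence[str]] = {
    "age_group": ["child", "adult"],
    "marital_status": ["single", "married"],
    "relationship": ["head", "spouse", "child"],
}
EDITS: List[Edit] = [
    lambda r: r["age_group"] == "child" and r["marital_status"] == "married",
    lambda r: r["relationship"] == "spouse" and r["marital_status"] != "married",
]

def passes(record: Record) -> bool:
    """True if the record fails none of the edits."""
    return not any(fails(record) for fails in EDITS)

def localize_errors(record: Record) -> Optional[Record]:
    """Change as few fields as possible so the record passes every edit.

    Tries subsets of fields in order of size (minimal-change principle) and,
    for each subset, every combination of values from the domains.
    Exponential in the number of fields, so only suitable for illustrations.
    """
    fields = list(record)
    for k in range(len(fields) + 1):
        for subset in combinations(fields, k):
            for values in product(*(DOMAINS[f] for f in subset)):
                candidate = {**record, **dict(zip(subset, values))}
                if passes(candidate):
                    return candidate
    return None

bad = {"age_group": "child", "marital_status": "married", "relationship": "spouse"}
print(localize_errors(bad))
# {'age_group': 'adult', 'marital_status': 'married', 'relationship': 'spouse'}
```

Changing `age_group` alone is enough here. Changing `marital_status` to `single` would instead trigger the second edit, which is why edits have to be checked jointly rather than one at a time. When several equally small changes exist, the sketch returns the first one found; weighted variants of the principle break such ties by giving each field a reliability weight.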

Legal Use of the Edit and Imputation - vrdc cornell

The methodologies are used within a framework that complies with established statistical and ethical guidelines:

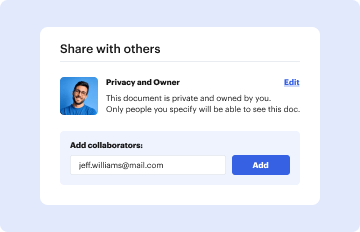

- Data Confidentiality: All procedures must safeguard individual privacy and comply with data protection laws, such as the GDPR where applicable.

- Ethical Representation: Ensure that imputed data fairly and accurately represent the original populations or phenomena under study.

Software Compatibility

These procedures can be carried out with commonly used data processing software:

- SAS and R: These statistical packages support a wide range of imputation techniques and are widely used for these tasks.

- Excel and SPSS: The procedures can also be carried out in these widely used environments, giving analysts the flexibility to work with the tools they prefer.