Definition & Meaning

Post-model analysis is the examination of results after a large-scale economic model has been run, for example the Global Trade Analysis Project (GTAP) model maintained at Purdue University. Its purpose is to turn raw model output into usable findings on economic policy and international trade impacts. The post-model phase combines tools for visualizing results, comparing economic scenarios, and tailoring findings to specific research questions.

Examples of Post-model Analysis Use

- Evaluating Trade Policies: Researchers use GTAP post-model analysis to evaluate the consequences of trade agreements, determining their effects on GDP, trade flows, and employment.

- Assessing Environmental Regulations: Post-model analysis helps assess the macroeconomic impact of environmental policies, such as carbon taxes, by examining the economic shifts the model simulates.

- Investigating Market Shocks: Analysts deploy post-model analysis to study the repercussions of unexpected market events, like sudden tariff impositions or commodity price changes, facilitating strategic economic decisions.

How to Use the Post-model Analysis in Large-Scale Models

Post-model analysis within GTAP follows a short sequence of steps that users new to large-scale economic models can work through in order.

Steps to Implement

- Model Execution: Begin by running your economic model to generate initial results from various scenarios.

- Import Results: Incorporate your model outputs into GTAP's analytical tools for deeper exploration.

- Visualize Data: Use integrated graphical tools to create visual representations of economic data, aiding in clearer interpretation.

- Filter and Aggregate Results: Filter results to concentrate on specific areas of interest, such as particular industries or countries, and aggregate detailed results into broader groups where a summary view is needed.

- Perform Comparative Analysis: Compare outputs across different scenarios to assess potential outcomes and policy impacts.
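The filtering, aggregation, and comparison steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not GTAP's own tooling: the record layout, the scenario names (`baseline`, `tariff_cut`), the helper functions, and all numbers are hypothetical and chosen only to show the mechanics.

```python
from collections import defaultdict

# Hypothetical model output: one record per scenario, region, and sector.
# The values are illustrative only; they are not GTAP results.
RESULTS = [
    {"scenario": "baseline", "region": "EU", "sector": "agriculture", "output": 100.0},
    {"scenario": "baseline", "region": "EU", "sector": "manufacturing", "output": 400.0},
    {"scenario": "baseline", "region": "USA", "sector": "agriculture", "output": 120.0},
    {"scenario": "baseline", "region": "USA", "sector": "manufacturing", "output": 500.0},
    {"scenario": "tariff_cut", "region": "EU", "sector": "agriculture", "output": 95.0},
    {"scenario": "tariff_cut", "region": "EU", "sector": "manufacturing", "output": 420.0},
    {"scenario": "tariff_cut", "region": "USA", "sector": "agriculture", "output": 126.0},
    {"scenario": "tariff_cut", "region": "USA", "sector": "manufacturing", "output": 505.0},
]


def filter_rows(rows, **criteria):
    """Keep only rows whose fields match every given criterion."""
    return [r for r in rows if all(r[k] == v for k, v in criteria.items())]


def aggregate(rows, by, value="output"):
    """Sum `value` over all rows that share the same `by` field."""
    totals = defaultdict(float)
    for r in rows:
        totals[r[by]] += r[value]
    return dict(totals)


def compare(rows, baseline, scenario, by):
    """Percent change of the aggregated value, scenario relative to baseline."""
    base = aggregate(filter_rows(rows, scenario=baseline), by)
    alt = aggregate(filter_rows(rows, scenario=scenario), by)
    return {key: 100.0 * (alt[key] - base[key]) / base[key] for key in base}


# Compare the two scenarios at regional and at sectoral level.
by_region = compare(RESULTS, "baseline", "tariff_cut", by="region")
by_sector = compare(RESULTS, "baseline", "tariff_cut", by="sector")
```

With these made-up numbers, `by_region["EU"]` is 3.0, meaning total EU output is 3 percent higher under the hypothetical `tariff_cut` scenario than under the baseline. Keeping filtering, aggregation, and comparison as separate functions lets the same comparison run at any level of detail by changing only the `by` argument.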

Tips for Effective Use

- Familiarize Yourself with GTAP Tools: Understanding the interface and capabilities of the GTAP tools deepens your analysis.

- Define Clear Objectives: Clarifying your research goals before analysis leads to more targeted and useful insights.

- Work Iteratively: Refine your analysis by revisiting assumptions and exploring different simulation parameters.

Key Elements of the Post-model Analysis

Post-model analysis in GTAP encompasses several critical components vital for comprehensive economic assessment.

Essential Components

- Data Normalization: Standardizing data outputs for consistency across analyses, facilitating comparisons.

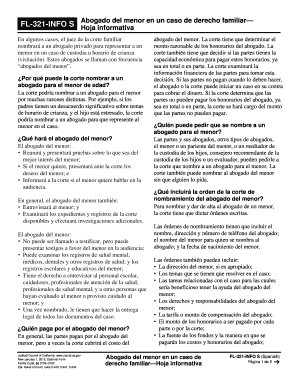

- Interactive Documentation: Access to contextual documentation within GTAP tools, aiding users in understanding functionalities and data interpretations.

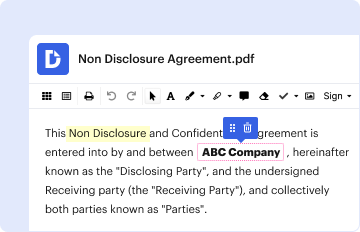

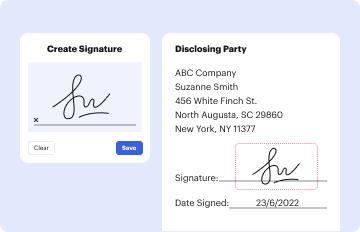

- Graphical User Interface (GUI): An intuitive GUI allows users to interact seamlessly with model outputs, enhancing user experience and analytical efficiency.
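As a rough illustration of the data normalization component listed above, the sketch below shows two common ways to put results on a comparable scale: indexing a series to a base value of 100, and converting levels to shares of a total. The function names and all figures are hypothetical, and the source does not say which normalization GTAP's tools apply, so treat this as one plausible reading rather than a description of the software.

```python
def to_index(series, base_key):
    """Rescale a {label: value} mapping so the base entry equals 100."""
    base = series[base_key]
    if base == 0:
        raise ValueError("base value must be non-zero")
    return {label: 100.0 * value / base for label, value in series.items()}


def to_shares(series):
    """Convert a {label: value} mapping of levels to shares that sum to 1."""
    total = sum(series.values())
    if total == 0:
        raise ValueError("total must be non-zero")
    return {label: value / total for label, value in series.items()}


# Hypothetical GDP levels by scenario (arbitrary currency units).
gdp_levels = {"baseline": 2000.0, "carbon_tax": 1980.0, "tariff_cut": 2030.0}
gdp_index = to_index(gdp_levels, base_key="baseline")

# Hypothetical export values by region (arbitrary currency units).
exports = {"EU": 50.0, "USA": 30.0, "China": 20.0}
export_shares = to_shares(exports)
```

Here `gdp_index` expresses each scenario relative to the baseline (baseline = 100), which makes scenarios comparable even when their underlying units or magnitudes differ.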

Integration with Model Framework

GTAP's post-model analysis stands out by embedding its tools within the modeling framework itself, in contrast to models that keep model code and analysis functions separate. This integration makes it faster to explore results and to iterate on an analysis, reducing the time and resources required.

Who Typically Uses the Post-Model Analysis in Large-Scale Models

A variety of users leverage post-model analysis in large-scale models, with GTAP serving different sectors and stakeholders.

Common User Groups

- Academic Researchers: Universities and research institutions use GTAP for academic studies and economic forecasting.

- Policy Makers and Government Agencies: These groups utilize post-model analysis to guide decision-making processes and public policy development, particularly concerning trade and environmental regulations.

- Industry Analysts and Consultants: Analysis results aid businesses and consultancies in strategic planning and market assessments, providing insights into economic trends and potential business impacts.

Important Terms Related to Post-Model Analysis

Understanding the following terminology makes it easier to use post-model analysis tools, such as those provided with GTAP, clearly and effectively.

Key Terms

- Scenario Analysis: The comparison of different projected economic scenarios to gauge potential outcomes.

- Aggregation Methods: Techniques for summarizing detailed data into broader categories for analysis.

- Sensitivity Analysis: A process that evaluates how changes in certain model inputs affect outcomes, crucial for determining model robustness.
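To make the idea of sensitivity analysis concrete, here is a minimal sketch that varies one input while holding the others fixed and records how the outcome responds. The `toy_import_change` function is a stand-in for a full model run and is purely an assumption for illustration; it is not a GTAP equation, and the parameter names and values are hypothetical.

```python
def toy_import_change(tariff_cut_pct, elasticity):
    """Toy response: percent change in imports for a given tariff cut.

    Stands in for a full model run; this is not a GTAP equation.
    """
    return elasticity * tariff_cut_pct


def sensitivity(model, fixed_args, param, values):
    """Run `model` once per value of `param`, keeping other inputs fixed."""
    return {v: model(**fixed_args, **{param: v}) for v in values}


# Vary the (hypothetical) elasticity while holding the tariff cut at 5 percent.
sweep = sensitivity(
    toy_import_change,
    fixed_args={"tariff_cut_pct": 5.0},
    param="elasticity",
    values=[1.0, 2.0, 4.0],
)

# The spread of outcomes is a simple indicator of how much the result
# depends on the parameter that was varied.
spread = max(sweep.values()) - min(sweep.values())
```

A large `spread` relative to the central result suggests that conclusions depend heavily on the chosen parameter value and should be reported together with that range.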

Explanation of Terms

- Normalization: Adjusting data to a common scale so that results can be compared across contexts or datasets; essential for consistent interpretation of results.

- Interactive Documentation: Embedded guides or help systems within the tool that provide user support and contextual explanations.

How to Obtain the Post-model Analysis in Large-Scale Models - GTAP

To acquire access to GTAP's post-model analysis capabilities, users can follow specific procedures set by Purdue University.

Acquisition Process

- Visit the Official GTAP Website: Initial information and contact details are provided on Purdue University’s GTAP portal.

- Complete Registration: Interested parties may need to register for access, potentially involving license agreements or subscription terms.

- Access Training Materials: Purdue offers training programs and materials to ensure effective use of GTAP tools, available through workshops or online resources.

Training and Support

- Workshops and Seminars: Offered regularly to update users on model developments and advanced analytical techniques.

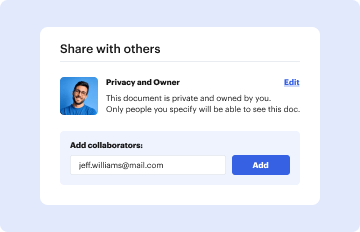

- User Community and Forums: A supportive community allowing users to share insights, seek advice, and collaborate on economic research projects.

Legal Use and Compliance

Adhering to legal standards is critical when employing GTAP's post-model analysis tools for research and policy formulation.

Legal Considerations

- Intellectual Property: Respect licensing agreements and terms of use associated with GTAP software and data.

- Data Confidentiality: Ensure compliance with data protection regulations, particularly when using private or sensitive datasets in analyses.

- Ethical Research Practices: Maintain integrity in research practices, ensuring transparency and accuracy in reporting findings.

Compliance Resources

- Purdue University Guidance: Official documentation and guidance from Purdue that helps users navigate legal requirements.

- Industry Standards: Familiarity with relevant industry standards and guidelines for economic modeling and data analysis.