Definition and Purpose of Job Partitioning and Checkpointing - Server11 INFN

Job partitioning and checkpointing are integral parts of the WP1 Workload Management System for the DataGrid project. Job partitioning breaks a large computational task into smaller sub-tasks that can be executed independently across multiple servers, which lets them run in parallel and can shorten overall execution time. Checkpointing complements it: the framework that monitors each partitioned job lets a task save its state and resume from that point after an interruption, so progress is not lost when a technical issue arises.
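As a rough illustration of the partitioning idea, the sketch below splits a job made of numbered steps into contiguous, near-equal chunks, one per sub-job. The function name `partition_steps` and the notion of a job as a simple range of step indices are assumptions made for this example; they are not taken from the WP1 system itself.

```python
from typing import List


def partition_steps(total_steps: int, num_subjobs: int) -> List[range]:
    """Split step indices 0..total_steps-1 into contiguous, near-equal chunks."""
    if total_steps < 0 or num_subjobs < 1:
        raise ValueError("total_steps must be >= 0 and num_subjobs >= 1")
    # Every sub-job gets `base` steps; the first `extra` sub-jobs get one more.
    base, extra = divmod(total_steps, num_subjobs)
    chunks = []
    start = 0
    for i in range(num_subjobs):
        size = base + (1 if i < extra else 0)
        if size == 0:
            break  # fewer steps than sub-jobs: do not create empty sub-jobs
        chunks.append(range(start, start + size))
        start += size
    return chunks


if __name__ == "__main__":
    for chunk in partition_steps(10, 3):
        print(list(chunk))
    # prints [0, 1, 2, 3], then [4, 5, 6], then [7, 8, 9]
```

Each returned range could then be handed to a separate server. Contiguous chunks are only one possible policy; a real scheduler might weight steps by their expected cost instead.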

Key Objectives

- Enhance computational efficiency by distributing workload across multiple servers.

- Provide resilience against failure through checkpointing.

- Facilitate task management by enabling parallel processing.

How to Use Job Partitioning and Checkpointing

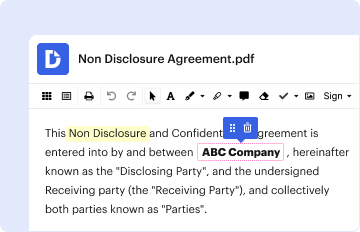

Using job partitioning and checkpointing starts with the Job Description Language (JDL), whose attributes define how a job is partitioned. When setting up a task, users specify attributes that determine how the partitions are created and managed. Dependencies between tasks are expressed as Directed Acyclic Graphs (DAGs), so that a task starts only after all of its prerequisite tasks have completed.
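To make the DAG idea concrete, here is a minimal sketch that uses Python's standard `graphlib` module (Python 3.9 or later) to derive an execution order in which every task follows its prerequisites. The task names and the `execution_order` helper are hypothetical, and the sketch does not reproduce JDL syntax or the scheduler used by the Workload Management System.

```python
from graphlib import TopologicalSorter

# Hypothetical task names; each key maps to the set of tasks it depends on.
dependencies = {
    "split_input": set(),
    "process_part_1": {"split_input"},
    "process_part_2": {"split_input"},
    "merge_results": {"process_part_1", "process_part_2"},
}


def execution_order(deps):
    """Return one valid order in which every task follows its prerequisites."""
    return list(TopologicalSorter(deps).static_order())


if __name__ == "__main__":
    print(execution_order(dependencies))
```

The two `process_part_*` tasks do not depend on each other, so a scheduler would be free to run them in parallel. If the dependencies contained a cycle, `graphlib` would raise `CycleError`, which is why the graph has to be acyclic.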

Step-by-Step Process

- Define Tasks: Use JDL attributes to set up tasks and their requirements.

- Setup Dependencies: Employ DAGs to structure task dependencies.

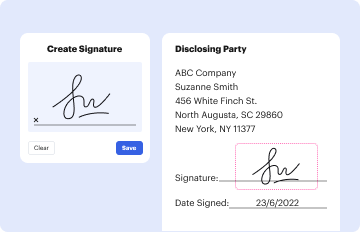

- Implement Checkpointing: Ensure tasks save their state to allow resumption post-interruption.
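The checkpointing step above can be sketched as follows. This is a minimal illustration under stated assumptions, not the DataGrid checkpointing API: the state is a small JSON file, the function names (`load_checkpoint`, `save_checkpoint`, `run_job`) are hypothetical, and the "job" simply sums its step indices so that the result is easy to check.

```python
import json
import os
import tempfile


def load_checkpoint(path):
    """Return the saved state, or a fresh state if no checkpoint exists."""
    if os.path.exists(path):
        with open(path, "r", encoding="utf-8") as f:
            return json.load(f)
    return {"next_step": 0, "partial_sum": 0}


def save_checkpoint(path, state):
    """Write to a temp file, then rename it over the checkpoint, so a
    reader never sees a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def run_job(path, total_steps, fail_at=None):
    """Sum the step indices, saving a checkpoint after every step.

    fail_at (hypothetical parameter) simulates an interruption just
    before the given step runs.
    """
    state = load_checkpoint(path)
    for step in range(state["next_step"], total_steps):
        if fail_at is not None and step == fail_at:
            raise RuntimeError(f"simulated interruption at step {step}")
        state["partial_sum"] += step
        state["next_step"] = step + 1
        save_checkpoint(path, state)
    return state["partial_sum"]


if __name__ == "__main__":
    ckpt = os.path.join(tempfile.mkdtemp(), "job.ckpt")
    try:
        run_job(ckpt, 10, fail_at=6)
    except RuntimeError as err:
        print(err)
    print(run_job(ckpt, 10))  # resumes at step 6 and prints 45
```

Saving after every step keeps the example simple. In practice the checkpoint interval is a trade-off between the cost of writing state and the amount of work lost on failure.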

Importance of Job Partitioning and Checkpointing

Implementing job partitioning and checkpointing offers significant advantages, particularly for projects that require high computational power and efficiency. Decomposing tasks into smaller parts makes it easier to manage resources, increases fault tolerance, and keeps a job progressing after a failure.

Benefits

- Increased speed and efficiency in processing large tasks.

- Improved resource management that maximizes server utilization.

- Enhanced fault tolerance through systematic checkpointing.

Key Elements and Terminology

Understanding the terminology used in job partitioning and checkpointing is crucial for effective implementation. Some important terms include:

- Partitionable Jobs: Tasks that can be divided into smaller units that run independently of one another.

- Checkpointing: The process of saving the state of a job to enable resumption after interruptions.

- Directed Acyclic Graphs (DAGs): Graphs in which nodes are tasks and edges are dependencies, so that each task runs only after its prerequisites have completed.

Legal and Compliance Considerations

In any workload management system, ensuring legal compliance with relevant data protection and processing standards is vital. It’s important to understand the legal frameworks governing data management within your jurisdiction, particularly if the system is deployed across various geographic locations.

Compliance Checks

- Adhere to data protection regulations, especially when using checkpointing for sensitive information.

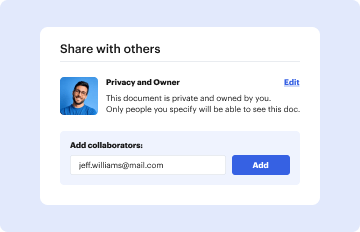

- Ensure transparency in job management and execution processes.

Examples and Case Studies

To underscore the practical application of job partitioning and checkpointing, consider real-world scenarios such as large-scale data analysis in academic research or financial modeling. These processes benefit greatly from the parallel execution and fault tolerance offered by partitioning and checkpointing.

Real-World Implications

- Academic Research: Allows for the processing of large datasets with increased efficiency.

- Financial Modeling: Offers robust error handling, ensuring that financial computations are not lost due to failures.

Integration and Compatibility

Job partitioning and checkpointing can be integrated across different software and platforms, with a focus on compatibility with existing systems. This includes integration into cloud-based environments for enhanced scalability and flexibility.

Software and Platforms

- Integration with cloud storage solutions such as Google Drive.

- Compatibility with various cloud platforms designed for high-performance computing.

Future Enhancements and Variations

The technology behind job partitioning and checkpointing is continually evolving. Organizations should be aware of updates and new variations that might offer improved performance or additional features.

Emerging Trends

- Advanced algorithms for better task partitioning.

- Enhanced checkpointing mechanisms that support more complex job scenarios.

Understanding these aspects of job partitioning and checkpointing helps organizations optimize their computational workflows.