Whether you already work with TXT files regularly or are handling the format for the first time, editing one should not feel like a challenge. Different formats may require particular applications to open and modify them effectively. Yet if you need to quickly set a pattern in a TXT file as part of your usual workflow, it is worth finding a document multitool that handles all such operations without extra effort.

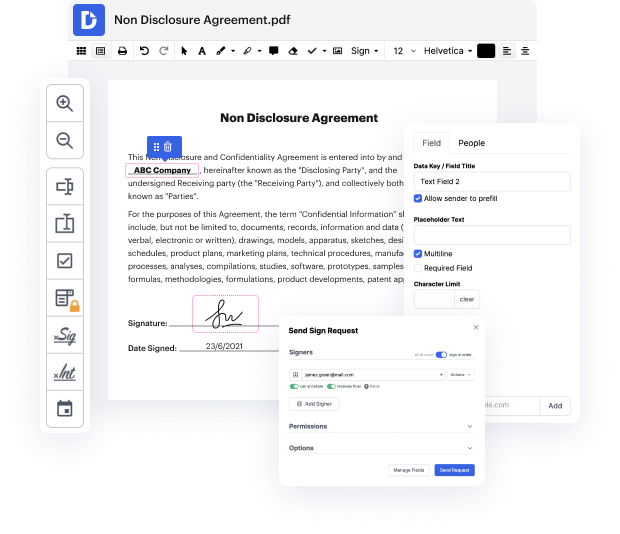

Try DocHub for streamlined editing of TXT and other document formats. Our platform makes document processing effortless, no matter how much or how little experience you have. With all the tools you need to work in any format, you won’t have to jump between editing windows for each document. Easily create, edit, annotate, and share your documents to save time on minor editing tasks. Just sign up for a new DocHub account, and you can begin working right away.

See an improvement in document processing efficiency with DocHub’s simple feature set. Edit any document quickly and easily, irrespective of its format. Enjoy all the benefits that come from our platform’s efficiency and convenience.

Darren Taylor: In this video, we are going to discuss a small but quite powerful file within your website, known as the robots.txt file. Now, it’s an important file when it comes to technical SEO, and we’re going to explore what the file does, how it works, and the implications it has on your SEO. Coming up. [music] Hey there, guys. Darren Taylor of thebigmarketer.co.uk here, and my job is to teach you all about search engine marketing. If that’s up your street, you should consider subscribing to my channel. Today, we are talking about the robots.txt file, which is a small file, held on all websites, that instructs Google and other crawlers how to handle the URLs and sections of your website. First of all, what is the robots.txt file and where can you find it? Well, if you go to pretty much all websites out there -- you can try this now if you like -- and go to the base URL, and then do a forward slash, and then type in robots.txt, you’ll be taken to a plain text page showing a few
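The lookup described in the transcript (base URL, a forward slash, then robots.txt) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `example.com` addresses, the `robots_url` and `build_parser` helper names, and the sample rules are hypothetical and do not come from the video. The sample file is parsed from a string so the example runs without a network connection.

```python
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


def robots_url(page_url: str) -> str:
    """Return the robots.txt address for the site that hosts page_url."""
    # The leading slash makes urljoin replace the whole path,
    # keeping only the scheme and host of the original URL.
    return urljoin(page_url, "/robots.txt")


# Hypothetical robots.txt content (an assumption for illustration only).
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /
"""


def build_parser(robots_text: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

print(robots_url("https://example.com/blog/post?id=1"))
print(parser.can_fetch("*", "https://example.com/admin/login"))
print(parser.can_fetch("*", "https://example.com/blog/post"))
```

With these sample rules, the first call prints the robots.txt address at the site root, and the two `can_fetch` calls report that the `/admin/` page is blocked for all crawlers while the blog page is allowed. Python's standard parser applies the first matching rule, so the order of `Disallow` and `Allow` lines matters in this sketch.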

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
