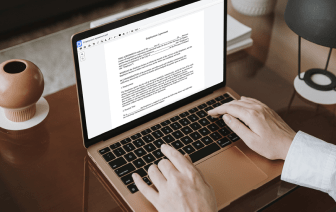

If you edit documents in different formats every day, the versatility of your document tools matters a lot. If your tools work with only a few popular formats, you may find yourself switching between applications to embed text in ABW and manage other document formats. To get rid of that hassle, choose a platform that can handle any file format.

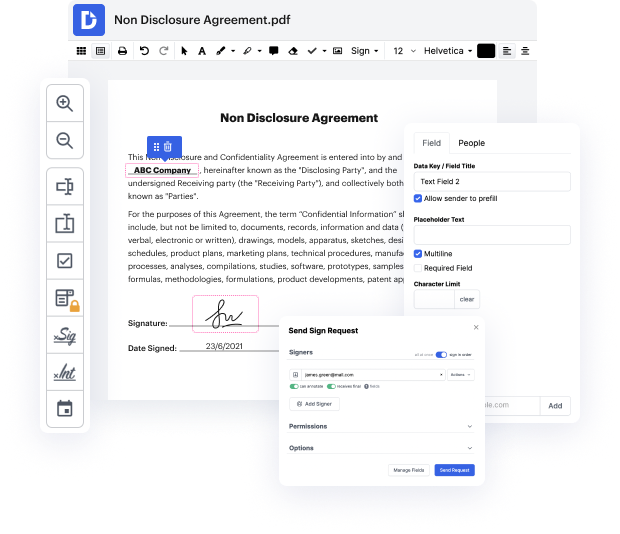

With DocHub, you can concentrate on the actual document editing. You do not need to juggle applications to work with different formats. It lets you modify an ABW file as easily as any other format. Create, edit, and share ABW documents in a single online editing platform that saves you time and boosts your efficiency. All you have to do is sign up for a free account at DocHub, which takes only a few minutes.

You do not need to become an editing multitasker with DocHub. Its feature set is sufficient for fast document editing, regardless of the format you want to revise. Start by registering a free account to see how easy document management can be with a tool designed specifically to meet your needs.

In this NLP playlist we have covered text representation techniques from label encoding to TF-IDF. Today we are going to talk about word embeddings. Bag of words and TF-IDF have certain limitations, which we discussed in previous videos. One is that the vector size can be really big, so it may consume a lot of compute resources, memory, and so on. Let's say you have a vocabulary of 100,000 or 200,000 words: the vector for each document would then have 100,000 or more dimensions, and that may be too much. The representation is also sparse, meaning most of the values in that vector are 0, so it is not a very efficient representation. The other problem we saw was this: let's say you have two sentences, "I need help" and "I need assistance". These are similar sentences, and you expect their vector representations to be similar, but since TF-IDF and bag of words are count-based methods, the vector representations might not be similar. Here you can see there
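The two limitations described in the transcript, sparse vectors and no notion of word similarity, can be illustrated with a minimal sketch. It uses only the Python standard library and a hypothetical four-sentence corpus; the helper names (`build_vocabulary`, `bag_of_words`, `cosine`) are made up for this illustration and are not taken from the video.

```python
import math
from collections import Counter


def build_vocabulary(documents):
    """Map each distinct lowercase token to a column index."""
    tokens = sorted({tok for doc in documents for tok in doc.lower().split()})
    return {tok: i for i, tok in enumerate(tokens)}


def bag_of_words(text, vocabulary):
    """Return a count vector with one slot per vocabulary word."""
    vector = [0] * len(vocabulary)
    for tok, count in Counter(text.lower().split()).items():
        if tok in vocabulary:
            vector[vocabulary[tok]] = count
    return vector


def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


# Hypothetical toy corpus; a real vocabulary would be far larger.
corpus = [
    "I need help",
    "I need assistance",
    "the weather is sunny today",
    "she ordered pizza for dinner",
]
vocab = build_vocabulary(corpus)

# Sparsity: a three-word sentence fills only three slots of the vector.
sentence_vec = bag_of_words("I need help", vocab)
zero_fraction = sentence_vec.count(0) / len(sentence_vec)
print(f"vocabulary size: {len(vocab)}, fraction of zeros: {zero_fraction:.2f}")

# No semantics: "help" is as far from "assistance" as it is from "pizza".
help_vec = bag_of_words("help", vocab)
assistance_vec = bag_of_words("assistance", vocab)
pizza_vec = bag_of_words("pizza", vocab)
print("help vs assistance:", cosine(help_vec, assistance_vec))
print("help vs pizza:", cosine(help_vec, pizza_vec))
```

In this sketch, any similarity between "I need help" and "I need assistance" comes only from the shared words "I" and "need". The count-based vectors treat "help" and "assistance" as unrelated columns, which is the gap word embeddings are meant to close.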