Definition & Meaning

"A Fast Counting Data Compression Algorithm" refers to a class of algorithms that speed up data compression by using counting methods, computational techniques intended to improve both compression efficiency and processing speed. Unlike traditional statistical compression methods, counting techniques prioritize fast processing, which helps in scenarios that require quick compression and decompression. These algorithms aim to deliver compression ratios similar to those of advanced block-sorting methods while adding capabilities such as efficiently merging compressed files.
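
To make the idea of counting concrete, here is a minimal Python sketch. It is not the algorithm described above, whose internals this page does not specify. It only assumes a simple order-0 model: count how often each byte occurs, then estimate the ideal coded size those counts imply. The function names `count_symbols` and `estimated_bits` are hypothetical.

```python
import math
from collections import Counter

def count_symbols(data: bytes) -> Counter:
    """Count how often each byte value occurs in the input."""
    return Counter(data)

def estimated_bits(counts: Counter) -> float:
    """Ideal order-0 coded size in bits implied by the counts.

    Each symbol with count c out of n costs -log2(c / n) bits per occurrence.
    This is an illustrative estimate, not the output size of any real codec.
    """
    total = sum(counts.values())
    return sum(-c * math.log2(c / total) for c in counts.values())

sample = b"abracadabra"
counts = count_symbols(sample)
print(f"{estimated_bits(counts):.1f} bits estimated vs {8 * len(sample)} bits raw")
```

Frequent symbols get short codes and rare symbols get long ones, so skewed counts translate into a smaller estimate than the raw 8 bits per byte.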

Key Elements of the Fast Counting Data Compression Algorithm

The fast counting data compression algorithm has three core elements:

- Fast Searching Capabilities: The algorithm's structure allows for efficient searching within compressed files, eliminating the need for decompression before conducting searches.

- Improved Compression Efficiency: The method focuses on optimizing both the speed and the ratio of compression, enabling users to store data more efficiently.

- Merging of Compressed Files: Compressed files can be merged without decompressing them first, which avoids a decompress-and-recompress cycle and helps preserve the integrity and speed of data management processes.
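
One reason count-based representations lend themselves to merging can be sketched in a few lines of Python. This is an illustration under a strong assumption (that each file is summarized by per-symbol counts), not the merge procedure of the algorithm itself. The function name `merge_counts` is hypothetical.

```python
from collections import Counter

def merge_counts(a: Counter, b: Counter) -> Counter:
    """Combine two count tables by adding the counts symbol by symbol."""
    return a + b

# Count tables built separately for two pieces of data.
part1 = Counter(b"hello ")
part2 = Counter(b"world")

merged = merge_counts(part1, part2)

# The merged table equals the table built from the concatenated data,
# so the raw data never has to be revisited to obtain it.
print(merged == Counter(b"hello world"))
```

Because addition of counts does not depend on the order in which the inputs were processed, the combined table can be built from the two summaries alone.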

How to Use the Fast Counting Data Compression Algorithm

Using the fast counting data compression algorithm typically involves the following steps:

- Data Preparation: Ensure that your data is organized and ready for compression. This involves cleaning and formatting the data to match the algorithm's requirements.

- Algorithm Selection: Choose the appropriate version of the fast counting algorithm based on your data size and required processing speed.

- Compression Execution: Run the algorithm to compress the data. During this phase, monitor performance metrics such as compression time and compression ratio to confirm efficiency.

- Testing and Validation: After compression, conduct tests to verify that the data integrity is maintained and that the compression ratios meet your expectations.
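
The execution and validation steps above can be sketched as a small round-trip check. Since this page does not name an implementation of the fast counting algorithm, the sketch uses Python's standard `zlib` module as a stand-in compressor. The function name `compress_and_validate` and the sample data are assumptions made for illustration.

```python
import hashlib
import time
import zlib

def compress_and_validate(data: bytes, level: int = 6) -> dict:
    """Compress data, time the call, and verify a lossless round trip.

    zlib stands in for the compressor here; swap in another codec as needed.
    """
    start = time.perf_counter()
    compressed = zlib.compress(data, level)
    elapsed = time.perf_counter() - start

    restored = zlib.decompress(compressed)
    return {
        "ratio": len(data) / len(compressed),  # original size / compressed size
        "seconds": elapsed,                    # compression time only
        "intact": hashlib.sha256(restored).digest() == hashlib.sha256(data).digest(),
    }

report = compress_and_validate(b"timestamp=1 status=OK\n" * 1000)
print(report)
```

Comparing digests rather than trusting the absence of errors gives an explicit integrity check, and the ratio and timing values give a baseline to compare against expectations.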

Who Typically Uses the Fast Counting Data Compression Algorithm

This algorithm is typically used by professionals and institutions that handle large volumes of data and need to process it quickly:

- Tech Companies: Organizations in software development or IT that prioritize speed and efficiency in data handling.

- Research Institutions: Universities and research facilities that manage large datasets for computational studies.

- Corporate Enterprises: Businesses that need robust data management solutions to handle customer information and operational data efficiently.

Examples of Using the Fast Counting Data Compression Algorithm

The following examples show how the fast counting algorithm can be applied in different settings:

- Database Management: Companies using large databases can apply the algorithm to compress logs and transaction data, improving storage efficiency.

- Real-Time Data Processing: Businesses that require instant data processing, such as stock trading platforms, benefit from the algorithm's rapid compression and decompression capabilities.

- Scientific Computation: Researchers processing complex and sizeable datasets for simulations or analysis can enhance data management through efficient compression.
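
For the log and real-time cases above, data usually arrives in pieces rather than as one buffer. The following sketch shows incremental compression of a stream of log lines. It uses Python's standard `zlib` module as a stand-in, not the fast counting algorithm, and the function name `compress_stream` and the log format are hypothetical.

```python
import zlib

def compress_stream(chunks):
    """Yield compressed output incrementally as input chunks arrive."""
    compressor = zlib.compressobj()
    for chunk in chunks:
        out = compressor.compress(chunk)
        if out:  # the compressor may buffer input and return nothing yet
            yield out
    yield compressor.flush()  # emit whatever is still buffered

# Hypothetical transaction log lines produced one at a time.
log_lines = (f"txn={i} amount=100\n".encode() for i in range(1000))
compressed = b"".join(compress_stream(log_lines))
print(len(compressed), "bytes after compression")
```

Processing chunk by chunk keeps memory use bounded regardless of how long the stream runs.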

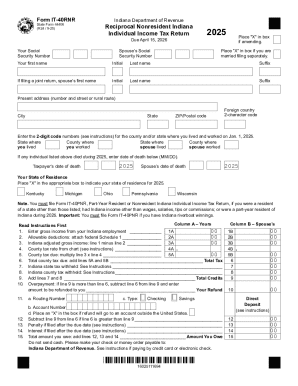

Software Compatibility (TurboTax, QuickBooks, etc.)

The fast counting data compression algorithm can be integrated with various software platforms and existing systems:

- Business Software: Tools like QuickBooks that handle extensive financial records can leverage the algorithm for compressing financial data logs.

- Tax Software: Although the algorithm mainly benefits large datasets, integrating it into tax software such as TurboTax to compress archival data can streamline data handling.

Versions or Alternatives to the Fast Counting Data Compression Algorithm

While the fast counting data compression algorithm is efficient, there are alternative methods and versions tailored to specific needs:

- Block Sorting Algorithms: Provide comparable compression ratios with different computational demands.

- Huffman Coding Enhancements: Build on a similar foundational principle, assigning codes according to how often each symbol occurs, with adjustments for specific data types or processing speeds.
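
Both families of alternatives are available in Python's standard library, so they can be compared directly on your own data. In this sketch, `bz2` represents block sorting (it is based on the Burrows-Wheeler transform) and `zlib` represents a Huffman-based scheme (DEFLATE combines LZ77 with Huffman coding). The function name `compare_ratios` and the sample text are assumptions, and the fast counting algorithm itself is not included in the comparison.

```python
import bz2
import zlib

def compare_ratios(data: bytes) -> dict:
    """Return original size / compressed size for two standard-library codecs."""
    return {
        "bz2 (block sorting)": len(data) / len(bz2.compress(data)),
        "zlib (LZ77 + Huffman)": len(data) / len(zlib.compress(data)),
    }

text = b"the quick brown fox jumps over the lazy dog\n" * 500
for name, ratio in compare_ratios(text).items():
    print(f"{name}: {ratio:.1f}x")
```

Results depend heavily on the input, so a comparison like this is most useful when run on a representative sample of the data you intend to compress.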

Legal Use of the Fast Counting Data Compression Algorithm

Adhering to legal guidelines when using data compression algorithms is essential:

- Ensure compliance with data protection laws, such as the GDPR or CCPA, especially when compressing sensitive or personal data.

- Organizations must ensure that any data shared after compression meets regulatory requirements for data integrity and security, and preserves the confidentiality and privacy that regulators expect.