Definition & Meaning

The "Customizable k-Anonymity Model for Protecting Location Privacy" is a privacy-preserving approach for safeguarding the location data of people who use location-based services (LBSs). It applies k-anonymity to location updates: each message a user sends is cloaked so that its location cannot be distinguished from those of at least k-1 other users, which protects against re-identification. The model emphasizes user control: each user chooses their own anonymity level and the spatial and temporal tolerances they are willing to accept. In short, it anonymizes real-time location data while keeping the loss in service quality within the bounds each user has set.

How to Use the Model

To use the customizable k-anonymity model effectively, individuals and organizations need to understand its core components. Users specify their anonymity requirements and the spatial and temporal inaccuracy they can tolerate. The model then applies a spatio-temporal cloaking algorithm, such as CliqueCloak, to generalize location data and preserve user privacy. This involves the following steps:

- Specify Anonymity Level: Users choose k, the minimum number of users (themselves included) among whom their location must be indistinguishable.

- Determine Tolerances: Set how much spatial imprecision and how much delay are acceptable; larger tolerances make it easier to find enough other users to hide among.

- Implement Cloaking Algorithm: Use software that supports the spatio-temporal cloaking algorithm to process location data.

- Monitor Performance: Evaluate how well the system preserves privacy and how much it affects service performance.
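The two user-facing settings above can be captured in a small record. The sketch below is a minimal illustration, assuming planar coordinates in metres and timestamps in seconds; the class name `AnonymityRequest` and the fields `k`, `dx`, `dy`, and `dt` are hypothetical, not part of any published API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnonymityRequest:
    """One location update plus the user's privacy preferences (hypothetical schema)."""
    user_id: str
    x: float   # reported position, assumed to be metres in a projected coordinate system
    y: float
    t: float   # timestamp in seconds
    k: int     # anonymity level: hide among at least k-1 other users
    dx: float  # spatial tolerance along x (metres)
    dy: float  # spatial tolerance along y (metres)
    dt: float  # temporal tolerance: how long (seconds) the request may be delayed

    def __post_init__(self) -> None:
        # Reject nonsensical settings early; k = 1 means no anonymity at all.
        if self.k < 1:
            raise ValueError("k must be at least 1")
        if min(self.dx, self.dy, self.dt) < 0:
            raise ValueError("tolerances must be non-negative")

    def constraint_box(self) -> tuple[float, float, float, float]:
        """Largest area the cloaked region may cover: (min_x, min_y, max_x, max_y)."""
        return (self.x - self.dx, self.y - self.dy, self.x + self.dx, self.y + self.dy)

    def deadline(self) -> float:
        """Latest time by which the request must be cloaked and forwarded."""
        return self.t + self.dt
```

For example, `AnonymityRequest("alice", 100.0, 200.0, 0.0, k=5, dx=50.0, dy=50.0, dt=30.0)` describes a user who wants to be hidden among at least four others, accepts a region up to 100 m by 100 m centred on her position, and tolerates a 30-second delay.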

Steps to Complete the Model Implementation

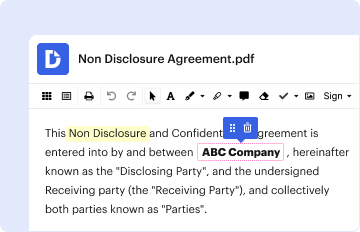

- Initial Setup: Install and configure the necessary software or platform that supports the k-anonymity model.

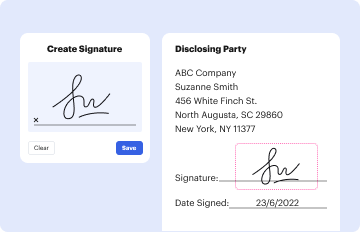

- User Configuration: Allow users to set their levels of anonymity and inaccuracy tolerances through an intuitive interface.

- Data Processing: Implement the CliqueCloak algorithm to process and cloak user location data in real time.

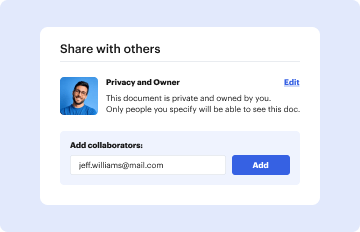

- Integration with Services: Ensure compatibility and integration with existing LBSs for seamless data exchange.

- Testing: Regularly test the system to measure the balance between privacy and service performance.

- Feedback Mechanism: Collect user feedback to continually refine and adjust the system for better performance and privacy protection.
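The data-processing step can be illustrated with a simplified, greedy variant of the clique idea behind CliqueCloak. Pending requests are treated as nodes in a graph, and two requests are connected when each one's position lies inside the other's spatial tolerance box. When a group of mutually connected requests is at least as large as the largest k among its members, all of them can be released with the group's minimum bounding rectangle (MBR). This is a sketch under stated assumptions, not the published algorithm: it ignores time, uses hypothetical names (`Msg`, `try_cloak`), and its greedy search may miss a valid group that an exhaustive clique search would find.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Msg:
    """A pending location update (hypothetical schema)."""
    mid: str   # request id
    x: float   # position
    y: float
    k: int     # requested anonymity level
    dx: float  # spatial tolerance along x
    dy: float  # spatial tolerance along y


def in_box(m: Msg, other: Msg) -> bool:
    """True if m's position lies inside other's spatial tolerance box."""
    return abs(m.x - other.x) <= other.dx and abs(m.y - other.y) <= other.dy


def adjacent(a: Msg, b: Msg) -> bool:
    """Edge in the constraint graph: each request lies in the other's tolerance box."""
    return in_box(a, b) and in_box(b, a)


def mbr(group: list[Msg]) -> tuple[float, float, float, float]:
    """Minimum bounding rectangle (min_x, min_y, max_x, max_y) of the group's positions."""
    xs = [m.x for m in group]
    ys = [m.y for m in group]
    return (min(xs), min(ys), max(xs), max(ys))


def try_cloak(new: Msg, pending: list[Msg]):
    """Greedily grow a clique around `new`; return (members, MBR), or None if k is not yet met."""
    # Consider adjacent requests nearest-first so the cloaked region stays small.
    candidates = sorted(
        (m for m in pending if m.mid != new.mid and adjacent(new, m)),
        key=lambda m: (m.x - new.x) ** 2 + (m.y - new.y) ** 2,
    )
    clique = [new]
    for cand in candidates:
        # Stop as soon as every member's k is satisfied by the group size.
        if len(clique) >= max(m.k for m in clique):
            break
        # Keep the group a clique: the candidate must be adjacent to every member.
        if all(adjacent(cand, c) for c in clique):
            clique.append(cand)
    if len(clique) >= max(m.k for m in clique):
        return clique, mbr(clique)
    return None  # keep waiting for more requests (or until the deadline passes)
```

Because every member's position lies inside every member's tolerance box, and those boxes are axis-aligned rectangles, the MBR of the group always fits inside each member's box, so no user's spatial tolerance is exceeded. The same function also gives a simple test metric: the fraction of requests for which `try_cloak` eventually succeeds.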

Key Elements of the Model

- Anonymity Level (k): The key parameter: each cloaked location must be shared by at least k users, so a user cannot be distinguished from at least k-1 others.

- Spatio-Temporal Cloaking: The technique of enlarging a reported location into a region and, when necessary, delaying it in time so that it meets the specified anonymity requirements.

- User Customization: Allows individualized settings for anonymity and accuracy, enhancing user control over their data privacy.

- Real-Time Processing: The capability to anonymize location data as it is generated, ensuring immediate privacy protection.

- Compatibility with LBSs: Keeps cloaked regions and delays within each user's tolerances so that location-based services, which depend on reasonably accurate data, remain usable.
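Real-time processing implies that a request cannot wait indefinitely for enough other users to arrive. One way to enforce the temporal tolerance is a priority queue ordered by each request's deadline (its timestamp plus its temporal tolerance), from which expired requests are dropped. The `PendingQueue` class below is a hypothetical helper, assuming request ids are unique; what a real system does with an expired request (drop it, or notify the user) is a design decision left open here.

```python
import heapq


class PendingQueue:
    """Not-yet-anonymized requests, ordered by deadline (hypothetical helper).

    Assumes request ids are unique; entries are (deadline, request_id).
    """

    def __init__(self) -> None:
        self._heap: list[tuple[float, str]] = []
        self._live: set[str] = set()

    def add(self, request_id: str, t: float, dt: float) -> None:
        """Queue a request sent at time t that tolerates a delay of dt."""
        heapq.heappush(self._heap, (t + dt, request_id))
        self._live.add(request_id)

    def remove(self, request_id: str) -> None:
        """Mark a request as cloaked and forwarded; its heap entry is discarded lazily."""
        self._live.discard(request_id)

    def expire(self, now: float) -> list[str]:
        """Pop and return ids whose deadline passed before they could be cloaked."""
        expired = []
        while self._heap and self._heap[0][0] < now:
            _, rid = heapq.heappop(self._heap)
            if rid in self._live:  # skip entries already removed after cloaking
                self._live.discard(rid)
                expired.append(rid)
        return expired

    def __len__(self) -> int:
        return len(self._live)
```

Requests that are successfully cloaked are passed to `remove`, and calling `expire(now)` periodically returns the ids that missed their deadline. Lazy deletion keeps `remove` cheap at the cost of leaving stale entries in the heap until they reach the top.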

Who Typically Uses the Model

The customizable k-anonymity model is primarily utilized by individuals and organizations engaged in safeguarding user privacy in digital environments. Common users include:

- Mobile Application Developers: Integrate the model to protect users' location data without diminishing app functionality.

- Geolocation Service Providers: Employ the model to enhance customer trust by keeping customers' location data confidential.

- Research Institutions: Use the model for studies requiring anonymized geolocation data, supporting compliance with privacy laws.

- Public Sector Organizations: Government bodies utilizing location data for urban planning or emergency services while maintaining citizen privacy.

Legal Use and Compliance

Employing the customizable k-anonymity model can support compliance with privacy regulations such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA), although k-anonymity alone does not guarantee that data meets a regulation's legal standard for anonymization. Organizations should:

- Data Processing: Ensure that processing complies with legal standards for anonymization and data protection.

- User Consent: Obtain explicit consent from users, where the applicable law requires it, for the processing and anonymization of their location data.

- Transparency: Communicate clearly with users about how their data is used and anonymized.

- Auditing and Monitoring: Regularly audit processes to ensure compliance with evolving data protection laws and policies.

Why Use the Model

The use of a customizable k-anonymity model is driven by the increasing need for privacy in digital environments. Key benefits include:

- Enhanced User Privacy: Providing individuals with confidence that their location data is protected.

- Compliance with Regulations: Helps organizations meet legal standards on data protection.

- Increased Trust: Builds user trust in technology by prioritizing their privacy.

- Flexible and Scalable: Suited for various applications, allowing organizations to tailor privacy solutions to their specific needs.

Examples of Using the Model

- Healthcare Apps: Protect patient location data while allowing access to health services based on geographical preferences.

- Ride-Sharing Applications: Anonymize rider locations to prevent tracking while enabling efficient ride matching.

- Social Networking Platforms: Use anonymized location data to provide location-based services without exposing user identities.

- Retail Apps: Offer personalized shopping experiences by utilizing anonymized location data to recommend nearby products or stores.

Important Terms Related to the Model

- k-Anonymity: A property of an anonymized dataset: each individual's record is indistinguishable from those of at least k-1 other individuals with respect to identifying attributes.

- Spatio-Temporal Data: Information that relates to geographic locations and time, often used in LBSs.

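- CliqueCloak Algorithm: A spatio-temporal cloaking algorithm designed to implement the customizable k-anonymity model for location privacy. It models pending requests as a graph and cloaks groups of mutually compatible requests (cliques) together.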

- User Tolerances: The acceptable levels of data inaccuracy as defined by users, allowing some flexibility to maintain privacy.

- Location-Based Services (LBSs): Services that utilize geographic data of users to provide contextual information or functionality.
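The k-anonymity definition can be checked directly on released output, which is also useful for auditing. The sketch below assumes each released record is a hypothetical `(user_id, region)` pair, where `region` is any hashable description of a cloaked area (for example, an MBR tuple); it counts distinct users per region and reports the regions shared by fewer than k users.

```python
from collections import defaultdict


def find_violations(records, k):
    """Return regions that contain fewer than k distinct users, sorted.

    records: iterable of (user_id, region) pairs; region must be hashable and orderable.
    """
    users_per_region = defaultdict(set)
    for user_id, region in records:
        users_per_region[region].add(user_id)  # a set counts each user once per region
    return sorted(region for region, users in users_per_region.items() if len(users) < k)


def is_k_anonymous(records, k):
    """True if every released region is shared by at least k distinct users."""
    return not find_violations(records, k)
```

Counting distinct users rather than records matters: a single user who sends several updates from the same region must not be counted as several people.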