When your day-to-day work involves plenty of document editing, you know that every document format requires its own approach and, in some cases, specific applications. Handling a seemingly simple TXT file can sometimes grind the whole process to a halt, especially when you are trying to edit with insufficient tools. To prevent these difficulties, find an editor that can cover your requirements regardless of the file format and void a URL in a TXT file with zero roadblocks.

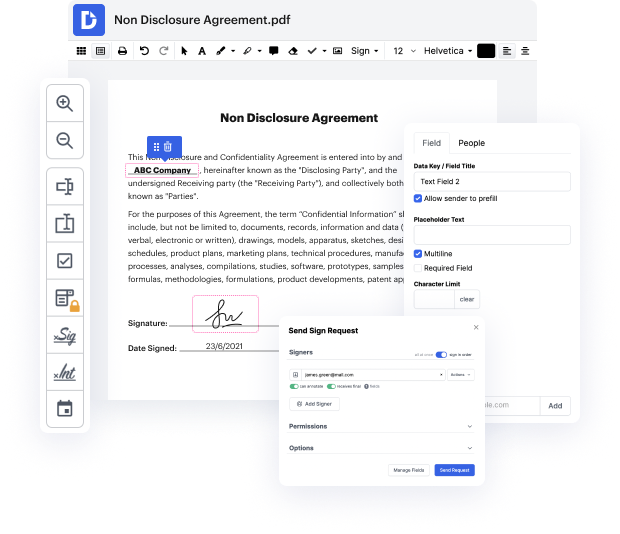

With DocHub, you work with an editing multitool for virtually any occasion or document type. Spend less time navigating your old software’s functionality and learn our intuitive interface as you work. DocHub is a streamlined online editing platform that handles your document processing requirements for any file type, including TXT. Open it and go straight to productivity; no prior training or guide reading is needed to benefit from what DocHub brings to document management. Start by taking a few moments to register your account.

See improvements in your document processing right after you open your DocHub account. Save time on editing with a single solution that helps you be more productive with any document format you need to work with.

CUTTS: Okay. I wanted to talk to you today about robots.txt. One complaint that we often hear is, "I blocked Google from crawling this page in robots.txt, and you clearly violated that robots.txt by crawling that page, because it's showing up in Google search results." A very common complaint, and so, here's how you can debug that. We've had the same robots.txt handling for years and years and years. And we haven't found any bugs in it for several years, and so, most of the time, what's happening is this. When someone's saying, "I blocked example.com/go in robots.txt," it turns out that the snippet that we return in the search results looks like this. And you'll notice, unlike most search results, there's not some text here. Well, the reason is that we didn't really crawl this page. We did abide by robots.txt. You told us this page is blocked, so we did not fetch this page. Instead, this is an uncrawled URL. It's a URL reference. We saw a link to it, but we didn't fetch the page itself
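To check whether a rule like the one described in the transcript blocks a given URL, you can test it locally. The following is a minimal sketch using Python's standard `urllib.robotparser` module. The robots.txt content, the `is_crawl_allowed` helper name, and the `/about` path are assumptions made for illustration; this is not how Google's crawler is implemented.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content (an assumption for this example):
# it blocks every crawler from paths that start with /go.
ROBOTS_TXT = """\
User-agent: *
Disallow: /go
"""


def is_crawl_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() accepts an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("http://example.com/go", "http://example.com/about"):
        allowed = is_crawl_allowed(ROBOTS_TXT, "Googlebot", url)
        print(url, "allowed" if allowed else "blocked")
```

A "blocked" result only means a compliant crawler will not fetch the page. As the transcript points out, the URL can still appear in search results as an uncrawled reference when other pages link to it.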

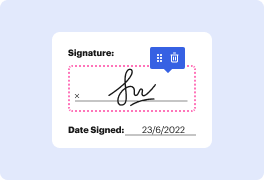

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
