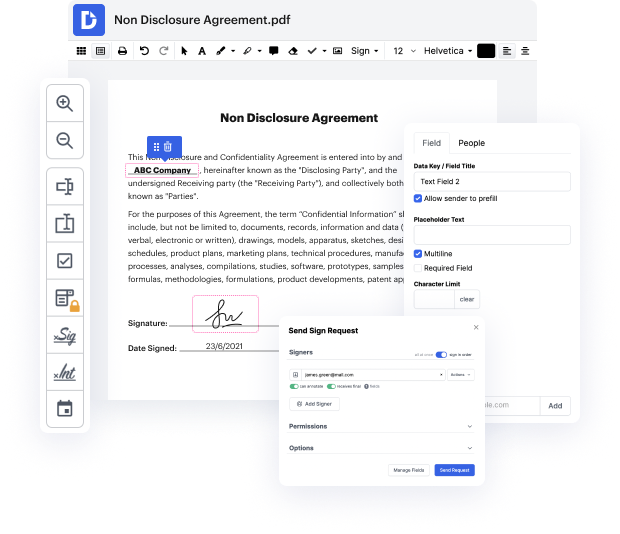

Searching for a specialized tool that handles a particular format can be time-consuming. Despite the huge number of online editors available, not all of them support the TXT format, and fewer still let you make adjustments to your files. To make matters worse, not all of them give you the security you need to protect your devices and documentation. DocHub is a perfect answer to these challenges.

DocHub is a well-known online solution that covers all of your document editing needs and safeguards your work with enterprise-level data protection. It supports different formats, including TXT, and helps you modify such documents easily and quickly with a rich and user-friendly interface. Our tool complies with important security regulations, like GDPR, CCPA, PCI DSS, and Google Security Assessment, and keeps improving its compliance to guarantee the best user experience. With everything it offers, DocHub is the most reputable way to void an issue in a TXT file and manage all of your individual and business documentation, no matter how sensitive it is.

As soon as you complete all of your modifications, you can set a password on your edited TXT to ensure that only authorized recipients can open it. You can also save your paperwork with a detailed Audit Trail to find out who applied what edits and at what time. Opt for DocHub for any documentation that you need to adjust safely and securely. Sign up now!

How to fix "Blocked by robots.txt" in the Page indexing report in the latest Google Search Console. In this video session, I'm going to show you how to test and troubleshoot this particular issue that your website may be having with Google.

When you're looking at the Page indexing report, you simply press on "Blocked by robots.txt", and Google shows you some of the URLs. The first line of action, obviously, is to press on "All submitted pages". In my scenario, I have no issues whatsoever for the submitted pages. Submitted pages come from your sitemaps, meaning the map of your website that you give Google when you use XML sitemaps.

So now let's go back to "All known pages" so that I can create this tutorial for you. In my scenario, the "Blocked by robots.txt" report is giving me some example URLs, so let's press on one. On the right-hand side we have "Test robots.txt blocking", so let's press on that. If you're not able to use this legacy tool, then check out rank your YouTube channel video session th
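If the legacy tester mentioned in the video is not available, a similar check can be approximated locally. The following is a minimal sketch using Python's standard `urllib.robotparser`; the robots.txt content, the `example.com` URLs, and the `is_blocked` helper are hypothetical placeholders, and Python's parser does not replicate every detail of how Googlebot interprets rules (wildcard patterns, for instance), so treat the result as a first indication rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; paste in your own site's file instead.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_blocked(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given rules disallow user_agent from fetching url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is needed.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Hypothetical example URLs, standing in for the ones Search Console reports.
    for url in (
        "https://example.com/private/report.html",
        "https://example.com/blog/post.html",
    ):
        status = "blocked" if is_blocked(ROBOTS_TXT, url) else "allowed"
        print(f"{url}: {status}")
```

Running the check against each example URL from the report shows which ones match a `Disallow` rule, which is the rule to review or remove if the page should be indexed.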

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
