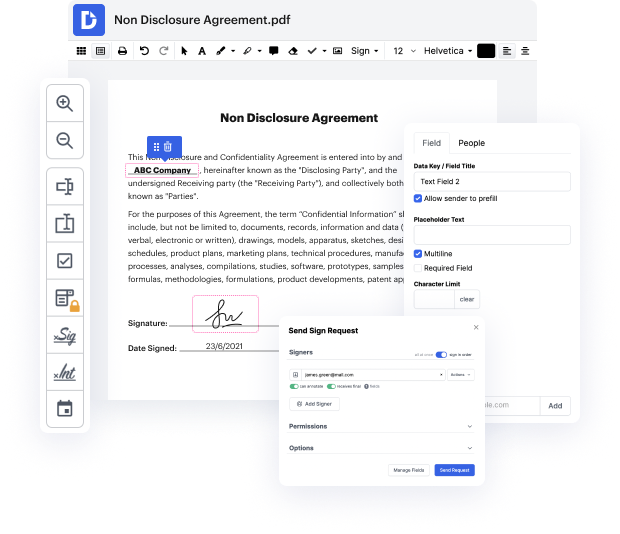

Have you ever had trouble modifying a CSV document on the go? DocHub has a solution for that: a cloud editor you can access from any internet-connected device. It lets you change URLs in CSV files quickly, whenever you need to.

DocHub has powerful functionality for making any changes you want to your paperwork, and its interface is simple enough that the entire process, from beginning to end, takes only a few clicks.

Once you finish adjusting and sharing, you can save your updated CSV file to your device or to the cloud, either as is or with an Audit Trail that records all alterations applied. You can also keep your paperwork in its original version or convert it into a multi-use template. Accomplish any document management task from anywhere with DocHub. Sign up today!

Hello everybody. As you can see, today we are going to scrape a list of URLs from a CSV. My subscriber wants to extract the text from all of the pages from all of the sites. He says "all data", but he's actually after all of the text: "How can I extract all the pages and subpages from the list of URLs in the CSV? I've found a method, but that is only working for a home page. Can anyone recode and tell me how to extract full data from a website, as we can't use XPath for each URL?" I actually provided him with this originally, and with Beautiful Soup it worked fine, not a problem. The issue was that he wanted the text from all of the pages and not just the first page. So what I've done instead is the other thing; I cannot actually come up with another idea. If you'd like to see that, this is the list of URLs. I don't want to show them too closely, to sort of give the game away, but we've got 469 URLs. I've actually been a bit crafty and used Vim to strip of
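The task described in the transcript (read URLs from a CSV, then collect text from every page of each site rather than only the home page) could be sketched roughly as follows. This is not the code from the video, which is cut off before it is shown; it is a minimal illustration that swaps Beautiful Soup for Python's standard-library `html.parser` so that it runs without extra installs. The names `PageParser`, `read_urls`, `crawl_site`, and `fetch`, the `max_pages` limit, and the assumption that the URL sits in the first CSV column are all hypothetical.

```python
import csv
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse
from urllib.request import urlopen


class PageParser(HTMLParser):
    """Collects visible text and link targets from one HTML page."""

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.links = []
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.text_parts.append(data.strip())


def fetch(url):
    """Download a page as text (no retries, rate limiting, or robots.txt checks)."""
    with urlopen(url, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


def read_urls(lines, column=0):
    """Read one URL per CSV row; the column index is an assumption."""
    rows = csv.reader(lines)
    return [r[column].strip() for r in rows if len(r) > column and r[column].strip()]


def crawl_site(start_url, fetch_page=fetch, max_pages=50):
    """Breadth-first crawl of pages on the same host; returns {url: text}."""
    host = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}
    pages = {}
    while queue and len(pages) < max_pages:
        url = queue.popleft()
        try:
            html = fetch_page(url)
        except (OSError, ValueError):
            continue  # skip pages that cannot be downloaded
        parser = PageParser()
        parser.feed(html)
        pages[url] = " ".join(parser.text_parts)
        for href in parser.links:
            link = urldefrag(urljoin(url, href)).url  # absolute URL, no #fragment
            if urlparse(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append(link)
    return pages
```

With a hypothetical file `urls.csv`, usage might look like `with open("urls.csv", newline="") as f: urls = read_urls(f)`, followed by `crawl_site(url)` for each entry. Following links only within the starting host is one way to reach subpages without writing an XPath per site; a real crawl of several hundred sites would also want politeness delays and error logging.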

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
