Today’s document editing market is enormous, so finding a solution that meets your needs and your price-quality expectations can take time and effort. There’s no need to waste time browsing the web for a versatile yet easy-to-use editor to tack a URL in a CSV file. DocHub is at your disposal whenever you need it.

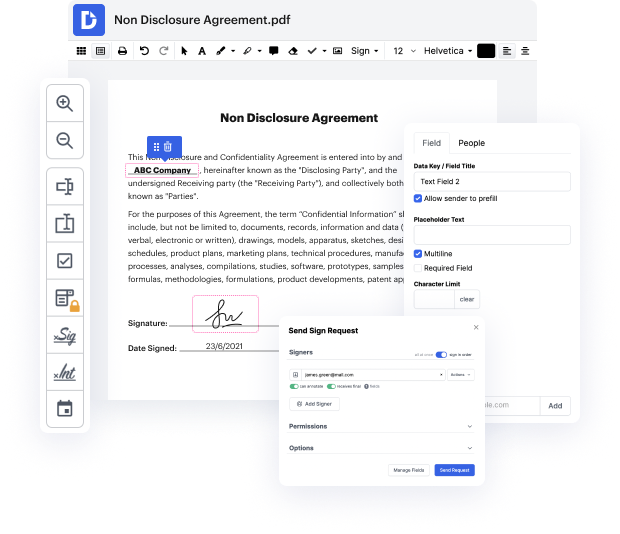

DocHub is a globally known online document editor trusted by millions. It can fulfill almost any user’s demand and meets all required security and compliance certifications to guarantee your data is well protected while you change your CSV file. With a rich and intuitive interface offered at an affordable price, DocHub is one of the most beneficial choices out there for optimized document management.

DocHub provides many other capabilities for effective document editing. For instance, you can convert your form into a multi-use template after editing or create a template from scratch. Explore all of DocHub’s features now!

Hello everybody. As you can see, today we are going to scrape a list of URLs from a CSV. My subscriber wants to extract the text from all of the pages from all of the sites. He says "all data", but he's actually after all of the text: "How can I extract all the pages and sub-pages from the list of URLs in the CSV? I've found a method, but that is only working for a home page. Can anyone recode and tell me how to extract full data from a website, as we can't use XPath for each URL?" I actually provided him with this originally, and with Beautiful Soup it worked fine, not a problem. The issue was that he wanted the text from all of the pages and not just the first page. So what I've done instead is another thing; I could not actually come up with another idea. So if you'd like to see that, this is the list of URLs. I don't want to show them too closely, to sort of give the game away, but we've got 469 URLs. I've actually been a bit crafty and used Vim to strip of
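The idea in the transcript (read URLs from a CSV, then collect text from every page of each site rather than just the home page) can be sketched roughly as below. This is not the presenter's code: it swaps Beautiful Soup for Python's standard-library `html.parser` so that it runs without extra installs, and the names `TextAndLinks`, `read_urls`, `fetch`, `crawl_site`, and the `max_pages` limit are all hypothetical. It also assumes the CSV has no header row and keeps the URL in its first column.

```python
import csv
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse
from urllib.request import urlopen


class TextAndLinks(HTMLParser):
    """Collect visible text and href values from one HTML page."""

    def __init__(self):
        super().__init__()
        self.text, self.links, self._skip = [], [], 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1  # ignore code and CSS, not visible text
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.text.append(data.strip())


def read_urls(csv_file):
    """Return the first column of every non-empty row (assumes no header)."""
    return [row[0].strip() for row in csv.reader(csv_file) if row and row[0].strip()]


def fetch(url):
    """Download one page as text (hypothetical default fetcher)."""
    with urlopen(url, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


def crawl_site(start_url, fetch=fetch, max_pages=50):
    """Breadth-first crawl of same-domain pages; returns {url: page text}."""
    domain = urlparse(start_url).netloc
    queue, seen, pages = deque([start_url]), {start_url}, {}
    while queue and len(pages) < max_pages:
        url = queue.popleft()
        try:
            html = fetch(url)
        except Exception:
            continue  # skip pages that fail to load
        parser = TextAndLinks()
        parser.feed(html)
        pages[url] = " ".join(parser.text)
        for href in parser.links:
            link = urldefrag(urljoin(url, href)).url  # absolute URL, no #fragment
            if urlparse(link).netloc == domain and link not in seen:
                seen.add(link)
                queue.append(link)
    return pages
```

A possible way to use it is to open the CSV with `open("urls.csv", newline="")` (the filename is a placeholder), pass the file to `read_urls`, and call `crawl_site` on each URL. Following only same-domain links and capping the page count keeps the crawl from wandering off the listed sites; a real run would probably also want delays between requests and a check of each site's robots.txt.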

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
