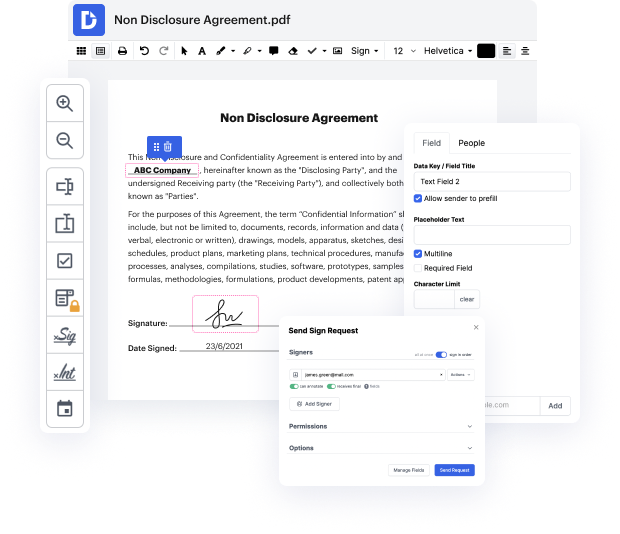

There are many document editing solutions on the market, but only a few are compatible with all file types. Others are versatile yet burdensome to use. DocHub addresses both issues with its cloud-based editor, which offers rich capabilities for completing your document management tasks efficiently. If you need to quickly revise a token in a text file, DocHub is the best choice for you!

Our process is very easy: you upload your text file to our editor → it instantly converts the file into an editable format → you apply all required adjustments and update it professionally. You only need a couple of moments to get your paperwork done.

When all alterations are applied, you can transform your paperwork into a multi-usable template. You just need to go to our editor’s left-side Menu and click on Actions → Convert to Template. You’ll find your paperwork stored in a separate folder in your Dashboard, saving you time the next time you need the same template. Try DocHub today!

When we're building NLP systems, the input is not words or even sentences, but rather just sequences of characters. Take this example from Pride and Prejudice. If we were to just split this by spaces, we would get this word sequence, where we have three instances of "I" that differ because punctuation is still attached. So we perform tokenization, which converts a sequence of characters into a sequence of tokens. When using a standard tokenizer on this text, we get this sequence, which has separated punctuation from words and also split the contraction "I'm" into "I" and "'m". So now our three instances of "I" look the same. Most tokenizers are rule-based, manually designed by speakers of a language, but there are different tokenization conventions. One difference in English is how contractions are handled. For example, here's how two tokenization conventions look for a few English contractions. Neither seems perfect: "don't" and "aren't" are maybe better handled by the Penn Treebank convention, because the words "do" and "are" are separate wor
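The contrast between splitting on spaces and rule-based tokenization can be sketched in a few lines of Python. This is a minimal illustration, not the tokenizer used in the video: the sample sentence is made up (it is not the Pride and Prejudice passage), the function names `whitespace_split` and `tokenize` are hypothetical, and the regular expression only approximates the convention, commonly associated with the Penn Treebank, of splitting "don't" into "do" and "n't" and "I'm" into "I" and "'m".

```python
import re

# A small rule-based tokenizer (illustrative only; handles lowercase
# English clitics and single-character punctuation).
TOKEN_RE = re.compile(
    r"""
    [A-Za-z]+(?=n't\b)        # word stem directly before n't (do + n't)
  | n't\b                     # the negation clitic itself
  | '(?:m|s|re|ve|ll|d)\b     # other clitics: 'm, 's, 're, 've, 'll, 'd
  | [A-Za-z]+                 # ordinary word
  | \d+                       # run of digits
  | [^\sA-Za-z\d]             # any single punctuation character
    """,
    re.VERBOSE,
)


def whitespace_split(text):
    """Naive baseline: punctuation stays attached to neighbouring words."""
    return text.split()


def tokenize(text):
    """Separate punctuation from words and split contractions."""
    return TOKEN_RE.findall(text)


# Hypothetical sample sentence with three occurrences of "I".
sample = 'I said, "I know I\'m late, but don\'t worry."'

print(whitespace_split(sample))
print(tokenize(sample))
```

With whitespace splitting, the three occurrences of "I" surface as three different strings (`I`, `"I`, and `I'm`); after tokenization each one is the same token `I`, which is the effect the transcript describes.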

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
