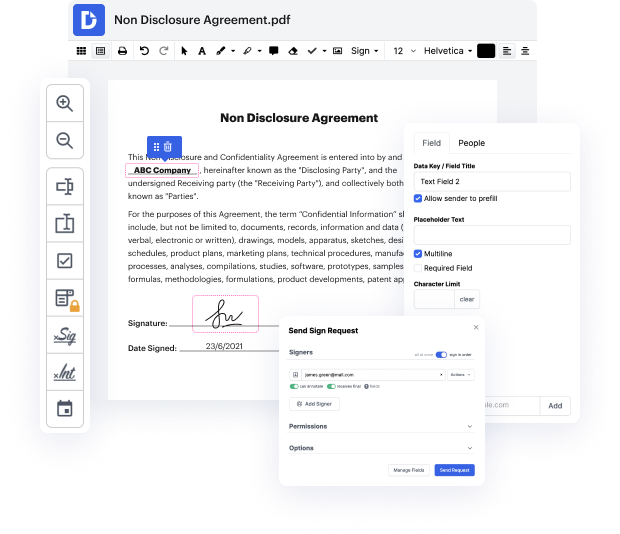

Searching for a specialized tool that handles particular formats can be time-consuming. Despite the huge number of online editors available, not all of them support the Tiff format, and fewer still let you make changes to your files. To make things worse, not all of them provide the security you need to protect your devices and paperwork. DocHub is a perfect answer to these challenges.

DocHub is a popular online solution that covers all of your document editing requirements and safeguards your work with bank-level data protection. It supports various formats, including Tiff, and lets you modify such documents quickly and easily through a rich, user-friendly interface. Our tool complies with essential security and privacy standards, such as GDPR, CCPA, PCI DSS, and the Google Security Assessment, and we continue to strengthen its compliance to give you the best possible experience. With everything it offers, DocHub is the most trustworthy way to revise a cross in a Tiff file and manage all of your personal and business paperwork, however sensitive it is.

After you complete all of your adjustments, you can set a password on your updated Tiff to make sure that only authorized recipients can open it. You can also save your paperwork with a detailed Audit Trail that shows who made which edits and when. Choose DocHub for any paperwork that you need to edit safely and securely. Subscribe now!

If you made a neural network on the surface of Mars, you'd be a long, long way away from me. That's the truth. StatQuest! Hello, I'm Josh Starmer and welcome to StatQuest. Today we're going to talk about Neural Networks Part 6: Cross Entropy. Note: this StatQuest assumes that you already understand the main ideas behind neural networks, the main ideas behind backpropagation, and softmax and argmax. If not, check out the Quests; the links are in the description below. Now, before we get started with cross entropy, let me remind you that in the StatQuest on Backpropagation Main Ideas, we had a simple neural network with a single output that, in theory, could give us any output value. In cases like this, we commonly use the sum of the squared residuals to determine how well the neural network fits the data. BAM! Now, when we have a neural network with multiple output values, we often run the data through argmax to make the output easy to interpret. But because argmax has a terrible derivative, we can't use it for
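To make the transcript's two contrasts concrete (the sum of squared residuals for a single output, and argmax versus softmax for multiple outputs), here is a minimal Python sketch. It assumes plain lists of floats; the function names and all input values are hypothetical and chosen only for illustration, and a central finite difference stands in for an analytical derivative. It shows why argmax's derivative is unhelpful: nudging an input leaves the argmax output unchanged, so its slope is 0, while softmax responds smoothly.

```python
import math


def sum_squared_residuals(observed, predicted):
    """Sum of the squared residuals between observed and predicted values."""
    return sum((o - p) ** 2 for o, p in zip(observed, predicted))


def argmax(raw_outputs):
    """Set the largest raw output to 1.0 and every other output to 0.0."""
    best = max(range(len(raw_outputs)), key=lambda i: raw_outputs[i])
    return [1.0 if i == best else 0.0 for i in range(len(raw_outputs))]


def softmax(raw_outputs):
    """Turn raw outputs into positive values that sum to 1.

    The maximum is subtracted before exponentiating to avoid overflow;
    this does not change the result.
    """
    m = max(raw_outputs)
    exps = [math.exp(x - m) for x in raw_outputs]
    total = sum(exps)
    return [e / total for e in exps]


def numeric_slope(f, raw_outputs, i, j, h=1e-6):
    """Central-difference estimate of d f(raw)[j] / d raw[i] (hypothetical helper)."""
    up = list(raw_outputs)
    up[i] += h
    down = list(raw_outputs)
    down[i] -= h
    return (f(up)[j] - f(down)[j]) / (2 * h)


# Hypothetical raw output values from a network with three outputs.
raw = [1.43, -0.40, 0.23]

print(sum_squared_residuals([1.0, 0.0, 1.0], [0.9, 0.2, 0.7]))  # about 0.14
print(argmax(raw))                      # [1.0, 0.0, 0.0]
print(softmax(raw))                     # three positive values summing to 1
print(numeric_slope(argmax, raw, 0, 0))  # 0.0: argmax gives no gradient
print(numeric_slope(softmax, raw, 0, 0))  # positive: softmax has a usable slope
```

For softmax, the slope printed last should match the analytical value p₀(1 − p₀), where p₀ is the first softmax output. Backpropagation can use that slope, whereas argmax's zero slope gives it nothing to work with.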

At DocHub, your data security is our priority. We follow HIPAA, SOC 2, GDPR, and other standards, so you can work on your documents with confidence.