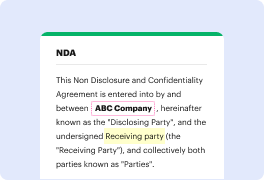

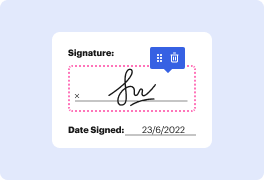

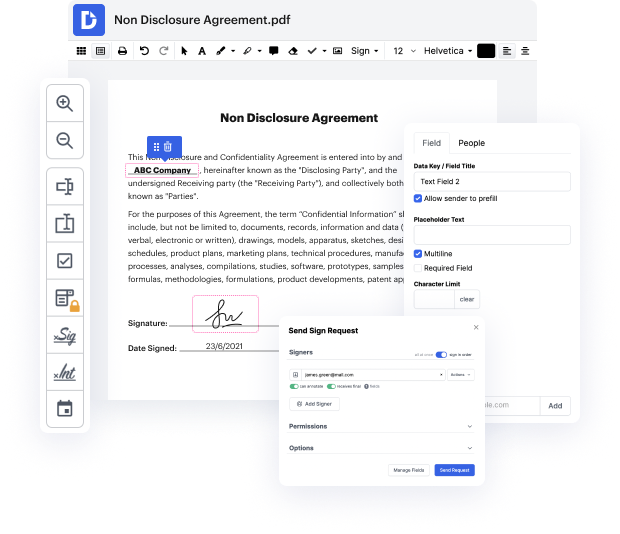

DocHub is an online platform for editing, signing, and distributing documents. Its intuitive interface lets users manage their PDFs for free, and its Google Workspace integration lets you import and export documents without leaving your existing workflow. This guide walks you through the steps to re-edit data in a PDF on your desktop.

Start re-editing your PDFs today with DocHub and experience the convenience of efficient document management!

Reddit has begun charging for access to its public API, prompting many subreddits to go private. Despite this, Reddit remains an important source for AI training data, data collection, and market insights. To scrape Reddit in 2023, follow a few guidelines: check the site's robots.txt, comply with GDPR, and avoid copyrighted material. Respect rate limits so that your requests do not disrupt the website's functionality.
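The robots.txt check and rate limiting mentioned above can be sketched in Python with the standard library's `urllib.robotparser`. This is a minimal illustration, not a complete scraper: the robots.txt content, the `demo-bot` user agent, the `example.com` URLs, and the helper names are all hypothetical, and the `fetch` callback is left for you to supply.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs offline.
# In practice you would download the target site's real robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed_urls(parser: RobotFileParser, user_agent: str, urls: list[str]) -> list[str]:
    """Keep only the URLs that robots.txt permits for this user agent."""
    return [url for url in urls if parser.can_fetch(user_agent, url)]


def polite_delay(parser: RobotFileParser, user_agent: str, default: float = 1.0) -> float:
    """Use the site's Crawl-delay if it declares one, else a default pause."""
    delay = parser.crawl_delay(user_agent)
    return float(delay) if delay is not None else default


def crawl(parser: RobotFileParser, user_agent: str, urls: list[str], fetch) -> list:
    """Call fetch(url) for each permitted URL, pausing between requests."""
    results = []
    pause = polite_delay(parser, user_agent)
    for url in allowed_urls(parser, user_agent, urls):
        results.append(fetch(url))  # fetch is caller-supplied (assumption)
        time.sleep(pause)           # throttle to avoid disrupting the site
    return results
```

Passing the fetch function in as a parameter keeps the throttling logic separate from whichever HTTP client you choose. Checking robots.txt and pausing between requests does not by itself address GDPR or copyright obligations, which depend on what data you collect and how you use it.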

At DocHub, your data security is our priority. We follow HIPAA, SOC 2, GDPR, and other standards, so you can work on your documents with confidence.
