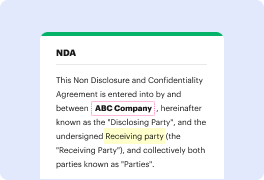

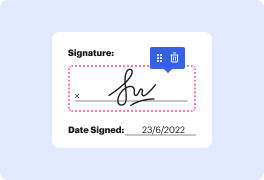

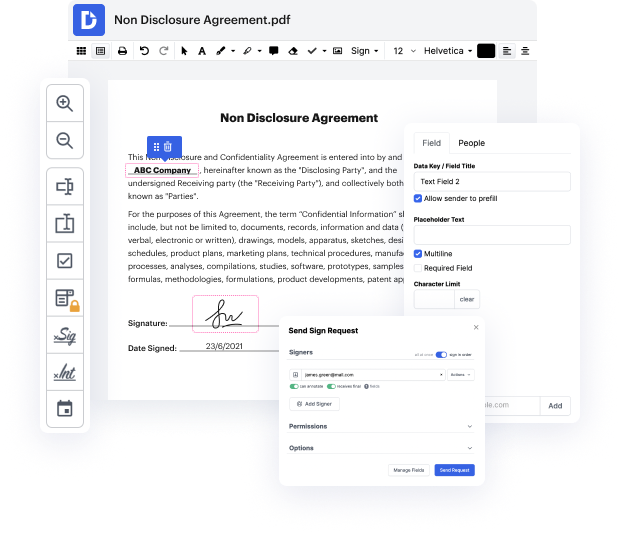

DocHub is an innovative platform that simplifies the process of document management, providing users with powerful features for editing, signing, and sharing documents online for free. With seamless integration with Google Workspace, our editor allows easy import, export, and modification of documents directly from Google apps, ensuring efficient workflows and hassle-free business processes.

Start using our platform today to enhance your document editing experience!

Reddit has faced challenges recently: its public API has been monetized, and many subreddits have gone private. It nevertheless remains a crucial platform for AI training and research. When scraping Reddit in 2023, follow established guidelines: check the site's robots.txt file and comply with GDPR. Avoid collecting copyrighted material, and do not use the data for commercial purposes. Respect rate limits so that scraping does not disrupt the site's operation.
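The robots.txt check and rate limiting mentioned above can be sketched in Python using only the standard library. This is a minimal illustration under stated assumptions, not a complete scraper: the user agent string, the helper names (`build_parser`, `allowed_urls`, `polite_delay`, `Throttle`), and the 2-second fallback delay are all hypothetical choices, and the sketch parses robots.txt text that you have already obtained rather than fetching anything itself.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical user agent; use one that identifies your own project.
USER_AGENT = "example-research-bot"


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed_urls(parser: RobotFileParser, urls, user_agent: str = USER_AGENT):
    """Keep only the URLs that robots.txt permits for this user agent."""
    return [url for url in urls if parser.can_fetch(user_agent, url)]


def polite_delay(parser: RobotFileParser, user_agent: str = USER_AGENT,
                 default: float = 2.0) -> float:
    """Use the site's Crawl-delay if it declares one, else an assumed default."""
    delay = parser.crawl_delay(user_agent)
    return float(delay) if delay is not None else default


class Throttle:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval: float):
        self.min_interval = min_interval
        self._last = None  # time of the previous request, if any

    def wait(self) -> None:
        now = time.monotonic()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                time.sleep(remaining)
        self._last = time.monotonic()
```

In use, you would build the parser once per site, filter candidate URLs through `allowed_urls`, and call `Throttle(polite_delay(parser)).wait()` before each request. The sketch covers only robots.txt and request pacing; GDPR compliance, copyright, and the site's terms of use still have to be assessed separately.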

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
