When your day-to-day work includes a lot of document editing, you know that every document format requires its own approach and sometimes its own software. Handling a seemingly simple Excel file can grind the entire process to a halt, especially if you are editing with inadequate software. To prevent such difficulties, get an editor that can cover your needs regardless of file format and omit information in Excel with zero roadblocks.

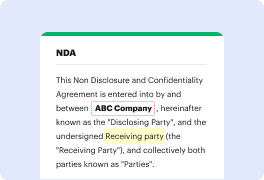

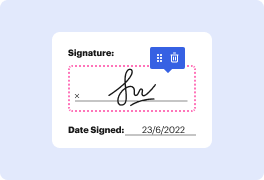

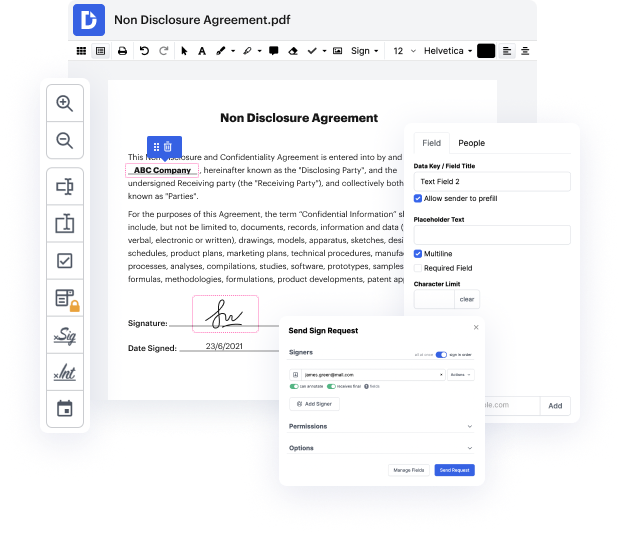

With DocHub, you work with an editing multitool for any situation or document type. Spend less time navigating your old software’s functionality and learn our intuitive user interface as you work. DocHub is a sleek online editing platform that handles all of your document processing needs for any file type, including Excel. Open it and go straight to productivity; no prior training or guide reading is needed to enjoy the benefits DocHub brings to document management. Begin by taking a few moments to create your account now.

See improvements in your document processing right after you open your DocHub profile. Save time on editing with a single platform that helps you work more efficiently with any document format you encounter.

Today we're going to take a look at a very common task when it comes to cleaning data, and it's also a very common interview question that you might get if you're applying for a data or financial analyst type of job: how can you remove duplicates in your data? I'm going to show you three methods. It's important that you understand the advantages and disadvantages of the different methods and why one of these methods might return a different result from the others. Let's take a look.

Okay, so I have this table with sales agent, region, and sales value. I want to remove the duplicates that occur in this table, but first of all, what are the duplicates? Well, if we take a look at this row, for example, and take a look at this one, is this a duplicate? No, right? Because the sales value is different. But what about this one and this one? These are duplicates. What I want to happen is that every other occurrence of this line is removed. I just keep it once in the end re
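The definition of a duplicate used in the transcript (a row counts as a duplicate only when every column matches an earlier row, and the first occurrence is kept) can be sketched outside Excel as well. The Python snippet below is only an illustration of that rule, not one of the three methods from the video; the function name `remove_duplicate_rows` and the sample rows are hypothetical.

```python
def remove_duplicate_rows(rows):
    """Keep the first occurrence of each row and drop later exact repeats."""
    seen = set()      # rows already encountered
    unique = []       # rows to keep, in their original order
    for row in rows:
        if row not in seen:
            seen.add(row)
            unique.append(row)
    return unique


# Hypothetical sample data: (sales agent, region, sales value)
sales = [
    ("Ann", "North", 100),
    ("Ann", "North", 250),  # same agent and region, different value: not a duplicate
    ("Bob", "South", 300),
    ("Bob", "South", 300),  # exact repeat of the previous row: duplicate
    ("Ann", "North", 100),  # exact repeat of the first row: duplicate
]

deduplicated = remove_duplicate_rows(sales)
for row in deduplicated:
    print(row)
```

Because whole rows are compared, two rows that differ only in sales value are both kept, which matches the example discussed in the transcript.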

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
