Finding a professional tool that handles a particular format can be time-consuming. Despite the huge number of online editors available, not all of them support the CSV format, and certainly not all of them let you make adjustments to your files. To make matters worse, not all of them provide the security you need to protect your devices and documents. DocHub is an excellent answer to these challenges.

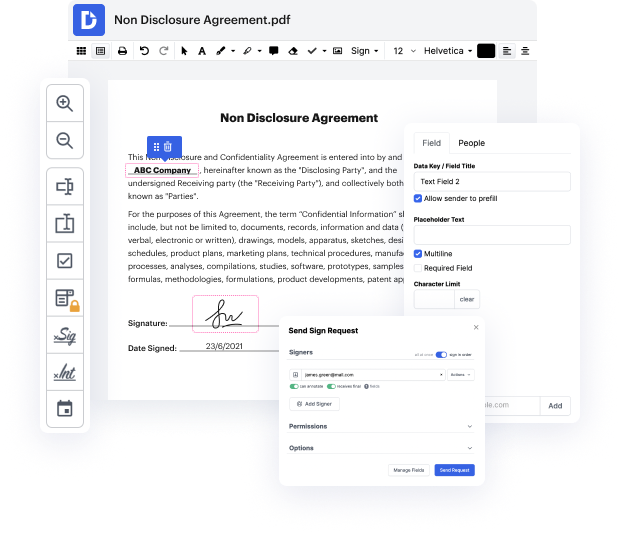

DocHub is a well-known online solution that covers all of your document editing needs and safeguards your work with bank-level data protection. It works with various formats, such as CSV, and allows you to edit such files easily and quickly with a rich and intuitive interface. Our tool meets essential security regulations, such as GDPR, CCPA, PCI DSS, and Google Security Assessment, and keeps enhancing its compliance to provide the best user experience. With everything it provides, DocHub is the most reputable way to omit a checkmark in a CSV file and manage all of your personal and business documentation, regardless of how sensitive it is.

After you complete all of your changes, you can set a password on your edited CSV file to ensure that only authorized recipients can work with it. You can also save your document with a detailed Audit Trail to find out who made which edits and at what time. Select DocHub for any documentation that you need to adjust safely. Subscribe now!

Today we're going to take a look at a very common task when it comes to cleaning data, and it's also a very common interview question that you might get if you're applying for a data or financial analyst type of job: how can you remove duplicates in your data? I'm going to show you three methods. It's important that you understand the advantages and disadvantages of the different methods, and why one of these methods might return a different result from the other ones. Let's take a look.

Okay, so I have this table with sales agent, region, and sales value. I want to remove the duplicates that occur in this table, but first of all, what are the duplicates? Well, if we take a look at this row, for example, and take a look at this one, is this a duplicate? No, right? Because the sales value is different. But what about this one and this one? These are duplicates. What I want to happen is that every other occurrence of this line is removed. I just keep it once in the end res
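The transcript is cut off before it shows any of its three methods, so the snippet below is not one of them. It is only a minimal Python sketch of the stated goal: treat a row as a duplicate only when every column matches, and keep the first occurrence. The sample data, the column headers, and the helper name `drop_duplicate_rows` are all hypothetical and chosen for illustration.

```python
import csv
import io


def drop_duplicate_rows(rows):
    """Return rows with exact duplicates removed, keeping the first occurrence."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(row)  # a row is a duplicate only if ALL columns match
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


# Hypothetical sample data; not taken from the video.
SAMPLE_CSV = """Sales Agent,Region,Sales Value
Ana,North,100
Ben,South,200
Ana,North,150
Ben,South,200
"""

reader = csv.reader(io.StringIO(SAMPLE_CSV))
header = next(reader)          # keep the header row out of the comparison
data = list(reader)
deduped = drop_duplicate_rows(data)

for row in [header] + deduped:
    print(",".join(row))
```

In this sample, the two `Ana,North` rows are both kept because their sales values differ, while the second `Ben,South,200` row is dropped. Comparing on a subset of columns instead of the whole row would give a different result, which is one reason different de-duplication approaches can disagree.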

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
