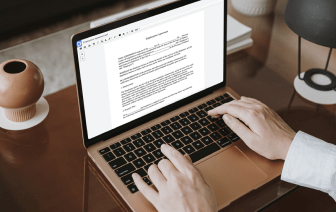

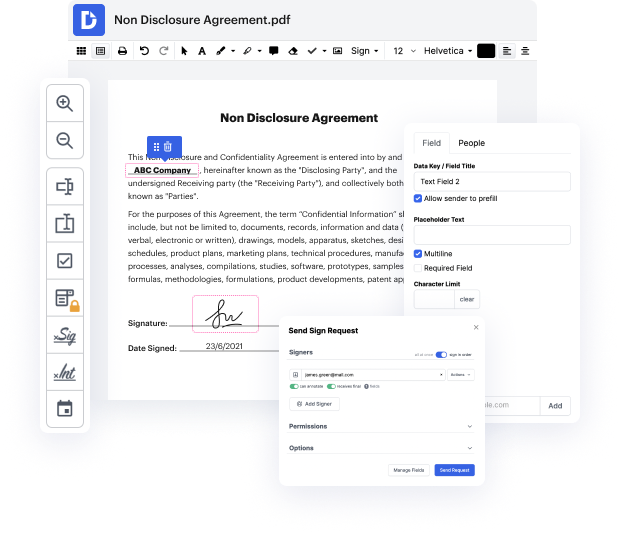

Searching for a specialized tool that handles particular formats can be time-consuming. Despite the vast number of online editors available, not all of them support the EZW format, and even fewer let you make changes to your files. To make matters worse, not all of them provide the security you need to protect your devices and paperwork. DocHub addresses these challenges.

DocHub is a popular online solution that covers all of your document editing needs and safeguards your work with bank-level data protection. It works with various formats, including EZW, and helps you modify such documents quickly and easily through a rich, user-friendly interface. Our tool complies with important security standards and regulations, such as GDPR, CCPA, PCI DSS, and the Google Security Assessment, and keeps improving its compliance to guarantee the best user experience. With everything it provides, DocHub is the most reputable way to negate a checkmark in an EZW file and manage all of your personal and business paperwork, however sensitive it is.

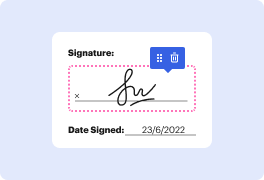

After you complete your modifications, you can set a password on your edited EZW file so that only authorized recipients can open it. You can also save your paperwork with a detailed Audit Trail to see who made which edits and when. Choose DocHub for any paperwork you need to edit securely. Sign up now!

This year we published the Massive Text Embedding Benchmark (MTEB), which was kind of a follow-up work that put things a bit more broadly in perspective. We collected different tasks where you can use embedding models: you can use them for clustering; for bitext mining, meaning finding sentences with the same meaning in different languages; for retrieval; for semantic textual similarity (STS); for summarization; for text classification; for pair classification; and for re-ranking. This is a project that also started a really long time ago. When I published the Sentence-BERT paper and the Sentence Transformers papers, they reported results on STS and SentEval, which were the de facto standard in embedding evaluation. But if you really used the model, it didn't perform that well, and the original model also didn't perform as well as the Universal Sentence Encoder, even though the benchmark on STS showed the opposite. And so over the years wi
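To make the bitext-mining task mentioned above concrete, here is a minimal sketch of the idea: each sentence is mapped to an embedding vector, and every source sentence is paired with the target-language sentence whose embedding has the highest cosine similarity. The helper names `cosine` and `mine_bitext` are hypothetical, and the three-dimensional vectors are hand-made stand-ins for real model output; this is not MTEB's evaluation code, which uses actual embedding models and its own scoring.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def mine_bitext(src: dict[str, list[float]],
                tgt: dict[str, list[float]]) -> dict[str, str]:
    """Pair each source sentence with its most similar target sentence."""
    return {
        s: max(tgt, key=lambda t: cosine(s_vec, tgt[t]))
        for s, s_vec in src.items()
    }


# Hypothetical toy embeddings; a real system would get these from a model.
english = {
    "The cat sleeps.": [0.9, 0.1, 0.0],
    "I like coffee.": [0.1, 0.8, 0.3],
}
german = {
    "Ich mag Kaffee.": [0.15, 0.75, 0.35],
    "Die Katze schläft.": [0.85, 0.15, 0.05],
}

mined = mine_bitext(english, german)
print(mined)
# {'The cat sleeps.': 'Die Katze schläft.', 'I like coffee.': 'Ich mag Kaffee.'}
```

The same cosine score is what STS-style evaluation typically compares against human similarity ratings, which is why a model can look strong on STS alone yet behave differently on tasks like retrieval or clustering.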