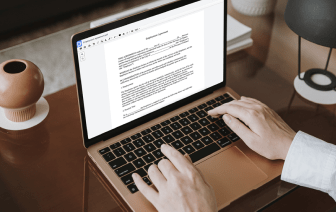

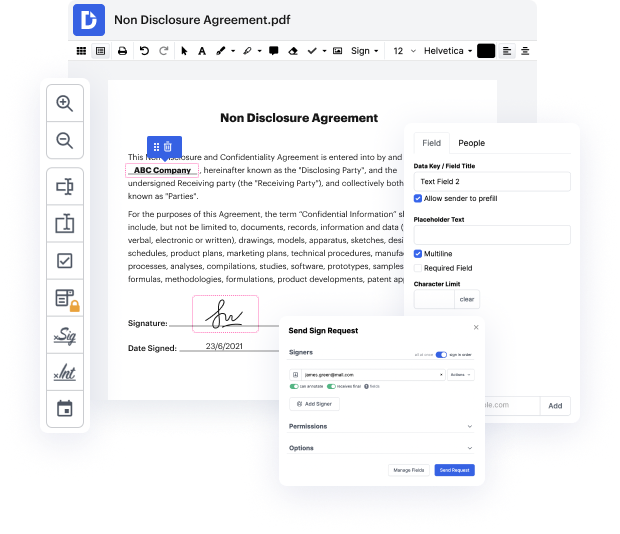

Finding a professional tool that handles a particular format can be time-consuming. Despite the large number of online editors available, not all of them support the DocBook format, and fewer still let you make changes to your files. To make things worse, not all of them give you the security you need to protect your devices and paperwork. DocHub is a great answer to these challenges.

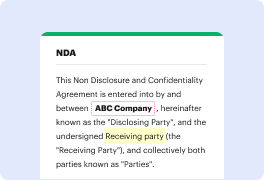

DocHub is a well-known online solution that covers your document editing requirements and safeguards your work with bank-level data protection. It works with a range of formats, including DocBook, and lets you modify such documents quickly through a rich, intuitive interface. Our tool meets key security standards, such as GDPR, CCPA, PCI DSS, and Google Security Assessment, and keeps strengthening its compliance to provide the best user experience. With everything it provides, DocHub is a reliable way to negate an arrow in a DocBook file and manage all of your personal and business paperwork, no matter how sensitive it is.

Once you complete all of your edits, you can set a password on your updated DocBook file so that only authorized recipients can open it. You can also save your document with a detailed Audit Trail to see who made which edits and when. Choose DocHub for any paperwork that you need to edit safely and securely. Sign up now!

I'm Peter Higgins, and I'm going to be talking about using the arrow and duckdb packages to wrangle bigger-than-RAM medical data sets of over 60 million rows, with a bit about data.table. The motivating problem was Open Payments data from the Centers for Medicare & Medicaid Services. I'm going to talk about the limits of R in speed and RAM, the arrow package, the duckdb package, and generally about wrangling very large data. And you can see this includes 12 million records per year and a total dollar value of 10.9 billion in 2021. I usually analyze smallish data sets, carefully collected and validated data of 500 to 1,000 rows, maybe 10,000 rows on a big project. But digital data, which we increasingly have access to from CMS or clinical data warehouses, can give us much bigger data, easily over 100 billion rows, often greater than 50 gigabytes, which doesn't fit in an email. But it also doesn't always work well in R, which was designed for data in RAM. The motivating problem that led me down this rabbit hol