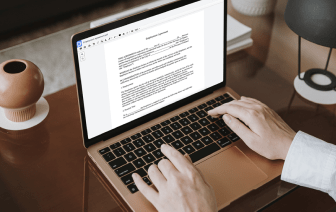

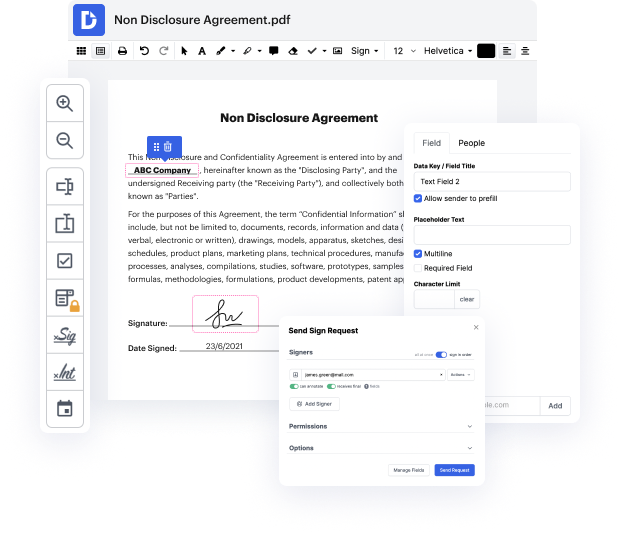

If you edit documents in different formats every day, the versatility of your document tools matters a lot. If your tools handle only some of the popular formats, you may find yourself switching between applications to link writing in LWP and manage other document formats. To get rid of the headache of document editing, choose a platform that easily handles any extension.

With DocHub, you do not need to focus on anything other than the actual document editing. You won’t need to juggle programs to work with various formats. It helps you revise your LWP files as easily as any other extension. Create, edit, and share LWP documents in one online editing platform that saves you time and improves your productivity. All you have to do is sign up for a free account at DocHub, which takes only a few minutes.

You won’t need to become an editing multitasker with DocHub. Its functionality is enough for speedy document editing, regardless of the format you need to revise. Start by registering a free account to see how straightforward document management can be with a tool designed specifically to meet your needs.

In today's short class we're going to take a look at some example Perl code, and we're going to write a web crawler using Perl. This is going to be just a very simple piece of code that's going to go to a website, download the raw HTML, iterate through that HTML, find the URLs, retrieve those URLs, and store them as a file. We're going to create a series of files, and in our initial iteration we're going to choose just about 10 or so websites, just so that we get to the end and we don't download everything. If you want to play along at home, you can of course download as many websites as you have disk space for. So we'll choose websites at random, and what we're going to write is a series of HTML files numbered 0.html, 1.html, 2.html, and so on, and then a map file that contains the number and the original URL.

So let's get started with the Perl code. We're going to write a program called web crawler dot pl. Here's our web crawler. We're going to start, as we've done before, with what's called the
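
The crawler described in the transcript can be sketched roughly as follows. This is a minimal sketch, not the instructor's code: the names `extract_urls`, `crawl`, `fetch_page`, and `map.txt` are hypothetical, the core module `HTTP::Tiny` is an assumed choice of HTTP client, and the regular expression is a simplification that only picks up absolute `http`/`https` links in quoted `href` attributes. It also visits links in the order they are found rather than at random.

```perl
#!/usr/bin/perl
use strict;
use warnings;
use HTTP::Tiny;    # core module since Perl 5.14 (assumed choice of client)

# Return the unique absolute http(s) URLs found in quoted href attributes.
# A regex is a simplification; a real HTML parser would be more robust.
sub extract_urls {
    my ($html) = @_;
    my %seen;
    return grep { !$seen{$_}++ }
        $html =~ m{href\s*=\s*["'](https?://[^"'\s>]+)["']}gi;
}

# Download one page; returns the body, or undef if the request failed.
sub fetch_page {
    my ($url) = @_;
    my $res = HTTP::Tiny->new( timeout => 10 )->get($url);
    return $res->{success} ? $res->{content} : undef;
}

# Crawl from $start, saving up to $max pages as 0.html, 1.html, ... in $dir,
# and writing one "number URL" line per saved page to $dir/map.txt.
# $fetch is a code reference so the download step can be swapped out.
sub crawl {
    my ( $start, $max, $dir, $fetch ) = @_;
    my @queue  = ($start);
    my %queued = ( $start => 1 );
    my $count  = 0;

    open my $map, '>', "$dir/map.txt" or die "Cannot write $dir/map.txt: $!";
    while ( @queue && $count < $max ) {
        my $url  = shift @queue;
        my $html = $fetch->($url);
        next unless defined $html;    # skip pages that could not be fetched

        open my $out, '>', "$dir/$count.html"
            or die "Cannot write $dir/$count.html: $!";
        print {$out} $html;
        close $out;

        print {$map} "$count $url\n";
        $count++;

        # Queue newly discovered URLs that have not been queued before.
        push @queue, grep { !$queued{$_}++ } extract_urls($html);
    }
    close $map;
    return $count;
}

# Run only when a starting URL is given on the command line.
crawl( $ARGV[0], 10, '.', \&fetch_page ) if @ARGV;
```

Run with a starting URL as the only argument, the script writes up to 10 numbered HTML files and `map.txt` into the current directory. Passing the fetch step as a code reference keeps the file-writing logic separate from the network, so it can be tried on canned HTML first.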