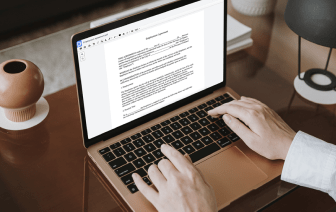

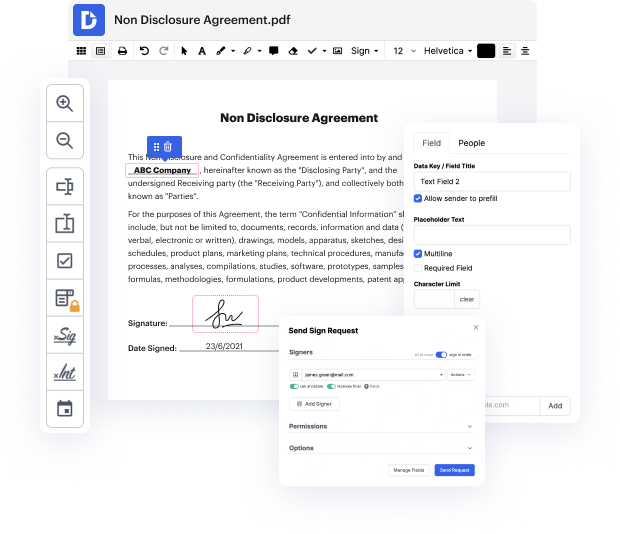

It is often hard to find a platform that covers all your organizational needs or gives you suitable tools for document generation and approval. Choosing software that includes the essential document generation tools and streamlines any process you have in mind is critical. Although PDF is the most popular format to work with, you need comprehensive software that can handle any available format, including LWP.

DocHub helps ensure that all your document generation requirements are taken care of. Edit, eSign, rotate, and merge your pages as needed with a click. Work with all formats, including LWP, successfully. Regardless of the format you start with, you can easily convert it into the format you need. Save the time you would otherwise spend requesting or searching for the correct file format.

With DocHub, you don’t need extra time to get accustomed to the interface and editing process. DocHub is intuitive, user-friendly software for anyone, even those without a tech background. Onboard your team and departments and improve file management across your organization for the long term. Include an inscription in LWP, create fillable forms, eSign your documents, and get things done with DocHub.

Take advantage of DocHub’s extensive feature list and work quickly with any file in any format, such as LWP. Save the time spent cobbling together third-party solutions and stick with all-in-one software to improve your daily processes. Start your free DocHub trial today.

In today’s short class, we’re going to take a look at some example Perl code, and we’re going to write a web crawler using Perl. This is going to be just a very simple piece of code that’s going to go to a website, download the raw HTML, iterate through that HTML and find the URLs, and retrieve those URLs and store them as a file. We’re going to create a series of files, and in our initial iteration we’re going to choose just about 10 or so websites, just so that we get to the end and we don’t download everything. If you want to play along at home, you can of course download as many websites as you have disk space for.

So we’ll choose websites at random, and what we’re going to write is a series of HTML files numbered 0.html, 1.html, 2.html, and so on, and then a map file that contains the number and the original URL. So let’s get started with the Perl code. We’re going to write a program called web crawler dot pl. Here’s our web crawler. We’re going to start, as we’ve done before, with what’s called the
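The transcript cuts off before any code appears, so the following is only a minimal sketch of the crawler it describes, not the presenter’s actual program. It assumes the core `HTTP::Tiny` module for downloads and a simple regex for finding links. The names `extract_urls`, `save_pages`, `fetch_url`, and the map file name `map.txt` are all hypothetical. It takes links in the order they appear rather than at random, and stops after 10 pages.

```perl
#!/usr/bin/perl
use strict;
use warnings;
use HTTP::Tiny;    # core module since Perl 5.14 (assumed; the video may use another)

# Pull absolute http(s) links out of raw HTML with a simple regex.
# A regex is not a real HTML parser; it is only enough for a sketch.
sub extract_urls {
    my ($html) = @_;
    my (%seen, @urls);
    while ($html =~ /href\s*=\s*["'](https?:\/\/[^"'\s>]+)["']/gi) {
        push @urls, $1 unless $seen{$1}++;    # keep first occurrence only
    }
    return @urls;
}

# Fetch each URL, write the body to N.html, and record "N URL" in map.txt.
# $fetch is a code ref (URL in, body or undef out) so the network step
# can be replaced, for example when trying this without a connection.
sub save_pages {
    my ($urls, $dir, $limit, $fetch) = @_;
    open my $map, '>', "$dir/map.txt" or die "Cannot write map file: $!";
    my $n = 0;
    for my $url (@$urls) {
        last if $n >= $limit;
        my $body = $fetch->($url);
        next unless defined $body;            # skip pages that failed to download
        open my $out, '>', "$dir/$n.html" or die "Cannot write $n.html: $!";
        print {$out} $body;
        close $out;
        print {$map} "$n $url\n";
        $n++;
    }
    close $map;
    return $n;                                # number of pages saved
}

# Download one page; returns undef on any HTTP or network failure.
sub fetch_url {
    my ($url) = @_;
    my $res = HTTP::Tiny->new(timeout => 10)->get($url);
    return $res->{success} ? $res->{content} : undef;
}

# Crawl only when a start URL is given on the command line.
if (my $start = shift @ARGV) {
    my $html = fetch_url($start);
    die "Could not fetch $start\n" unless defined $html;
    my $count = save_pages([ extract_urls($html) ], '.', 10, \&fetch_url);
    print "Saved $count pages\n";
}
```

Run with a start page as the only argument, for example `perl crawler.pl http://example.com/` (the script name here is a placeholder). The numbered files and `map.txt` are written to the current directory. Fetching `https` links with `HTTP::Tiny` additionally requires `IO::Socket::SSL` to be installed.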