Document editing is part of many professions and jobs, which is why the tools for it must be accessible and easy to use. A capable online editor can spare you a lot of headaches and save considerable time if you need to group payment papers.

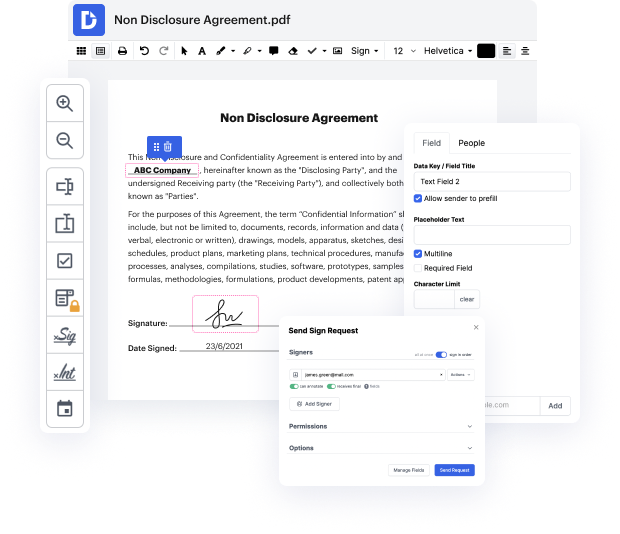

DocHub is a good example of a tool you can master in no time, with all the essential features at hand. You can start editing immediately after creating an account. The editor's user-friendly interface lets you locate and use any function right away. Experience the difference as soon as you open the DocHub editor to group payment papers.

Because it is an integral part of many workflows, document editing should remain simple. With DocHub, you can quickly find your way around the editor and make the required adjustments to your document without losing a minute.

Hi, I'm Mohammad Namvarpour. Today I want to explain the paper "Pay Attention to MLPs," published by the Google Research Brain Team. The paper claims that the self-attention blocks used in the transformer architecture aren't necessary for many applications, and it proposes the gMLP architecture, which achieves results comparable to transformers without using attention.

Transformers have been one of the most important architectural advances in deep learning in recent years, enabling many breakthroughs. They have revolutionized natural language processing and have also been applied in other fields, including computer vision. This figure shows the main components of the transformer model. If you're unfamiliar with them, I recommend watching my in-depth video on the paper "Attention Is All You Need," in which I go through the architecture in great detail. In any case, this architecture is primarily composed of two key components: self-attention and the feed-forward network.
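To make those two components concrete, here is a minimal plain-Python sketch. It assumes the formulation from "Attention Is All You Need": single-head scaled dot-product attention, softmax(QKᵀ/√d_k)·V, and a two-layer position-wise feed-forward net, max(0, xW₁ + b₁)W₂ + b₂. Multi-head projections, residual connections, layer normalization, and masking are deliberately omitted, and all helper names (`matmul`, `self_attention`, `feed_forward`, and so on) are hypothetical, chosen only for illustration.

```python
import math


def matmul(a, b):
    """Multiply an n x k matrix by a k x m matrix (lists of lists)."""
    return [
        [sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m):
    """Swap rows and columns of a matrix."""
    return [list(row) for row in zip(*m)]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    mx = max(row)
    exps = [math.exp(x - mx) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, wq, wk, wv):
    """Single-head scaled dot-product self-attention.

    x: one row per token; wq/wk/wv: projection matrices (assumed shapes d x d_k).
    Returns the attended outputs and the attention weight matrix.
    """
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    d_k = len(wk[0])
    scores = matmul(q, transpose(k))  # token-to-token similarity
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights  # each output mixes all tokens


def feed_forward(x, w1, b1, w2, b2):
    """Position-wise FFN: max(0, x W1 + b1) W2 + b2, applied to each token."""
    hidden = [[max(0.0, h + b) for h, b in zip(row, b1)] for row in matmul(x, w1)]
    return [[o + b for o, b in zip(row, b2)] for row in matmul(hidden, w2)]


if __name__ == "__main__":
    ident = [[1.0, 0.0], [0.0, 1.0]]
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    attended, attn = self_attention(tokens, ident, ident, ident)
    print(attn)  # each row sums to 1
    print(feed_forward(attended, ident, [0.0, 0.0], ident, [0.0, 0.0]))
```

The split mirrors the architecture described above: the attention step is the only place where tokens exchange information (it is the token-mixing part the paper argues can be replaced), while the feed-forward step transforms each token independently.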

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
