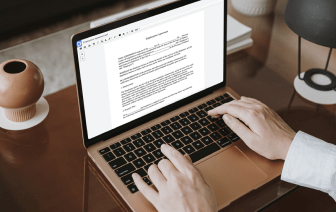

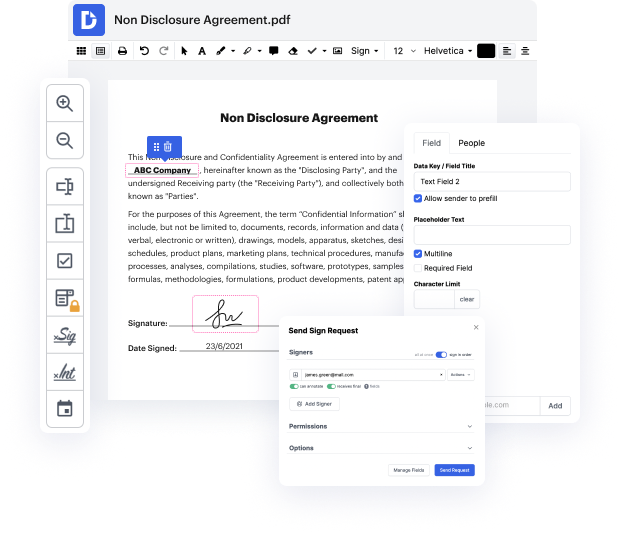

It is often hard to find a platform that covers all your corporate needs or provides the right tools for document creation and approval. It is critical to choose software that includes the key document creation tools and simplifies the processes you have in mind. Although PDF is the most in-demand file format, you need a comprehensive platform that can handle any file format, including PAP.

DocHub takes care of all your document creation needs. Modify, eSign, rotate, and merge your pages as needed with a mouse click. Work with all formats, including PAP, quickly and reliably. Whatever file format you start with, you can convert it into the format you need. Save the time you would otherwise spend requesting or searching for the right document format.

With DocHub, you don’t need extra time to get used to our user interface and editing process. DocHub is easy to use for everyone, even people without a technical background. Onboard your team and departments and improve document administration across your business for good. Finish token in PAP, make fillable forms, eSign your documents, and get things done with DocHub.

Take advantage of DocHub’s extensive feature set and work quickly on any document in any file format, including PAP. Save the time you would spend cobbling together third-party tools, and stick with all-in-one software to streamline your everyday processes. Begin your free DocHub trial today.

Hello everyone, today we're taking a look at ByT5: Towards a Token-Free Future with Pre-trained Byte-to-Byte Models. The majority of existing pre-trained language models operate at the subword level, meaning that they process sequences of tokens representing subword units. For example, take a sentence such as "In Japan, cloisonné enamels are well known as shippō-yaki." A typical pre-processing model such as SentencePiece will split the text into subword units: words that occur frequently in the dataset, such as "In" and "Japan", are each represented as a single unit, whereas rarer words such as "cloisonné" are represented, in this case, with three tokens, or three subword units. The model is then trained to process its inputs and outputs as sequences of those subword units. This has been very effective; however, it has a number of limitations. Practically, most nota
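The contrast described in the transcript (frequent words kept whole, rare words split into several pieces, versus the byte-level input named in the ByT5 title) can be illustrated with a small sketch. It assumes a hand-made toy vocabulary and uses greedy longest-match segmentation, which is a simplification and not SentencePiece's actual algorithm (SentencePiece trains a unigram or BPE model). The byte-level function maps each UTF-8 byte to an ID shifted by 3 to leave room for special tokens, mirroring how the Hugging Face ByT5 tokenizer reserves IDs; treat that offset as an assumption here. All names (`TOY_VOCAB`, `greedy_subword_tokenize`, `byte_ids`) are hypothetical.

```python
# Toy contrast between subword and byte-level input representations.
# TOY_VOCAB, greedy_subword_tokenize, and byte_ids are hypothetical names
# for illustration; this is not SentencePiece or the ByT5 codebase.

# "▁" marks the start of a word, as in SentencePiece-style output.
# Frequent words get whole-word entries; the rarer "cloisonné" is only
# covered by smaller pieces.
TOY_VOCAB: set[str] = {
    "▁In", "▁Japan", "▁is", "▁well", "▁known",
    "▁cl", "ois", "onné",
}


def greedy_subword_tokenize(text: str, vocab: set[str]) -> list[str]:
    """Split each whitespace-separated word by greedy longest match."""
    pieces: list[str] = []
    for word in text.split():
        word = "▁" + word
        start = 0
        while start < len(word):
            # Try the longest remaining substring first, then shrink it
            # until it is in the vocabulary; a single character is kept
            # as a fallback even if it is not in the vocabulary.
            end = len(word)
            while end > start + 1 and word[start:end] not in vocab:
                end -= 1
            pieces.append(word[start:end])
            start = end
    return pieces


def byte_ids(text: str, offset: int = 3) -> list[int]:
    """Map each UTF-8 byte to an ID, shifted to leave room for special tokens."""
    # Assumption: offset=3 reserves IDs 0-2 for special tokens (pad/eos/unk).
    return [b + offset for b in text.encode("utf-8")]


if __name__ == "__main__":
    sentence = "In Japan cloisonné is well known"
    print(greedy_subword_tokenize(sentence, TOY_VOCAB))
    # ['▁In', '▁Japan', '▁cl', 'ois', 'onné', '▁is', '▁well', '▁known']
    print(byte_ids("Japan"))
    # [77, 100, 115, 100, 113]
    # Non-ASCII characters such as "é" occupy more than one byte,
    # so the byte sequence is longer than the character sequence.
    print(len(sentence), len(byte_ids(sentence)))
```

Greedy longest match keeps the example short; a trained SentencePiece model chooses its segmentation from learned statistics and could split "cloisonné" differently. The byte-level mapping needs no learned vocabulary at all, at the cost of longer input sequences.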