Working with documents can be a daunting task. Each format has its own peculiarities, which often lead to confusing workarounds or to downloading unfamiliar software just to get around them. Luckily, there's a tool that makes this process more enjoyable and less risky.

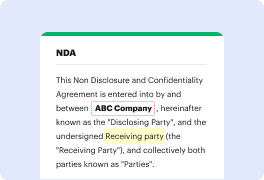

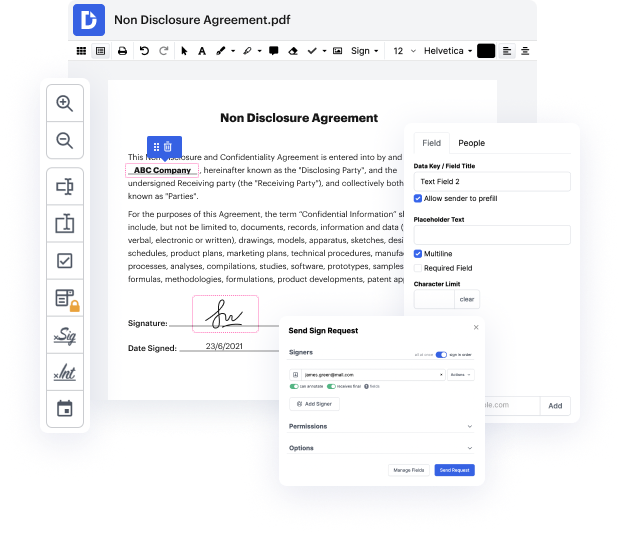

DocHub is a straightforward yet comprehensive document editing solution. Its features help you shave minutes off the editing process, and the ability to Fine-tune Year Text For Free is only a small part of DocHub's functionality.

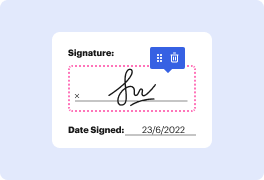

Whether you need a one-off edit or have to work through a huge document, our solution can help you Fine-tune Year Text For Free and make any other changes quickly. Editing, annotating, certifying, commenting on, and collaborating on files is straightforward with DocHub. Our solution is compatible with many file formats, so choose the one that makes your editing most frictionless. Try our editor free of charge today!

In today's tutorial, we will focus on fine-tuning a GPT-3 model. This video may be longer than usual, but the length is necessary because the topic has potential implications for large language models in the future. Before we can fine-tune, we need a prompt that produces consistent output meeting our criteria every time, for example by always keeping the same format and structure. Having the right data is crucial for fine-tuning, so we will cover how to create the desired output with a good prompt. Let's get started.
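The tutorial stresses that fine-tuning data must follow the same format and structure every time. As a minimal sketch, assuming the prompt/completion JSON Lines format that GPT-3's legacy fine-tuning accepted, the Python below builds training pairs from one fixed template and keeps only the pairs that match it. The task wording, `SEPARATOR`, `STOP`, and the helper names are hypothetical choices for illustration, and no API call is made.

```python
import json

# Hypothetical markers (assumptions, not taken from the tutorial):
# SEPARATOR ends every prompt and STOP ends every completion, so each
# training pair has an identical, machine-checkable structure.
SEPARATOR = "\n\n###\n\n"
STOP = " END"


def make_example(text: str, summary: str, keywords: list[str]) -> dict:
    """Build one prompt/completion pair from a fixed template."""
    prompt = f"Summarize the text and list keywords.\nText: {text}{SEPARATOR}"
    completion = f" Summary: {summary}\nKeywords: {', '.join(keywords)}{STOP}"
    return {"prompt": prompt, "completion": completion}


def is_consistent(example: dict) -> bool:
    """Return True only if the pair matches the expected template."""
    completion = example["completion"]
    return (
        example["prompt"].endswith(SEPARATOR)
        and completion.startswith(" Summary: ")
        and "\nKeywords: " in completion
        and completion.endswith(STOP)
    )


def to_jsonl(examples: list[dict]) -> str:
    """Serialize consistent pairs as JSON Lines (one JSON object per line)."""
    return "\n".join(json.dumps(e) for e in examples if is_consistent(e))


if __name__ == "__main__":
    data = [
        make_example("Cats sleep a lot.", "Cats rest often.", ["cats", "sleep"]),
        {"prompt": "free-form prompt", "completion": "no structure"},  # filtered out
    ]
    print(to_jsonl(data))
```

Validating every pair before writing the file is one way to enforce the "same format every time" requirement: a malformed example is dropped instead of teaching the model an inconsistent output shape.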

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
