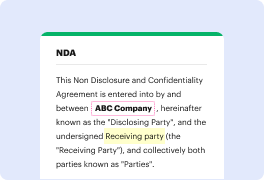

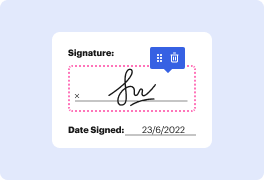

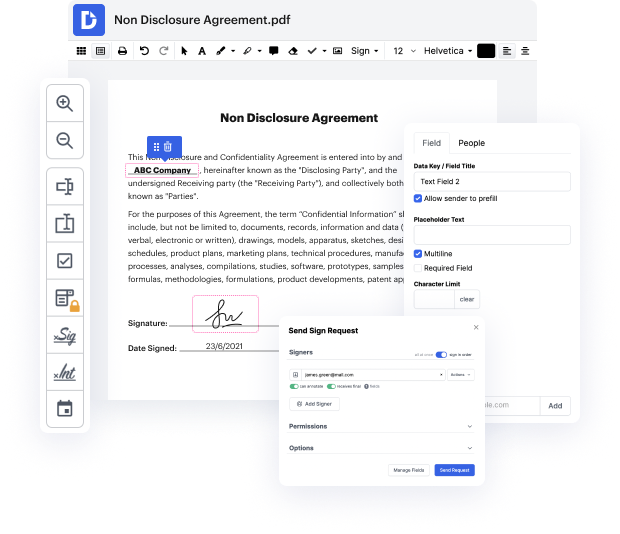

Are you having a hard time finding a reliable way to fine-tune subsidize text for free? DocHub is designed to streamline this and any other document-based process. It's easy to navigate and use, and you can change a document whenever you need to. Core features for document-based tasks, such as signing and importing text, are available even on the free plan. DocHub also integrates with multiple Google Workspace apps and other services, making document exporting and importing a piece of cake.

DocHub makes it easier to work on documents from wherever you are. You no longer need to print and scan documents back and forth to sign them or send them for signature. All the essential features are at your fingertips! Save time and hassle by completing documents in just a few clicks. Don't hesitate another minute and give DocHub a try today!

In this video tutorial, Chris McCormick discusses how to apply BERT to document classification. He explains that although BERT can be fine-tuned for sentence classification, it limits the length of the input text. Exploring that limit calls for a different dataset, such as Wikipedia comments containing personal attacks. The tutorial covers how to work with this dataset and apply BERT to document classification.
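
To make the length limitation concrete, here is a minimal sketch of two common workarounds: truncating a long document, or splitting it into overlapping windows. It is an illustration only, not the method from the video. It assumes the 512-position limit of the standard BERT checkpoints, and the function names `truncate` and `sliding_windows`, the `stride` parameter, and the placeholder token list are all hypothetical. A real pipeline would use BERT's WordPiece tokenizer rather than a pre-split list.

```python
from typing import List

MAX_LEN = 512        # assumed position limit of the standard BERT checkpoints
SPECIAL_TOKENS = 2   # [CLS] and [SEP] are added to every input sequence


def truncate(tokens: List[str], max_len: int = MAX_LEN) -> List[str]:
    """Keep only the leading tokens that fit, then add the special tokens."""
    budget = max_len - SPECIAL_TOKENS
    return ["[CLS]"] + tokens[:budget] + ["[SEP]"]


def sliding_windows(tokens: List[str], max_len: int = MAX_LEN,
                    stride: int = 128) -> List[List[str]]:
    """Split a long token list into overlapping windows that each fit the limit.

    `stride` is the number of tokens the window start advances each step.
    """
    budget = max_len - SPECIAL_TOKENS
    if stride <= 0 or stride > budget:
        raise ValueError("stride must be between 1 and max_len - 2")
    windows = []
    start = 0
    while True:
        piece = tokens[start:start + budget]
        windows.append(["[CLS]"] + piece + ["[SEP]"])
        if start + budget >= len(tokens):
            break  # this window already reaches the end of the document
        start += stride
    return windows


# Hypothetical 1,200-token document; the tokens are placeholders.
document = [f"w{i}" for i in range(1200)]
print(len(truncate(document)))
print([len(w) for w in sliding_windows(document, stride=255)])
```

Truncation is the simplest option but discards everything past the limit. Windowing keeps the whole document at the cost of several forward passes, whose predictions then have to be combined in some way (for example by averaging).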

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
