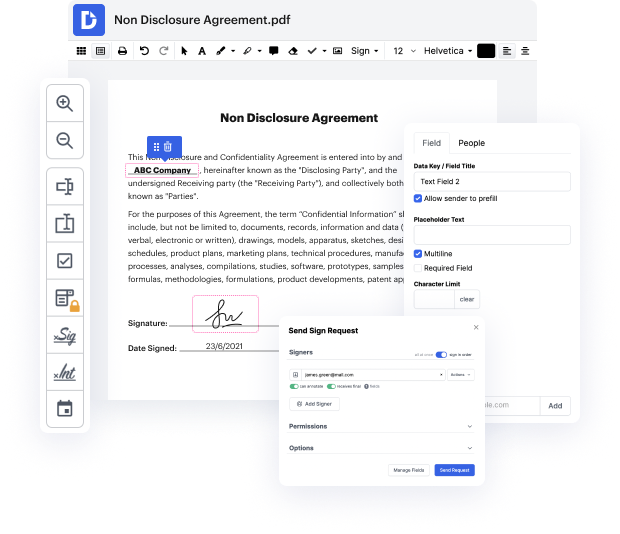

Selecting the best document management solution for your company can be time-consuming. You have to assess every nuance of the software you are considering, compare pricing plans, and stay aware of security standards. The ability to work with all formats, including CSV, is an important factor when choosing a solution. DocHub offers an extensive set of features and tools so that you can handle tasks of any complexity and work with the CSV file format. Get a DocHub account, set up your workspace, and begin working on your documents.

DocHub is an extensive all-in-one program that lets you edit your documents, eSign them, and create reusable Templates for your most commonly used forms. It offers an intuitive interface and lets you manage your contracts and agreements in CSV file format in a simplified way. You do not need to read countless guides or worry that the app is too complicated. Erase a URL in CSV, assign fillable fields to chosen recipients, and collect signatures quickly. DocHub offers powerful features for specialists of all backgrounds and needs.

Enhance your document generation and approval operations with DocHub right now. Take advantage of all this with a free trial, and upgrade your account when you are ready. Edit your documents, make forms, and discover everything that you can do with DocHub.

Hello everybody. As you can see, today we are going to scrape a list of URLs from a CSV. My subscriber wants to extract the text from all of the pages of all of the sites. He says "all data", but he's actually after all of the text: "How can I extract all the pages and sub-pages from the list of URLs in the CSV? I've found a method, but that is only working for a home page. Can anyone recode and tell me how to extract full data from a website, as we can't use XPath for each URL?"

I actually provided him with this originally, and with Beautiful Soup it worked fine, not a problem. The issue was that he wanted the text from all of the pages and not just the first page. So what I've done instead is the other thing; I cannot actually come up with another idea. If you'd like to see that, this is the list of URLs. I don't want to show them too closely and sort of give the game away, but we've got 469 URLs. I've actually been a bit crafty and used Vim to strip o
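The task described in the transcript (read URLs from a CSV, then collect the text of every page on each site rather than only the home page) can be sketched roughly as follows. This is not the code from the video, which is not shown here. It is a minimal illustration that swaps Beautiful Soup for Python's standard-library `html.parser`, and it assumes the CSV holds one URL per row with no header. The names `read_urls`, `PageParser`, `parse_page`, `crawl_site`, and the `fetch` and `max_pages` parameters are all hypothetical.

```python
import csv
import io
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


def read_urls(csv_text):
    """Return the first cell of each non-empty row (assumes one URL per row, no header)."""
    rows = csv.reader(io.StringIO(csv_text))
    return [row[0].strip() for row in rows if row and row[0].strip()]


class PageParser(HTMLParser):
    """Collects visible text and raw href values from a single HTML page."""

    SKIP = {"script", "style"}  # contents of these tags are not visible text

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.links = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.text_parts.append(data.strip())


def parse_page(html, base_url):
    """Return (page text, list of absolute links that stay on the same host)."""
    parser = PageParser()
    parser.feed(html)
    parser.close()
    site = urlparse(base_url).netloc
    same_site = []
    for href in parser.links:
        absolute = urljoin(base_url, href).split("#")[0]  # drop fragments
        if urlparse(absolute).netloc == site and absolute not in same_site:
            same_site.append(absolute)
    return " ".join(parser.text_parts), same_site


def crawl_site(start_url, fetch, max_pages=20):
    """Breadth-first crawl of one site; `fetch` is any callable mapping a URL to HTML."""
    seen, queue, texts = {start_url}, [start_url], {}
    while queue and len(texts) < max_pages:
        url = queue.pop(0)
        text, links = parse_page(fetch(url), url)
        texts[url] = text
        for link in links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return texts
```

Passing `fetch` in as a parameter keeps the crawl logic separate from the network: in practice it could wrap `urllib.request.urlopen(url).read().decode()`, while a plain dictionary lookup is enough for trying the sketch offline. The `max_pages` cap is there because following every same-site link on hundreds of sites can otherwise run for a very long time.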

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
