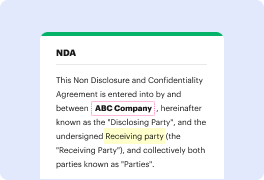

Unusual file formats in your day-to-day document management and editing can create instant confusion over how to work with them. You might need more than the software pre-installed on your computer to edit files quickly and effectively. If you need to erase a brand in Excel or make any other simple alteration to your file, choose a document editor that has the features to let you work with ease. To deal with every format, Excel included, your best choice is an editor that works well with all kinds of files.

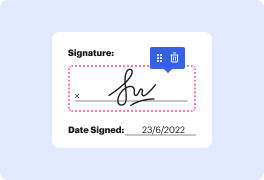

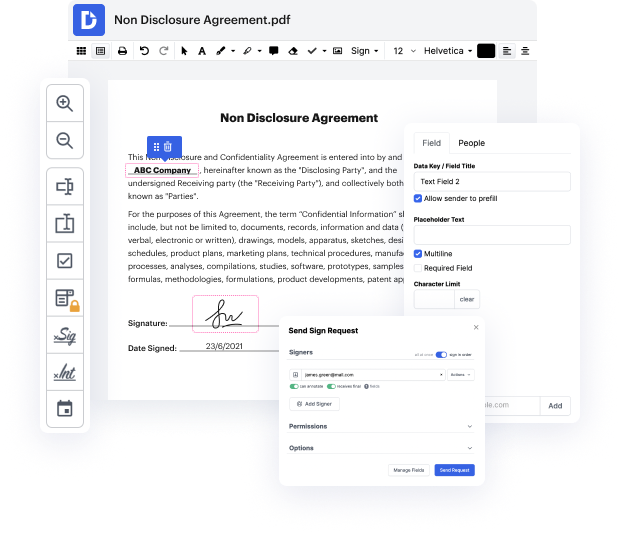

Try DocHub for efficient file management, irrespective of your document’s format. It offers powerful online editing tools that streamline your document management process. It is easy to create, edit, annotate, and share any document, as all you need to access these features is an internet connection and an active DocHub account. A single document solution is all you need. Do not waste time jumping between various programs for different files.

Enjoy the efficiency of working with a tool created specifically to streamline document processing. See how easy it is to edit any file, even the first time you deal with its format. Register for a free account now and improve your whole workflow.

Today we're going to take a look at a very common task when it comes to cleaning data, and it's also a very common interview question that you might get if you're applying for a data or financial analyst type of job: how can you remove duplicates in your data? I'm going to show you three methods. It's important that you understand the advantages and disadvantages of the different methods, and why one of these methods might return a different result from the other ones. Let's take a look.

Okay, so I have this table with sales agent, region, and sales value. I want to remove the duplicates that occur in this table, but first of all, what are the duplicates? Well, if we take a look at this row, for example, and take a look at this one, is this a duplicate? No, right? Because the sales value is different. But what about this one and this one? These are duplicates. What I want to happen is that every other occurrence of this line is removed. I just keep it once in the end re
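The definition of a duplicate used above (a row counts as a duplicate only when every column matches an earlier row, and the first occurrence is kept) can be sketched in a few lines of plain Python. This is an illustration of the idea only, not one of the three methods from the video; the function name `remove_duplicate_rows` and the sample rows are hypothetical.

```python
def remove_duplicate_rows(rows):
    """Drop rows that exactly repeat an earlier row, keeping first occurrences in order."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(row)  # compare on all columns, not just some of them
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


# Hypothetical sample data: (sales agent, region, sales value)
sales = [
    ("Ana", "North", 1200),
    ("Ben", "South", 800),
    ("Ana", "North", 1500),  # same agent and region, different value: not a duplicate
    ("Ben", "South", 800),   # exact repeat of the second row: removed
]

deduped = remove_duplicate_rows(sales)
print(deduped)
```

Because the comparison key is the whole row, two rows that share an agent and region but differ in sales value are both kept. Comparing on a subset of columns instead would remove more rows, which is one way that different approaches to the same task can give different results.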

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
