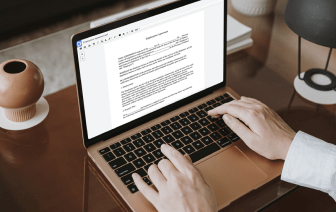

Selecting the ideal document management platform for your organization can be time-consuming. You must assess every nuance of the platform you are considering, compare price plans, and stay aware of security standards. Arguably, the ability to work with all formats, including NEIS, is vital when considering a solution. DocHub provides a substantial set of capabilities and tools to ensure that you can handle tasks of any complexity and take care of NEIS formatting. Register a DocHub profile, set up your workspace, and begin working with your documents.

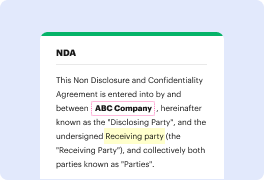

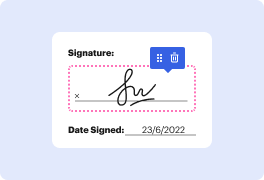

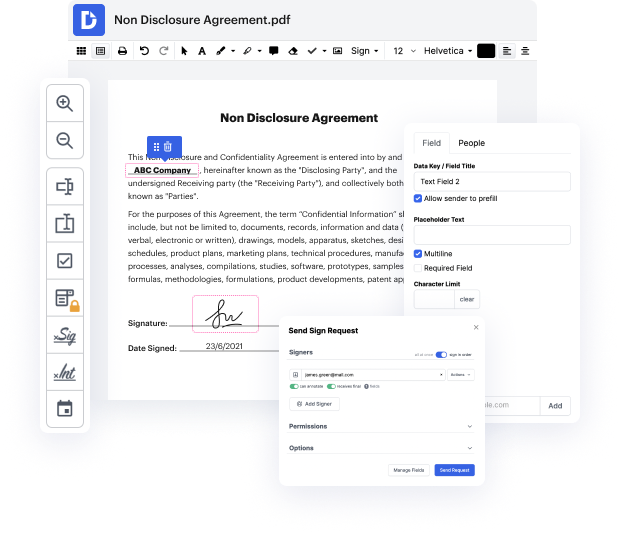

DocHub is a comprehensive all-in-one program that allows you to edit your documents, eSign them, and create reusable Templates for your most frequently used forms. It provides an intuitive user interface and lets you manage your contracts and agreements in NEIS format in a simplified way. You do not have to read numerous guides or feel anxious because the software is too complex. Embed size in NEIS, delegate fillable fields to selected recipients, and gather signatures quickly. DocHub is all about powerful capabilities for experts of all backgrounds and needs.

Boost your document generation and approval operations with DocHub right now. Enjoy all of this with a free trial and upgrade your profile when you are ready. Modify your documents, create forms, and learn everything you can do with DocHub.

If I say "the cat purrs" or "this cat hunts mice", it's perfectly reasonable to also say "the kitty purrs" or "this kitty hunts mice". The context gives you a strong idea that those words are similar. You have to be catlike to purr and hunt mice. So, let's learn to predict a word's context. The hope is that a model that's good at predicting a word's context will have to treat "cat" and "kitty" similarly, and will tend to bring them closer together. The beauty of this approach is that you don't have to worry about what the words actually mean; they reveal their meaning directly by the company they keep. There are many ways to use this idea that similar words occur in similar contexts. In our case, we're going to use it to map words to small vectors called embeddings, which are going to be close to each other when words have similar meanings, and far apart when they don't. Embedding solves some of the sparsity problem. Once you have embedded your word into this small vector, now you have a word representa