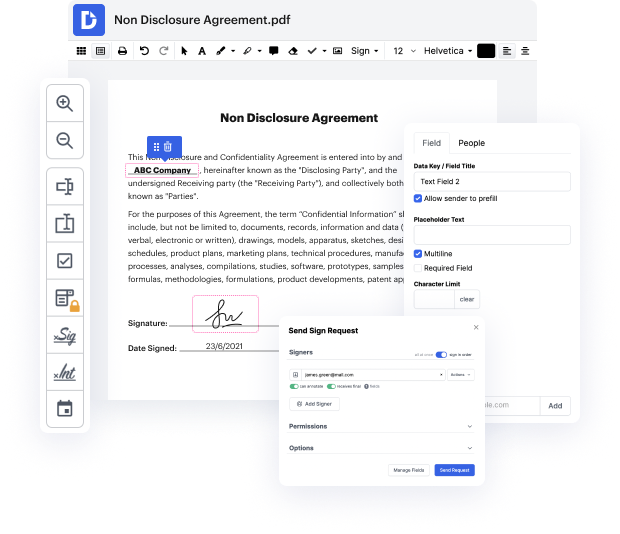

Have you ever struggled with editing your Text document while on the go? Well, DocHub has an excellent solution for that! Access this cloud editor from any internet-connected device. It allows users to Embed register in Text files quickly and anytime needed.

DocHub will surprise you with what it offers. It has powerful capabilities to make whatever updates you want to your paperwork. And its interface is so easy to use that the entire process from start to finish will take you only a few clicks.

Once you complete adjusting and sharing, you can save your updated Text document on your device or to the cloud as it is or with an Audit Trail that includes all modifications applied. Also, you can save your paperwork in its original version or turn it into a multi-use template - accomplish any document management task from anywhere with DocHub. Sign up today!

In this NLP playlist we have covered text representation techniques from label encoding to TF-IDF. Today we are going to talk about word embeddings. Bag of words and TF-IDF have certain limitations, which we discussed in previous videos. The first is that the vector size can get really big, which may consume a lot of compute resources, memory, and so on. Let's say you have a vocabulary of 100,000 or 200,000 words: the vector for each document would then have 100,000 or more entries, and that may be too much. The representation is also sparse, meaning most of the values in that vector are 0, so it is not a very efficient representation.

The other problem we saw: let's say you have two sentences, "I need help" and "I need assistance". These are similar sentences, and you expect their vector representations to be similar. But since TF-IDF and bag of words are count-based methods, the vector representations might not be similar. Here you can see there
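The two limitations described in the transcript can be illustrated with a minimal sketch in plain Python. This is not code from the video: the helper names `bow_vector` and `cosine`, the extra sentence "I need pizza", and the `filler_*` padding words are assumptions made up for illustration. The sketch builds raw count vectors and shows that a bag-of-words model scores "I need assistance" no closer to "I need help" than an unrelated sentence, and that a short sentence over a 100,000-word vocabulary is almost entirely zeros.

```python
import math
from collections import Counter


def bow_vector(sentence, vocabulary):
    """Return raw word counts for `sentence`, one entry per vocabulary word."""
    counts = Counter(sentence.lower().split())
    return [counts[word] for word in vocabulary]


def cosine(u, v):
    """Cosine similarity between two equal-length count vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


# "I need pizza" is a hypothetical control sentence, not from the video.
sentences = ["I need help", "I need assistance", "I need pizza"]
vocabulary = sorted({w for s in sentences for w in s.lower().split()})

help_vec, assistance_vec, pizza_vec = (bow_vector(s, vocabulary) for s in sentences)

# Problem 1: counts carry no notion of meaning. The synonym pair and the
# unrelated pair share exactly the same words ("i", "need"), so they get
# the same similarity score.
sim_synonym = cosine(help_vec, assistance_vec)
sim_unrelated = cosine(help_vec, pizza_vec)
print("help vs assistance:", sim_synonym)
print("help vs pizza:     ", sim_unrelated)

# Problem 2: sparsity. Pad the vocabulary with made-up filler words to reach
# the 100,000-word size mentioned in the transcript.
padded_vocab = vocabulary + [f"filler_{i}" for i in range(100_000 - len(vocabulary))]
big_vec = bow_vector("I need help", padded_vocab)
nonzero = sum(1 for x in big_vec if x != 0)
print("vector length:", len(big_vec), "non-zero entries:", nonzero)
```

Both similarity scores come out identical because the only overlap in each pair is "I" and "need"; the words "help", "assistance", and "pizza" each sit in their own unrelated dimension.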

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
