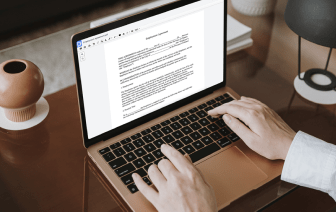

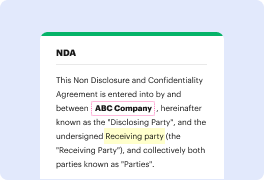

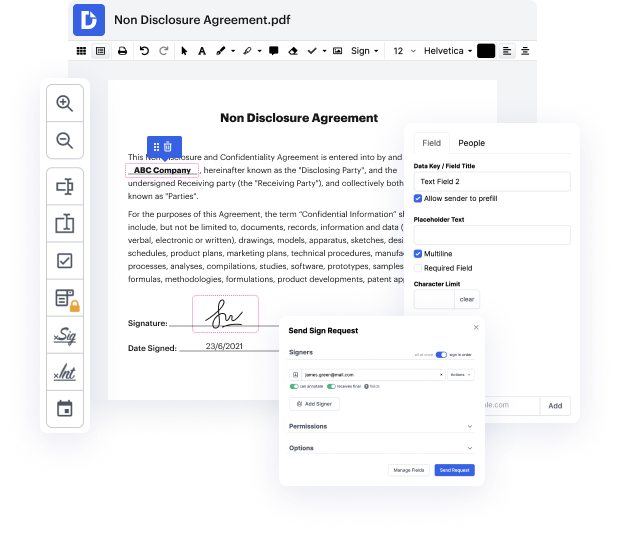

Have you ever struggled to modify an HWPML document while on the go? DocHub has a solution for that: access this online editor from any internet-connected device. It lets you embed identification in HWPML files quickly, whenever you need to.

DocHub offers more than you might expect. It has robust capabilities for making whatever updates you want to your forms, and its interface is so easy to use that the entire process, from beginning to end, takes only a few clicks.

Once you finish editing and sharing, you can save your updated HWPML document to your device or to the cloud, either as it is or with an Audit Trail that records all the adjustments applied. You can also save your paperwork in its original version or convert it into a multi-use template. Accomplish any document management task from anywhere with DocHub. Subscribe today!

Word embeddings are mathematical representations of text, but of course that is easier said than done. So in this video, let's learn what word embeddings are, how they are created, and how you can start using them.

The first question to arise, of course, is: why do we need text embeddings at all? Well, the problem is that when you're working with NLP models, you are working with text, and text is not good for machine learning models. They cannot deal with text; they don't know what to do with it. What they do know how to handle is numbers, so that's why you have to represent your text in a numeric format.

There are a bunch of ways you can represent your text data, and it's not only embeddings: you can try a one-hot encoded approach, you can try a count-based approach, or of course you can try embeddings. But before we get into embeddings, let's learn what one-hot encoding and count-based approaches are. You might have heard of one-hot encoding in other contexts too, but in this c