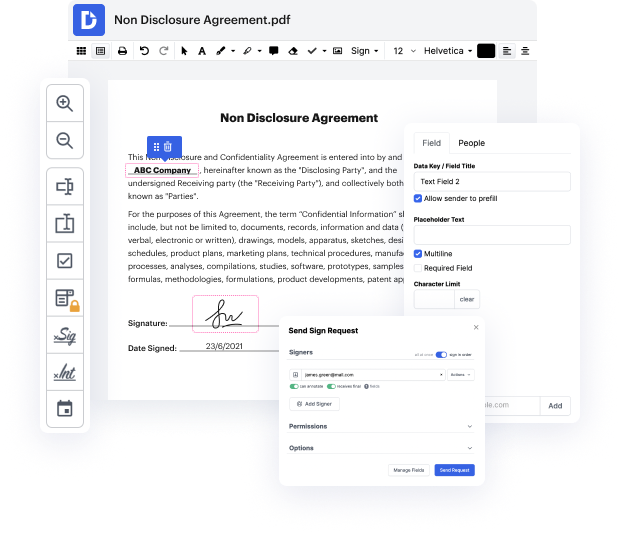

There are many document editing tools on the market, but only some are compatible with all file formats. Others are versatile yet burdensome to work with. DocHub addresses both problems with its cloud-based editor, which offers powerful features for handling your document management tasks efficiently. If you need to quickly embed a character in a Text file, DocHub is the best option for you!

Our process is very straightforward: you upload your Text file to our editor → it automatically transforms it to an editable format → you apply all necessary adjustments and professionally update it. You only need a couple of moments to get your work ready.

After all changes are applied, you can turn your paperwork into a reusable template. Go to our editor’s left-side Menu and click Actions → Convert to Template. You’ll find your paperwork stored in a separate folder in your Dashboard, saving you time the next time you need the same template. Try DocHub today!

Hey there and welcome to this video! Today I will talk about the Embedding layer of PyTorch. I'm going to explain what it does and show you some common use cases, and finally I will code up an example that implements a character-level language model that can generate any text whatsoever. As you can see, I'm on the official documentation of PyTorch, and they describe the Embedding layer in the following way: a simple lookup table that stores embeddings of a fixed dictionary and size. What we can also see is that it has two positional arguments, one of them being the number of embeddings and the second one being the embedding dimension. If I were to explain it in my own words, Embedding is just a two-dimensional array wrapped in the module container with some additional functionality. Most importantly, the rows represent different entities one wants to embed. So what do I mean by an entity? One very common example comes from the field of Natural language proce
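The lookup-table behaviour described in the transcript can be sketched in a few lines. This is a minimal illustration, not the code from the video: the toy string, the `char_to_idx` mapping, and the embedding dimension of 4 are all assumptions chosen for the example. Only `torch.nn.Embedding` and its two positional arguments come from the transcript.

```python
import torch
from torch import nn

# Hypothetical toy corpus: every distinct character is one "entity" to embed.
text = "hello world"
vocab = sorted(set(text))
char_to_idx = {ch: i for i, ch in enumerate(vocab)}

torch.manual_seed(0)  # make the random initial weights reproducible

# The two positional arguments: number of embeddings (rows) and
# embedding dimension (columns). The dimension 4 is an arbitrary choice.
embedding = nn.Embedding(len(vocab), 4)

# Embedding a sequence is a row lookup: one weight row per character index.
indices = torch.tensor([char_to_idx[ch] for ch in "hello"])
vectors = embedding(indices)

print(embedding.weight.shape)  # one row per distinct character
print(vectors.shape)           # one vector per character of "hello"
```

Because the layer is a plain lookup, the two `l` characters in `"hello"` map to the same row of `embedding.weight` and therefore get identical vectors. The rows start out random and only become meaningful once the layer is trained as part of a model.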

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
