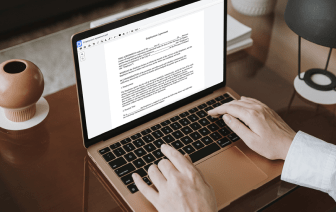

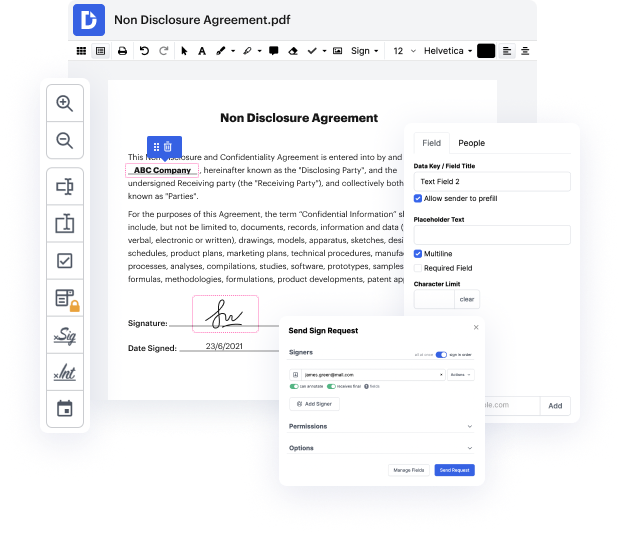

However labor-intensive and difficult to change your files are, DocHub offers an easy way to modify them. You can alter any element in your LWP file with little effort. Whether you need to fine-tune a single element or the entire document, you can entrust the task to our solution for quick, quality results.

In addition, it makes sure that the final file is always ready to use, so you can get on with your projects without delay. Our feature set also includes professional productivity tools and a library of templates, so you can make the most of your workflows without wasting time on recurring tasks. Moreover, you can access your documents from any device and integrate DocHub with other solutions.

DocHub can take care of any of your document management activities. With a wide range of features, you can generate and export documents however you choose. Everything you upload to DocHub’s editor will be stored securely for as long as you need, with strict protection and information security frameworks in place.

Try out DocHub now and make handling your paperwork easier!

In today's short class we're going to take a look at some example Perl code, and we're going to write a web crawler using Perl. This is going to be just a very simple piece of code that's going to go to a website, download the raw HTML, iterate through that HTML and find the URLs, and retrieve those URLs and store them as a file.

We're going to create a series of files, and in our initial iteration we're going to choose just about 10 or so websites, just so that we get to the end and we don't download everything. If you want to play along at home you can, of course, download as many websites as you have disk space for. So we'll choose websites at random, and what we're going to write is a series of HTML files numbered 0.html, 1.html, 2.html and so on, and then a map file that contains the number and the original URL.

So let's get started with the Perl code. So we're going to write a program called web crawler dot pl h
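The transcript breaks off before any code appears, so here is a minimal sketch of the kind of crawler it describes, not the presenter's actual program. Several things are assumptions: it uses the core `HTTP::Tiny` module rather than whatever library the video uses, it finds links with a simple regex instead of an HTML parser, it visits links in the order they are found rather than at random, and the names `extract_urls`, `fetch_page`, `crawl` and `map.txt` are made up for illustration.

```perl
#!/usr/bin/perl
use strict;
use warnings;
use HTTP::Tiny;    # core since Perl 5.14; an assumption, the video may use another library

# Pull absolute http(s) URLs out of href="..." attributes, dropping duplicates.
# A regex is only a rough approximation of real HTML parsing.
sub extract_urls {
    my ($html) = @_;
    my %seen;
    return grep { !$seen{$_}++ }
        $html =~ /href\s*=\s*["'](https?:\/\/[^"'\s>]+)["']/gi;
}

# Default fetcher: returns the page body, or undef if the request failed.
sub fetch_page {
    my ($url) = @_;
    my $res = HTTP::Tiny->new( timeout => 10 )->get($url);
    return $res->{success} ? $res->{content} : undef;
}

# Starting from $start, save at most $limit pages into $dir as 0.html, 1.html, ...
# and write one "number<TAB>url" line per saved page to $dir/map.txt.
# $fetch is an optional code reference that replaces the network fetcher.
sub crawl {
    my ( $start, $limit, $dir, $fetch ) = @_;
    $fetch ||= \&fetch_page;
    my @queue  = ($start);
    my %queued = ( $start => 1 );
    my $count  = 0;

    open my $map, '>', "$dir/map.txt" or die "Cannot write $dir/map.txt: $!";
    while ( @queue && $count < $limit ) {
        my $url  = shift @queue;
        my $html = $fetch->($url);
        next unless defined $html;    # skip pages that could not be downloaded

        open my $out, '>', "$dir/$count.html"
            or die "Cannot write $dir/$count.html: $!";
        print {$out} $html;
        close $out;

        print {$map} "$count\t$url\n";
        $count++;

        # Queue every link we have not queued before.
        for my $link ( extract_urls($html) ) {
            push @queue, $link unless $queued{$link}++;
        }
    }
    close $map;
    return $count;
}

# Only crawl when a start URL is given on the command line.
if (@ARGV) {
    my $saved = crawl( $ARGV[0], 10, '.' );
    print "Saved $saved pages\n";
}
```

Saved under any file name and run with a start URL as its only argument, this writes the numbered HTML files and the map file into the current directory and stops after 10 pages. Raising the limit passed to `crawl` downloads more, as far as disk space allows.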