Document generation and approval are a core focus of every organization. Whether you handle large batches of files or a single agreement, you need to stay at the top of your productivity. Finding an online platform that tackles your most common document creation and approval problems can take a lot of work. Many online apps provide only a minimal set of editing and signature capabilities, and only some of those are useful for handling the TXT format. A solution that handles any format and task is an excellent choice when deciding on an application.

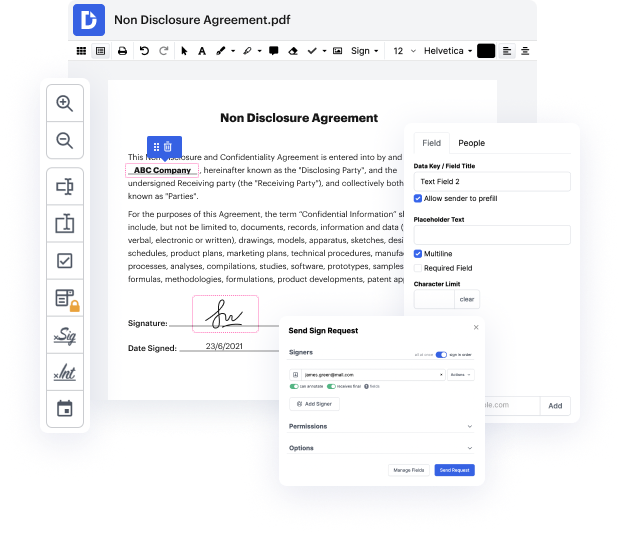

Take document management and creation to another level of efficiency and excellence without settling for a difficult program interface or a costly subscription plan. DocHub offers you tools and features to deal successfully with all document types, including TXT, and to perform tasks of any difficulty. Edit, arrange, and create reusable fillable forms without effort. Get complete freedom and flexibility to correct a page in TXT anytime, and securely store all your completed files in your user profile or in one of several integrated cloud storage apps.

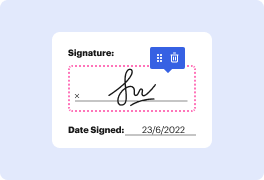

DocHub offers loss-free editing, eSignature collection, and TXT management at an expert level. You don’t need to go through tedious guides and spend a lot of time figuring out the application. Make top-tier secure document editing a standard process for your daily workflows.

How to fix "Blocked by robots.txt" in the Page indexing report in the latest Google Search Console. In this video session, I'm going to show you how to test and troubleshoot this particular issue that your website may be having with Google. When you're looking at the Page indexing report, you simply press on "Blocked by robots.txt", and Google shows you some of the URLs here. The first line of action, obviously, is to press on "All submitted pages". In my scenario, I have no issues whatsoever for the submitted pages. Submitted pages come from your sitemaps, which means this is the map of your website you're telling Google about when you use XML sitemaps. So now let's go back to "All known pages" so that I can create this tutorial for you. In my scenario, the "Blocked by robots.txt" error reporting is giving me some example URLs here, so let's press on one. On the right-hand side we have "Test robots.txt blocking", so let's press on that. If you're not able to use this legacy tool, then check out rank your youtube channel video session t
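The same kind of check can be approximated outside Search Console. The sketch below is not Google's tester: it uses Python's standard `urllib.robotparser`, which follows the basic robots.txt rules and may not match Googlebot's handling of wildcards or rule precedence. The helper name `blocked_urls`, the sample rules, and the `example.com` URLs are all hypothetical and only illustrate the idea.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent.

    Hypothetical helper for illustration; it approximates, but does not
    reproduce, how Google evaluates robots.txt.
    """
    parser = RobotFileParser()
    # Parse the rules from text so no network request is needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Sample rules and URLs (assumptions, not taken from a real site).
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

SAMPLE_URLS = [
    "https://example.com/",
    "https://example.com/private/report.html",
]

if __name__ == "__main__":
    for url in blocked_urls(SAMPLE_ROBOTS, SAMPLE_URLS):
        print("blocked:", url)
```

To check a live site instead, paste the contents of its robots.txt file and the example URLs from the report into the two sample values. Any URL that is reported as blocked but should be indexed points to a `Disallow` rule worth reviewing.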

At DocHub, your data security is our priority. We follow HIPAA, SOC2, GDPR, and other standards, so you can work on your documents with confidence.
