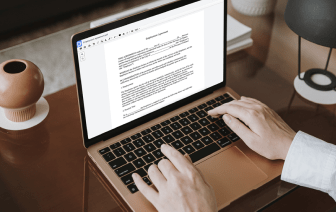

Unusual file formats in your everyday document management and editing can cause instant confusion about how to edit them. You might need more than your computer's pre-installed software for fast, efficient document editing. If you need to clean up text in HWP or make any other basic alteration to your document, choose a document editor with the features that let you work with ease. To handle all formats, including HWP, an editor that works properly with all kinds of documents is your best choice.

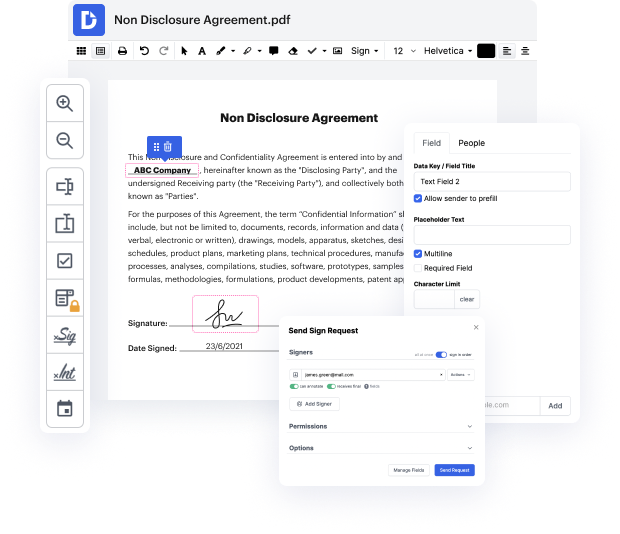

Try DocHub for effective document management, regardless of your document’s format. It has powerful online editing tools that streamline your document management operations. It is easy to create, edit, annotate, and share any document, as all you need to access these features is an internet connection and a functioning DocHub profile. Just one document solution is all you need. Do not lose time jumping between different applications for different documents.

Enjoy the efficiency of working with a tool made specifically to streamline document processing. See how straightforward it is to modify any document, even when it is the first time you have dealt with its format. Sign up for an account now and enhance your whole working process.

Hi. Text cleaning is one of the major activities in a natural language processing pipeline. Sometimes real-world data is so messy that you will spend most of your time cleaning the text before it is ready to be fed into the model. So in this video we are going to see some handy methods and functions that you can use for cleaning NLP data. It will be a combination of custom-written functions and, in some cases, packages that are readily available to use in your NLP pipeline. So let's get started.

In this case I'm going to use the well-known 20 newsgroups data set. The 20 newsgroups data set is available as part of the scikit-learn data sets, so I'm just importing it: from sklearn.datasets import fetch_20newsgroups. Then I'm just taking the training data set out of it. There is a test set as well, but I'm just going to use the training data set. I am assigning it to newsgroup_train. I am just importing
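The loading step described in the transcript can be sketched roughly as follows. This is a minimal sketch, not the video's actual code: `load_newsgroup_train` and `basic_clean` are hypothetical helper names introduced here for illustration, and `basic_clean` only stands in for the kind of custom-written cleaning function the speaker mentions. The first call to `fetch_20newsgroups` downloads the data, so it needs an internet connection.

```python
import re


def load_newsgroup_train():
    """Return the training split of the 20 newsgroups data set."""
    # Imported lazily so the cleaning helper below works without scikit-learn.
    from sklearn.datasets import fetch_20newsgroups

    # subset="train" selects the training split; "test" is also available.
    return fetch_20newsgroups(subset="train")


def basic_clean(text):
    """Hypothetical cleaning helper: lowercase and collapse whitespace runs."""
    text = text.lower()
    return re.sub(r"\s+", " ", text).strip()


if __name__ == "__main__":
    newsgroup_train = load_newsgroup_train()
    # .data is a list of raw post strings, .target holds the class labels.
    print(len(newsgroup_train.data))
    print(basic_clean(newsgroup_train.data[0])[:200])
```

Keeping the download inside a function means the cleaning helper can be tried on any string without fetching the data set first.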