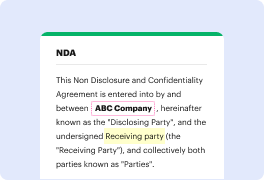

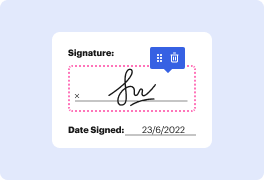

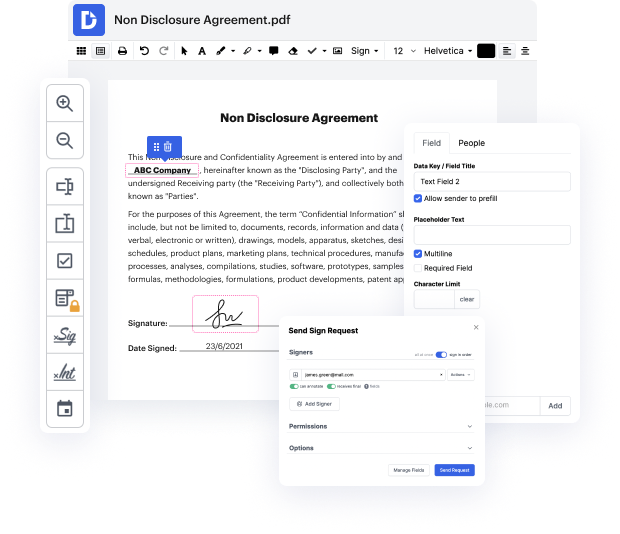

Are you looking for a way to Adapt Requisite Field Bulletin For Free, or to make other edits to a document without downloading an application? Then DocHub is what you’re after. It’s easy, user-friendly, and safe to use. Even on DocHub’s free plan, you can use its editing, annotating, signing, and sharing features to stay on top of your tasks. The solution also integrates with Google products, Dropbox, Box, OneDrive, and others, which streamlines transferring and exporting files.

Don’t waste hours looking for the right way to Adapt Requisite Field Bulletin For Free. DocHub provides everything you need to make the process as simple as possible. You don’t have to worry about the security of your data; we comply with current data-protection regulations to protect your sensitive information from security threats. Sign up for a free account and see how easy it is to work on your paperwork productively. Try it now!

This text summarizes adversarial training for robust models in supervised machine learning. It explains how classifiers are trained on labeled data, for example to distinguish pandas from pumpkins. Although these classifiers work well on natural images, they fail on adversarial examples: inputs in which a slight change to an image causes the classifier to misclassify it. The goal is to create adversarial examples that look like the original image but are classified differently. Different metrics can be used to measure how close an adversarial example is to the original image.
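
To make the idea concrete, here is a minimal sketch under loudly stated assumptions: the "panda vs. pumpkin" classifier is replaced by a hypothetical linear model, `score(x) = w . x + b`, and the attack is the fast gradient sign method (FGSM), a standard technique that the summary itself does not name. The weights, the 100-"pixel" image, and the budget `eps` are made-up numbers chosen for illustration. Closeness to the original is measured with the L-infinity and L2 distances, two common choices of metric.

```python
"""Toy FGSM-style attack on a linear "panda vs. pumpkin" classifier.

Everything here is a hypothetical illustration: the model is assumed to be
linear, score(x) = w . x + b, predicting "panda" when the score is positive.
"""
import math
from typing import List

Vector = List[float]


def score(w: Vector, b: float, x: Vector) -> float:
    """Linear decision score; its gradient with respect to x is just w."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def classify(w: Vector, b: float, x: Vector) -> str:
    """Return the predicted label for image x."""
    return "panda" if score(w, b, x) > 0 else "pumpkin"


def sign(v: float) -> float:
    """Return +1.0, -1.0, or 0.0 according to the sign of v."""
    return float((v > 0) - (v < 0))


def fgsm_linear(w: Vector, b: float, x: Vector, eps: float) -> Vector:
    """Fast gradient sign method for a linear model.

    Each pixel moves by at most eps in the direction that pushes the score
    toward the opposite class, so the L-infinity distance to x is at most
    eps. Results are clipped to the valid pixel range [0, 1].
    """
    direction = -sign(score(w, b, x))  # lower a positive score, raise a negative one
    return [min(1.0, max(0.0, xi + direction * eps * sign(wi)))
            for wi, xi in zip(w, x)]


def linf(x: Vector, y: Vector) -> float:
    """L-infinity distance: the largest change made to any single pixel."""
    return max(abs(a - c) for a, c in zip(x, y))


def l2(x: Vector, y: Vector) -> float:
    """Euclidean (L2) distance between two images."""
    return math.sqrt(sum((a - c) ** 2 for a, c in zip(x, y)))


# Usage with made-up numbers: 100 "pixels", weights alternating +0.1 / -0.1.
w = [0.1 if i % 2 == 0 else -0.1 for i in range(100)]
b = 0.0
x = [0.52 if i % 2 == 0 else 0.48 for i in range(100)]  # score is about +0.2
x_adv = fgsm_linear(w, b, x, eps=0.03)

print(classify(w, b, x), classify(w, b, x_adv))  # panda pumpkin
print(round(linf(x, x_adv), 4), round(l2(x, x_adv), 4))  # 0.03 0.3
```

No pixel changes by more than 0.03, yet the label flips. For a linear model, the score shifts by `eps` times the sum of `|w_i|` (here 0.03 × 10 = 0.3), which exceeds the original margin of about 0.2; tiny per-pixel changes add up across many dimensions.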

At DocHub, your data security is our priority. We follow HIPAA, SOC 2, GDPR, and other standards, so you can work on your documents with confidence.