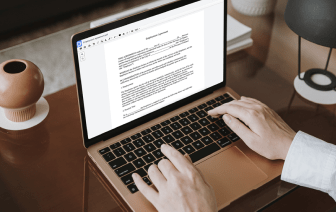

Document generation and approval are a core priority for every company. Whether you are handling large batches of files or a single agreement, you have to stay at the top of your productivity. Finding an online platform that tackles your most frequent document generation and approval challenges can take a lot of work. Many online platforms offer only a minimal set of editing and signature features, some of which may be useful for handling LWP formatting. A solution that handles any format and task is a good choice when selecting software.

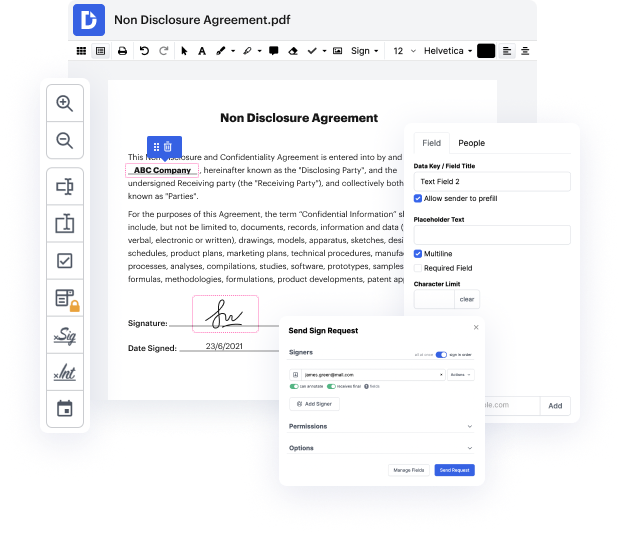

Take document management and generation to another level of simplicity and excellence without a cumbersome interface or an expensive subscription plan. DocHub gives you the tools and features to work efficiently with all document types, including LWP, and to perform tasks of any complexity. Edit, organize, and create reusable fillable forms without effort. Get full freedom and flexibility to add markings to LWP documents at any moment, and store all of your files securely in your user profile or in one of the many integrated cloud storage platforms.

DocHub offers lossless editing, eSignature collection, and LWP management at a professional level. You do not have to work through exhausting guides or spend a lot of time figuring out the platform. Make top-tier, secure document editing a standard part of your day-to-day workflows.
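
The lesson transcribed below builds a simple web crawler in Perl: download a page's raw HTML, pull out the URLs it links to, fetch those in turn, save each page as a numbered file (0.html, 1.html, 2.html, ...) and record each number with its original URL in a map file, stopping after about 10 pages. What follows is a minimal sketch of that idea, not the lesson's own code (which continues past this excerpt). It rests on several assumptions: downloads use the core HTTP::Tiny module; only absolute http(s) links inside href attributes are found, using a regular expression rather than a real HTML parser; pages are visited in discovery (breadth-first) order rather than at random; and the map file is named map.txt. The fetcher argument to the hypothetical `crawl` function is an illustrative design choice that lets the crawl loop run without network access.

```perl
#!/usr/bin/perl
# webcrawler.pl: minimal breadth-first web crawler sketch.
# ASSUMPTIONS (not from the lesson): core HTTP::Tiny for downloads,
# a regex for absolute http(s) hrefs, and a map file named map.txt.
use strict;
use warnings;
use HTTP::Tiny;

# Return every absolute http(s) URL found in an href attribute of $html.
# A regex is a deliberate simplification; an HTML parser is more robust.
sub extract_urls {
    my ($html) = @_;
    my @urls = $html =~ /href\s*=\s*["'](https?:\/\/[^"'\s]+)["']/gi;
    return @urls;
}

# Crawl breadth-first from $seed, saving at most $limit pages in $dir as
# 0.html, 1.html, ... and writing "number<TAB>url" lines to map.txt.
# $fetch is a code ref that returns a page's HTML, or undef on failure.
sub crawl {
    my ($seed, $limit, $dir, $fetch) = @_;
    my @queue = ($seed);
    my %seen  = ($seed => 1);    # never queue the same URL twice
    my $count = 0;

    open my $map, '>', "$dir/map.txt" or die "cannot write map.txt: $!";
    while (@queue && $count < $limit) {
        my $url  = shift @queue;
        my $html = $fetch->($url);
        next unless defined $html;    # skip pages that failed to download

        my $file = "$dir/$count.html";
        open my $out, '>', $file or die "cannot write $file: $!";
        print {$out} $html;           # store the raw HTML unchanged
        close $out;
        print {$map} "$count\t$url\n";
        $count++;

        # Queue newly discovered links; $seen{$link}++ is false only the first time.
        for my $link (extract_urls($html)) {
            push @queue, $link unless $seen{$link}++;
        }
    }
    close $map;
    return $count;
}

# Default fetcher: HTTP GET via HTTP::Tiny, returning undef on any failure.
sub http_fetch {
    my ($url) = @_;
    my $res = HTTP::Tiny->new(timeout => 10)->get($url);
    return $res->{success} ? $res->{content} : undef;
}

# Crawl only when a seed URL is passed on the command line.
if (@ARGV) {
    my $saved = crawl($ARGV[0], 10, '.', \&http_fetch);
    print "saved $saved pages\n";
}
```

For example, `perl webcrawler.pl https://example.com/` would save up to 10 pages in the current directory, with map.txt listing which URL each numbered file came from. Because the fetcher is passed in as a code reference, the same loop can be exercised with a hash of canned pages instead of live HTTP requests.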

In today's short class we're going to take a look at some example Perl code, and we're going to write a web crawler using Perl. This is going to be just a very simple piece of code that's going to go to a website, download the raw HTML, iterate through that HTML, find the URLs, retrieve those URLs, and store each one as a file. We're going to create a series of files, and in our initial iteration we're going to choose just 10 or so websites, just so that we get to the end and don't download everything. If you want to play along at home, you can of course download as many websites as you have disk space for. So we'll choose websites at random, and what we're going to write is a series of HTML files numbered 0.html, 1.html, 2.html, and so on, and then a map file that contains the number and the original URL. So let's get started with the Perl code. We're going to write a program called webcrawler.pl. Here's our web crawler. We're going to start, as we've done before, with what's called the