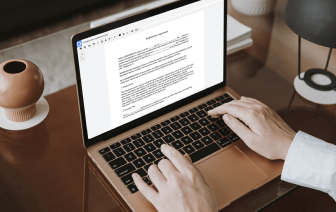

When your daily work involves a lot of document editing, you quickly realize that every file format needs its own approach and sometimes a dedicated application. A seemingly simple LWP file can bring the whole process to a halt, especially when you try to edit it with inadequate software. To avoid these difficulties, get an editor that covers all your requirements regardless of file format, and fix writing in LWP files without roadblocks.

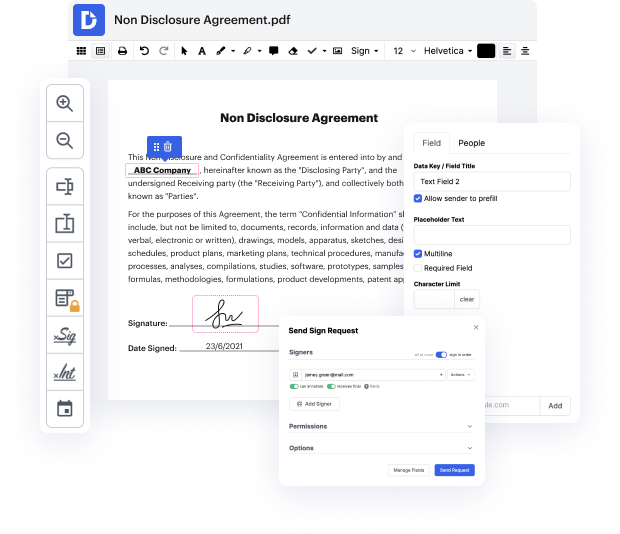

With DocHub, you get an editing multitool for almost any occasion or file type. Spend less time navigating your old software's features and learn our intuitive interface as you work. DocHub is a sleek online editing platform that covers your file-processing needs for virtually any file, including LWP. Open it and get straight to work: no prior training or instructions are needed to benefit from what DocHub brings to document management. Start by taking a few moments to create your account now.

See improvements in your document processing as soon as you open your DocHub account. Save editing time with a single platform that helps you work more efficiently with any file format you need.

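The tutorial transcript that follows builds a simple Perl crawler. It fetches a page, pulls the URLs out of its raw HTML, saves each page as a numbered file (0.html, 1.html, ...), and records the number and original URL in a map file. Below is a minimal sketch of that idea under stated assumptions; it is not the tutorial's own code. It uses the core HTTP::Tiny module for fetching, which may differ from what the tutorial uses. The map file name `map.txt`, its tab-separated format, the breadth-first queue, and the helper names `extract_urls`, `save_page` and `crawl` are all hypothetical. URL extraction is a rough regex over quoted `href` attributes rather than a real HTML parser. Fetching `https` URLs with HTTP::Tiny also requires IO::Socket::SSL and Net::SSLeay to be installed.

```perl
#!/usr/bin/perl
# webcrawler.pl: minimal crawler sketch (hypothetical helpers, not the tutorial's code)
use strict;
use warnings;
use HTTP::Tiny;    # core module since Perl 5.14
use File::Spec;

# Return the absolute http(s) URLs found in quoted href attributes.
# Relative links such as href="/about" are skipped by this heuristic.
sub extract_urls {
    my ($html) = @_;
    my @urls = $html =~ /href\s*=\s*["'](https?:\/\/[^"'\s>]+)["']/gi;
    return @urls;
}

# Write one page body to "<n>.html" in $dir and append "<n>\t<url>" to the map file.
sub save_page {
    my ($dir, $n, $url, $content, $map_fh) = @_;
    my $path = File::Spec->catfile($dir, "$n.html");
    open my $out, '>', $path or die "Cannot write $path: $!";
    print {$out} $content;
    close $out;
    print {$map_fh} "$n\t$url\n";
}

# Breadth-first crawl: fetch a URL, save it, queue any unseen links it contains,
# and stop once $max_pages pages have been saved. Returns the number saved.
sub crawl {
    my ($start_url, $dir, $max_pages) = @_;
    my $http     = HTTP::Tiny->new(timeout => 10);
    my @queue    = ($start_url);
    my %seen     = ($start_url => 1);
    my $map_path = File::Spec->catfile($dir, 'map.txt');
    open my $map_fh, '>', $map_path or die "Cannot write $map_path: $!";

    my $n = 0;
    while (@queue && $n < $max_pages) {
        my $url = shift @queue;
        my $res = $http->get($url);
        next unless $res->{success};    # skip failed fetches without using a number
        save_page($dir, $n, $url, $res->{content}, $map_fh);
        $n++;
        for my $link (extract_urls($res->{content})) {
            push @queue, $link unless $seen{$link}++;    # queue each URL only once
        }
    }
    close $map_fh;
    return $n;
}

# Run only when invoked directly with arguments:
#   perl webcrawler.pl START_URL [OUT_DIR] [MAX_PAGES]
if (!caller() && @ARGV) {
    my $start = $ARGV[0];
    my $dir   = $ARGV[1] // '.';
    my $max   = $ARGV[2] // 10;    # "about 10 or so" pages, as in the tutorial
    mkdir $dir unless -d $dir;
    my $saved = crawl($start, $dir, $max);
    print "Saved $saved pages to $dir\n";
}
```

For example, `perl webcrawler.pl https://example.com out 10` would try to save up to 10 pages into `out/` along with `out/map.txt`. The `%seen` hash keeps the crawler from fetching the same URL twice. Capping the page count plays the same role as the tutorial's "10 or so websites" limit, so a run finishes instead of downloading everything.
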
In today's short class, we're going to take a look at some example Perl code, and we're going to write a web crawler using Perl. This is going to be a very simple piece of code. It goes to a website, downloads the raw HTML, iterates through that HTML to find the URLs, retrieves those URLs, and stores them as files. We're going to create a series of files, and in our initial iteration we're going to choose just 10 or so websites, so that we get to the end and don't download everything. If you want to play along at home, you can of course download as many websites as you have disk space for. We'll choose websites at random, and what we're going to write is a series of HTML files numbered 0.html, 1.html, 2.html, and so on, plus a map file that contains the number and the original URL.

So let's get started with the Perl code. We're going to write a program called webcrawler.pl. Here's our web crawler. We're going to start, as we've done before, with what's called the