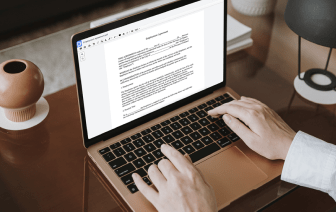

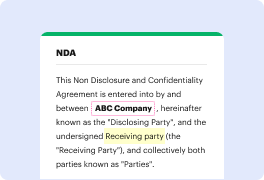

Unusual file formats in your day-to-day document management and editing can cause immediate confusion about how to modify them. You may need more than pre-installed software to edit files quickly and effectively. If you need to embed a sentence in an ODOC file or make any other basic change, choose a document editor with the features that let you work with ease. To handle every format, including ODOC, an editor that works well with all kinds of files is your best option.

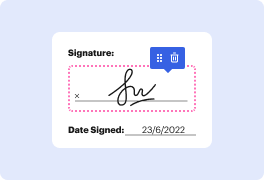

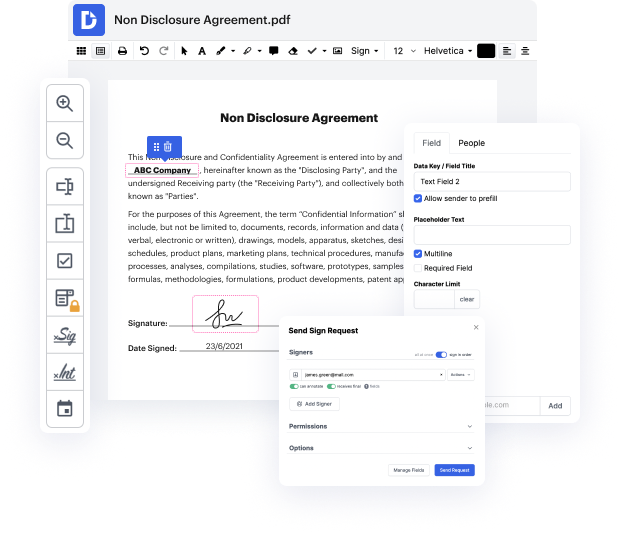

Try DocHub for effective file management, irrespective of your document’s format. It has powerful online editing tools that simplify your document management operations. You can easily create, edit, annotate, and share any document; all you need to access these features is an internet connection and an active DocHub profile. A single document solution is all you need. Do not waste time jumping between different programs for different files.

Enjoy the efficiency of working with a tool made specifically to simplify document processing. See how straightforward it is to edit any file, even if it is the very first time you have dealt with its format. Sign up for an account now and enhance your whole working process.

Today, we're exploring Google's Universal Sentence Encoder, a deep learning model that can be downloaded and used for natural language processing. The encoder is generally considered an improvement over traditional bag-of-words or word2vec methods. Bag of words encodes individual words, while word2vec produces embeddings by predicting words in context. Words that appear in similar contexts are mapped to similar vectors in the vector space, which makes the embeddings semantically meaningful. Models like the Universal Sentence Encoder predict the next set of words given prior words, with variations like BERT also offering encoding beyond individual words.
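To make the bag-of-words limitation concrete, here is a minimal sketch in plain Python. It is not the Universal Sentence Encoder itself. The example sentences and the helper names `bag_of_words` and `cosine_similarity` are illustrative assumptions, not part of any library. It shows that bag of words only rewards shared surface words, so a paraphrase with no words in common scores zero.

```python
import math
from collections import Counter


def bag_of_words(sentence, vocabulary):
    """Count how often each vocabulary word occurs in the sentence."""
    counts = Counter(sentence.lower().split())
    return [counts[word] for word in vocabulary]


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical example sentences: the first two share three words,
# the third is a paraphrase that shares none.
sentences = [
    "the film was great",
    "the movie was great",
    "that picture is excellent",
]

# The vocabulary is every distinct word seen across the sentences.
vocabulary = sorted({word for s in sentences for word in s.lower().split()})
vectors = [bag_of_words(s, vocabulary) for s in sentences]

sim_overlap = cosine_similarity(vectors[0], vectors[1])     # shared words
sim_paraphrase = cosine_similarity(vectors[0], vectors[2])  # no shared words

print(f"film vs. movie sentence:   {sim_overlap:.2f}")
print(f"film vs. picture sentence: {sim_paraphrase:.2f}")
```

Here the third sentence means roughly the same as the first, yet its bag-of-words similarity is zero because no words overlap. A sentence-level encoder is meant to place such paraphrases closer together in the vector space, which is the motivation described above.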